Reverse Engineering InternalCall Methods in .NET

Often, when attempting to reverse engineer a particular .NET method, I hit a wall: I dig far enough into the method’s implementation that I reach a private method marked [MethodImpl(MethodImplOptions.InternalCall)]. For example, I was interested in seeing how the .NET Framework loads PE files in memory from a byte array using the System.Reflection.Assembly.Load(Byte[]) method, so I opened it in ILSpy (my favorite .NET decompiler) to view its implementation.

The first thing it does is check whether you’re allowed to load a PE image at all, via the CheckLoadByteArraySupported method. Basically, if the executing assembly is a tile (Windows Store) app, you will not be allowed to load a PE file from a byte array. It then calls the RuntimeAssembly.nLoadImage method. If you click on this method in ILSpy, you will be disappointed to find that there does not appear to be a managed implementation.

All you get is a method signature and a MethodImpl attribute set to InternalCall. To begin to understand how we might be able to reverse engineer this method, we need to know the definition of InternalCall. According to MSDN documentation, InternalCall refers to a method call that “is internal, that is, it calls a method that is implemented within the common language runtime.” So it seems likely that this method is implemented as a native function in clr.dll.

To validate my assumption, let’s use WinDbg with sos.dll, the managed code debugger extension. My goal is to determine the native pointer for the nLoadImage method and see whether it leads to its respective native function in clr.dll. I will attach WinDbg to PowerShell, since PowerShell makes it easy to get the information needed by the SOS debugger extension. The first thing I need is the metadata token for the nLoadImage method, which will be used in WinDbg to resolve the method.
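For context on what that token is: a metadata token is a 32-bit value whose high byte identifies a metadata table and whose low three bytes are a row index into that table. The Python sketch below is an aside, not part of the original workflow. It splits the token that is passed to !Token2EE later in this post (0x0600278C). The `decode_token` helper and the `TABLE_NAMES` map are illustrative names of my own, and only a handful of table IDs are listed.

```python
# Illustrative helper (not part of SOS or PowerSploit): split a CLR metadata
# token into its table ID (high byte) and row ID (low 24 bits).
TABLE_NAMES = {
    0x01: "TypeRef",
    0x02: "TypeDef",
    0x04: "Field",
    0x06: "MethodDef",
    0x0A: "MemberRef",
}


def decode_token(token):
    table_id = (token >> 24) & 0xFF   # which metadata table the token points into
    row_id = token & 0x00FFFFFF       # 1-based row index within that table
    return TABLE_NAMES.get(table_id, "unknown"), row_id


table, rid = decode_token(0x0600278C)
print(f"table={table} rid=0x{rid:04X}")
```

The 0x06 prefix marks the token as a row in the MethodDef table, which is why a token on its own is enough to identify the method once the module is known.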

The Get-ILDisassembly function in PowerSploit conveniently provides the metadata token for the nLoadImage method. Now on to WinDbg for further analysis…

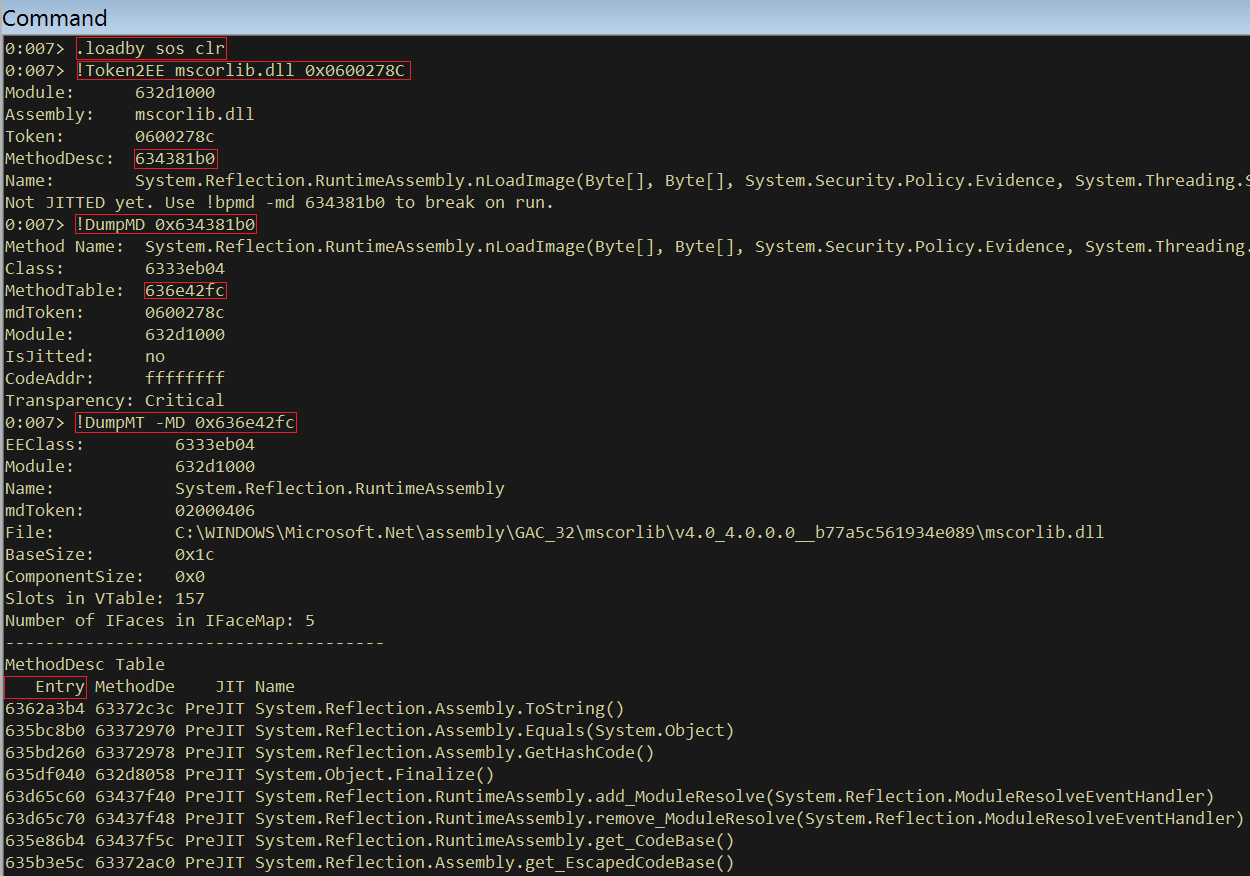

The following commands were executed:

1) .loadby sos clr

Loads the SOS debugging extension from the same directory that clr.dll was loaded from.

2) !Token2EE mscorlib.dll 0x0600278C

Retrieves the MethodDesc of the nLoadImage method. The first argument (mscorlib.dll) is the module that implements the nLoadImage method and the hex number is the metadata token retrieved from PowerShell.

3) !DumpMD 0x634381b0

Dumps information about the MethodDesc. This gives the address of the method table for the class that implements nLoadImage.

4) !DumpMT -MD 0x636e42fc

Dumps all of the methods of the System.Reflection.RuntimeAssembly class along with their respective native entry points. nLoadImage has the following entry:

635910a0 634381b0 NONE System.Reflection.RuntimeAssembly.nLoadImage(Byte[], Byte[], System.Security.Policy.Evidence, System.Threading.StackCrawlMark ByRef, Boolean, System.Security.SecurityContextSource)

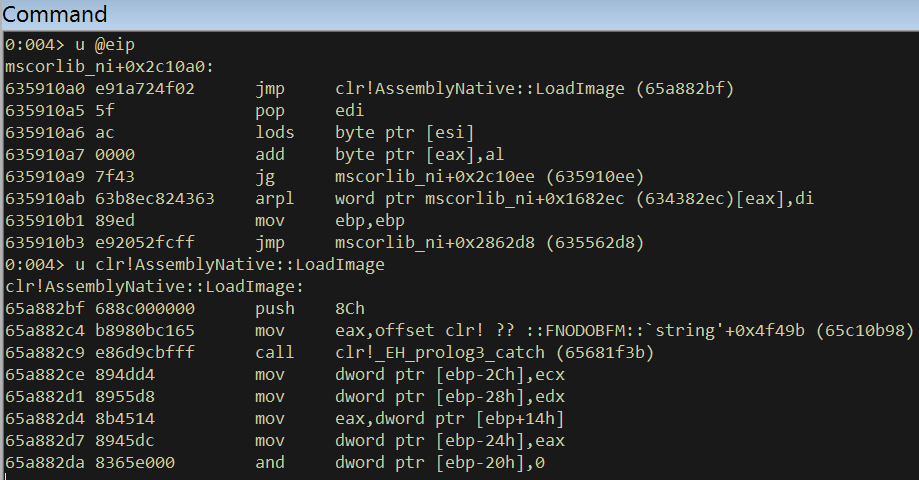
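To make the columns of that entry explicit, here is a small Python sketch that splits the row quoted above. It assumes the usual `!DumpMT -MD` column order of entry point, MethodDesc, JIT status, and method name. `parse_dumpmt_row` is a hypothetical helper of my own, not something SOS provides.

```python
# Hypothetical parser for a single row of `!DumpMT -MD` output.
# Assumed column order: Entry, MethodDesc, JIT status, method name.
def parse_dumpmt_row(row):
    # Split on whitespace at most three times so the signature stays intact.
    entry, method_desc, jit, name = row.split(None, 3)
    return {
        "entry": int(entry, 16),
        "method_desc": int(method_desc, 16),
        "jit": jit,
        "name": name,
    }


ROW = ("635910a0 634381b0 NONE System.Reflection.RuntimeAssembly.nLoadImage("
       "Byte[], Byte[], System.Security.Policy.Evidence, "
       "System.Threading.StackCrawlMark ByRef, Boolean, "
       "System.Security.SecurityContextSource)")

info = parse_dumpmt_row(ROW)
print(hex(info["entry"]), hex(info["method_desc"]), info["jit"])
```

The second column matches the MethodDesc address passed to !DumpMD earlier, and the first column is the entry point used for the breakpoint.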

So the native address for nLoadImage is 0x635910a0. Now set a breakpoint on that address (bp 635910a0), let the program continue execution (g), and use PowerShell to call the Load method on a bogus PE byte array.

PS C:\> [Reflection.Assembly]::Load(([Byte[]]@(1,2,3)))

You’ll then hit your breakpoint in WinDbg, and if you disassemble from where you landed, the function that implements the nLoadImage method will be crystal clear: clr!AssemblyNative::LoadImage.

You can now use IDA for further analysis and begin digging into the actual implementation of this InternalCall method!

After digging into some of the InternalCall methods in IDA, you’ll quickly see that most functions use the fastcall calling convention. On x86, this means that a static function receives its first two arguments in ECX and EDX. For an instance function, the ‘this’ pointer is passed in ECX (as is standard in thiscall) and the first argument in EDX. Any remaining arguments are pushed onto the stack.
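The register assignment described above can be modeled in a few lines of Python. This is a toy sketch that assumes every argument is a pointer or an integer of 32 bits or less. Floats, 64-bit values, large structs, and the order in which stack arguments are pushed are not modeled. `assign_fastcall` and the parameter labels are placeholders of my own, and nLoadImage is treated as a static method here.

```python
# Toy model of x86 fastcall argument placement as described in the post.
# Assumes all arguments are register-sized; stack push order is not modeled.
def assign_fastcall(args, is_instance=False):
    # For instance methods, 'this' occupies the first slot (ECX).
    slots = (["this"] + list(args)) if is_instance else list(args)
    registers = dict(zip(("ECX", "EDX"), slots))  # first two slots go in registers
    return registers, slots[2:]                   # everything else goes on the stack


# Labels are placeholders derived from the types in the nLoadImage signature.
NLOADIMAGE_ARGS = ["Byte[] #1", "Byte[] #2", "Evidence",
                   "StackCrawlMark ByRef", "Boolean", "SecurityContextSource"]

regs, stack = assign_fastcall(NLOADIMAGE_ARGS)
print(regs)
print(stack)
```

Under these assumptions, the two byte arrays would be the values to inspect in ECX and EDX when the breakpoint on the native entry point is hit.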

So for the handful of people who have wondered where the implementation of an InternalCall method lies, I hope this post has been helpful.