Fuzzing for known vulnerabilities with Rode0day & LAVA

It might seem strange to spend time and resources looking for known vulnerabilities, yet that is exactly the premise of the Rode0day competition, in which vulnerabilities are seeded into binaries with the tool LAVA. Stop and think for a moment about fuzzing and vulnerability discovery, and one of the primary challenges is not knowing whether your fuzzing technique is effective. One might infer that finding a lot of unique crashes in different code paths means the fuzzing strategy is effective… or was the code just poorly written? If you find few or no crashes, is your fuzzing strategy not working properly, or is the program just handling malformed input well? These questions are difficult to answer, and as a result it can be hard to know whether you are wasting resources or whether it’s just a matter of time before you find a vulnerability. Binaries with a known set of injected bugs give you a ground truth to measure against.

Enter Large-scale Automated Vulnerability Addition (LAVA), which aims to automate the injection of buffer overflow vulnerabilities while ensuring that the bugs are security critical, reachable from user input, and plentiful. The presentation is very interesting and I highly recommend watching the full video. TL;DR: the LAVA developers injected 2000 flaws into a binary, and an open source fuzzer and a symbolic execution tool found fewer than 2% of the bugs! It should be noted, however, that their tests were purely academic and the fuzzing runs were relatively short. With an unsophisticated approach, low detection rates are to be expected.
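Because the injected bugs are known in advance, measuring effectiveness comes down to a simple ratio: bugs triggered divided by bugs injected. Here is a minimal Python sketch of that bookkeeping. The `detection_rate` helper and the bug IDs are hypothetical, made up for illustration; they are not part of LAVA or Rode0day.

```python
def detection_rate(injected_ids, triggered_ids):
    """Return the fraction of injected bugs that the fuzzer triggered."""
    injected = set(injected_ids)
    if not injected:
        raise ValueError("no injected bugs to score against")
    # Ignore any crash that does not map to a known injected bug.
    found = injected & set(triggered_ids)
    return len(found) / len(injected)


# Hypothetical numbers: 2000 injected bugs, 37 of them triggered.
injected = range(1, 2001)
triggered = range(1, 38)
rate = detection_rate(injected, triggered)
print(f"{rate:.2%} of injected bugs found")
```

Scoring against the injected set, rather than counting raw crashes, is what separates "the fuzzer is weak" from "the program is robust".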

In the Rode0day competition, challenge binaries are released every month. The challenges are available with source code, so it’s possible to compile them with AFL’s instrumentation and get started (relatively) quickly. Let’s begin by setting up a fuzzer against one of the prior challenges. For the purposes of the competition, AFL will be our go-to fuzzer. I’ll be using an AWS EC2 instance running Ubuntu 18.04, where AFL is available from the apt repository, so first run:

$ sudo apt-get install afl

Once AFL is installed, we can grab a target binary from the competition:

$ wget https://rode0day.mit.edu/static/archive/Beta.tar.gz

$ tar -zxvf Beta.tar.gz

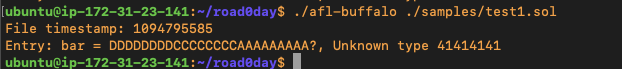

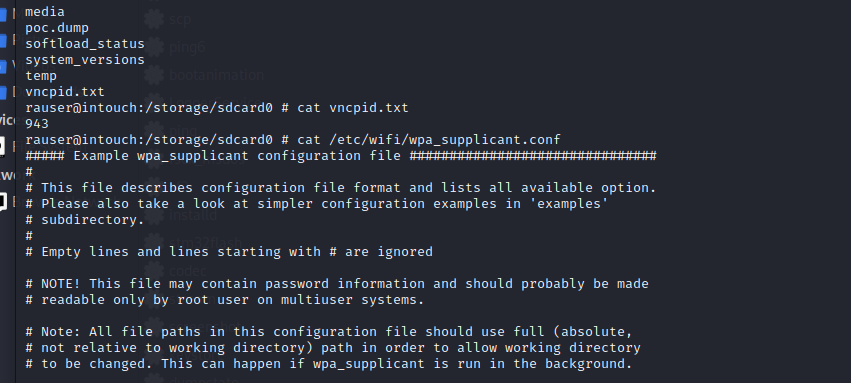

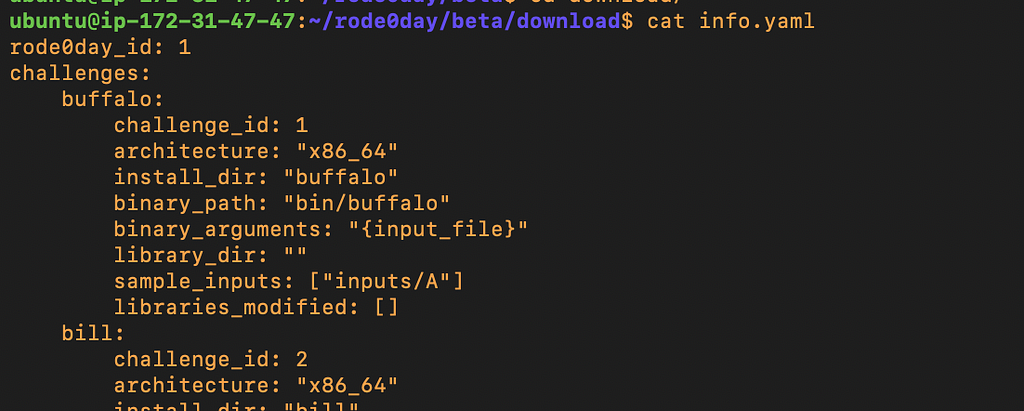

I chose to start with the beta challenges, but you can choose any challenge from the list. The included info.yaml file describes each challenge, and the first one, “buffalo”, looks like a good place to start since it takes one argument directly from the command line.

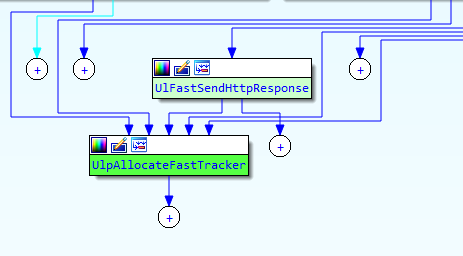

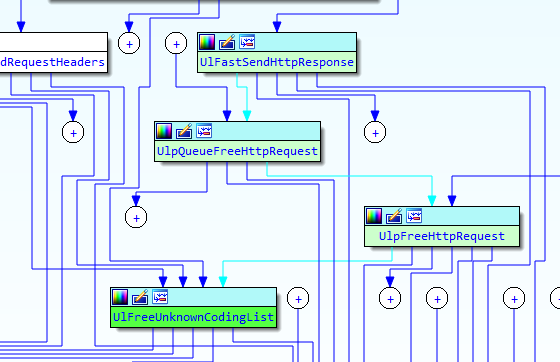

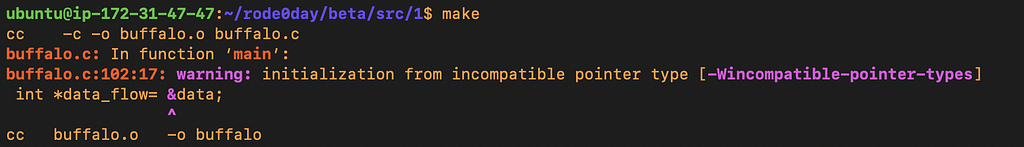

Next we want to compile the target binary with AFL instrumentation, but before we do that, let’s see if it will compile without modifications:

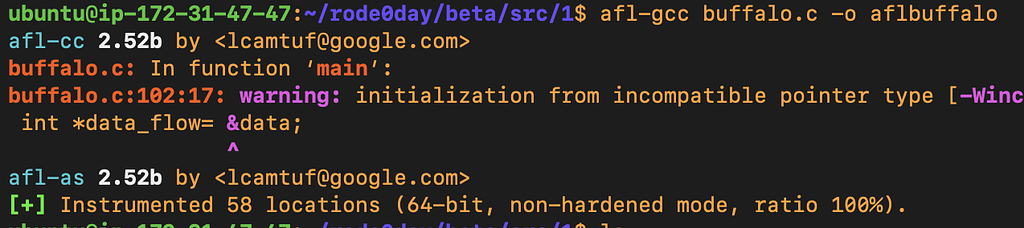

Even though there were warnings, the binary compiles, and our compiled binary behaves the same as the included one. We should be ready to start fuzzing with AFL, so let’s compile with instrumentation. We can use afl-gcc directly or modify the Makefile.

ubuntu@ip-172-31-47-47:~/rode0day/beta/src/1$ afl-gcc buffalo.c -o aflbuffalo

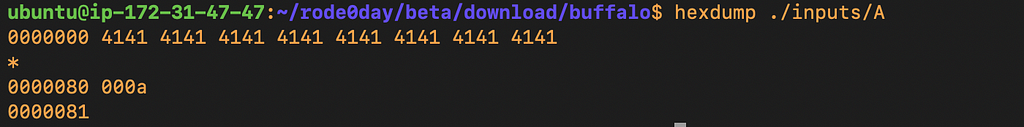

Next we can review the included input corpus for buffalo, which in this case is nowhere near as exciting as, say, a corpus of JPEGs.

Our corpus contains only one file, which holds a run of A’s and not much else.

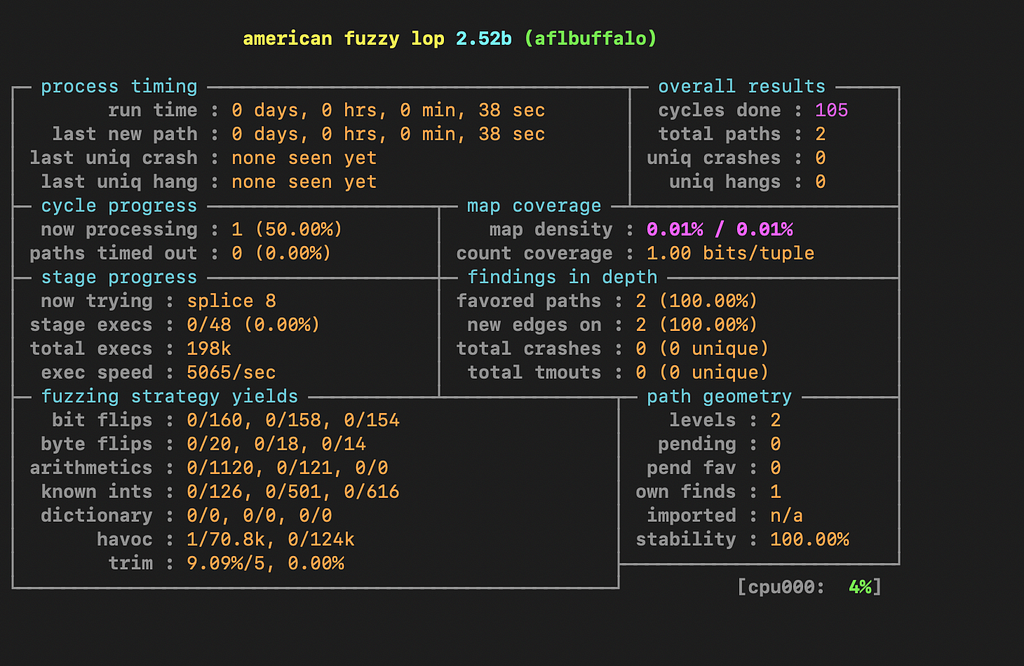

Now we can launch AFL with the command:

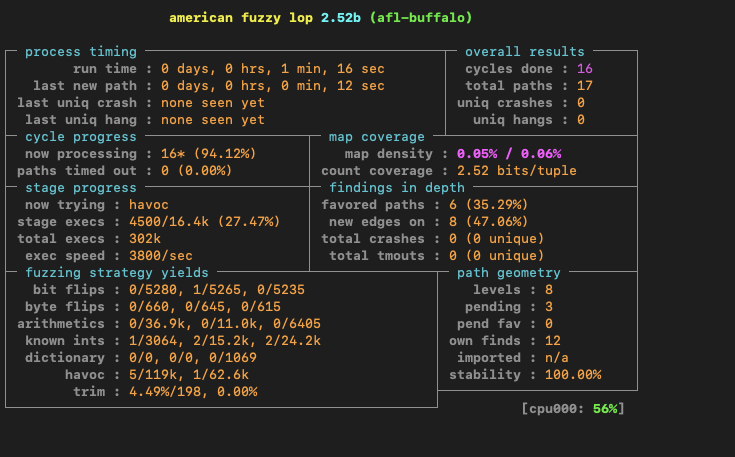

afl-fuzz -i ../../test/ -o ./crashes/ ./aflbuffalo @@

Here -i is the input directory containing our seed files, -o is the output directory where AFL stores its findings (including crashes), ./aflbuffalo is the instrumented program to test, and @@ is a placeholder that AFL replaces with the path of the input file it generates for each run.
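To make the @@ placeholder concrete, here is a small Python sketch that assembles the same afl-fuzz command and then mimics the substitution AFL performs on the target's arguments. The helper names `build_afl_command` and `expand_placeholder` are my own, and the test case path is illustrative only; AFL chooses the real path itself.

```python
import shlex


def build_afl_command(input_dir, output_dir, target, target_args=("@@",)):
    """Build the afl-fuzz argument list used in this post."""
    return ["afl-fuzz", "-i", input_dir, "-o", output_dir, target, *target_args]


def expand_placeholder(target_argv, testcase_path):
    """Mimic AFL's handling of @@: swap it for the current test case path."""
    return [arg.replace("@@", testcase_path) for arg in target_argv]


cmd = build_afl_command("../../test/", "./crashes/", "./aflbuffalo")
print(" ".join(shlex.quote(part) for part in cmd))

# What the target effectively receives on each run (illustrative path).
target_argv = expand_placeholder(["./aflbuffalo", "@@"], "./crashes/.cur_input")
print(target_argv)
```

Without @@, AFL feeds each test case to the target on standard input instead, which would not work for a program like buffalo that reads its input from a command-line argument.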

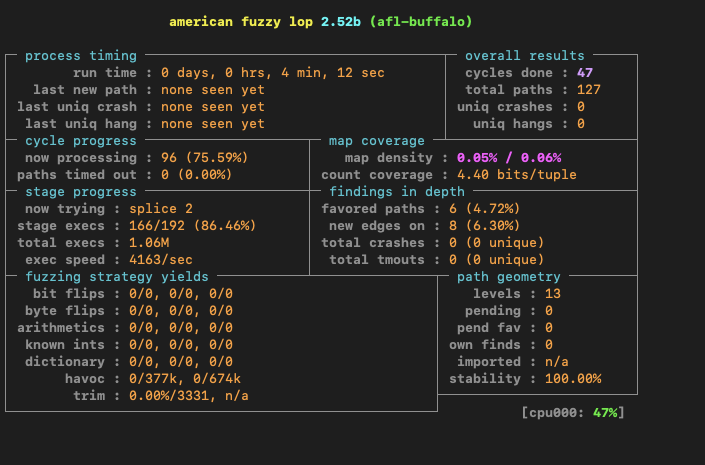
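The counters on AFL’s status screen, including total paths, are also written to a plain-text fuzzer_stats file in the output directory, which makes it easy to check progress from a script. Below is a minimal Python sketch of a parser for its `key : value` lines. The sample text is illustrative rather than real output from this run, and the field names are the ones I know from AFL 2.x; other versions or forks may name them differently.

```python
def parse_fuzzer_stats(text):
    """Parse the 'key : value' lines of an AFL fuzzer_stats file into a dict."""
    stats = {}
    for line in text.splitlines():
        # Split on the first colon only, so values may contain colons too.
        key, sep, value = line.partition(":")
        if sep:
            stats[key.strip()] = value.strip()
    return stats


# Illustrative sample, not real output from the run in this post.
sample = """\
execs_done        : 1250000
paths_total       : 2
unique_crashes    : 0
"""
stats = parse_fuzzer_stats(sample)
print("paths:", stats["paths_total"], "crashes:", stats["unique_crashes"])
```

With the command used above, the file to read would be ./crashes/fuzzer_stats.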

After letting the fuzzer run for some time with only one input file, we won’t see total paths increase significantly, which means we are not exploring and testing new code paths. Adding just one new file to the input directory resulted in another code path being hit. This points to the overall importance of having a large but efficient corpus. I’ll have a follow-up blog post about creating a corpus for this challenge binary.
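As a starting point for growing the corpus, here is a short Python sketch that writes a few small, distinct seed files into a directory AFL could then be pointed at with -i. The `write_seeds` helper, the file names, and the seed contents are all hypothetical; which inputs actually reach new code in buffalo is something you would have to find by experiment.

```python
import os
import tempfile


def write_seeds(directory, seeds):
    """Write each name -> bytes pair as a separate seed file; return the paths."""
    os.makedirs(directory, exist_ok=True)
    paths = []
    for name, data in seeds.items():
        path = os.path.join(directory, name)
        with open(path, "wb") as handle:
            handle.write(data)
        paths.append(path)
    return paths


# Hypothetical seeds: small and deliberately different from one another.
seeds = {
    "all_a.txt": b"A" * 16,
    "mixed.txt": b"A1b2\n",
    "newline.txt": b"\n",
}
corpus_dir = os.path.join(tempfile.mkdtemp(), "seeds")
written = write_seeds(corpus_dir, seeds)
print(sorted(os.path.basename(p) for p in written))
```

Keeping each seed small matters as much as adding more of them, since large or redundant files slow the fuzzer down without adding coverage.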

If you liked this blog post, more are available on our blog redblue42.com